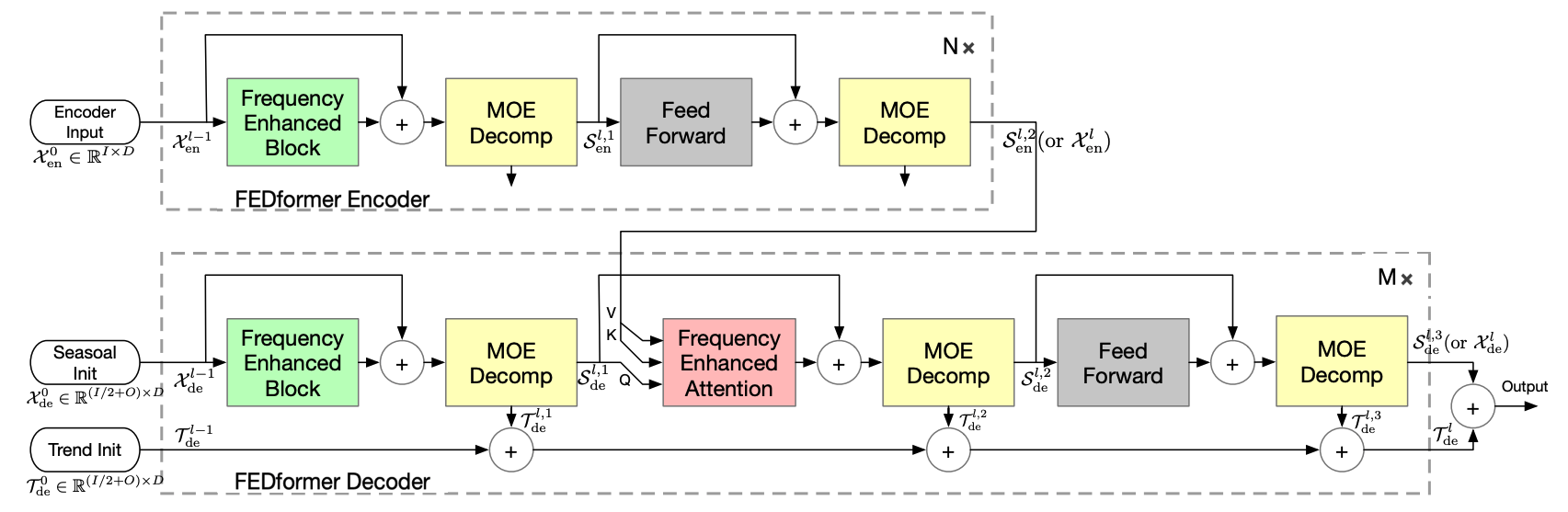

The FEDformer model tackles the challenge of finding reliable dependencies in the intricate temporal patterns of long-horizon forecasting. The architecture has the following distinctive features:

- Built-in progressive decomposition into trend and seasonal components, based on a moving average filter.
- Frequency Enhanced Block and Frequency Enhanced Attention, which perform attention on a sparse representation in a basis such as the Fourier transform.
- Classic encoder-decoder proposed by Vaswani et al. (2017) with a multi-head attention mechanism.
- Encoded autoregressive features obtained from a convolution network.
- Absolute positional embeddings obtained from calendar features.
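The moving-average decomposition named in the first feature can be illustrated with a minimal, standard-library sketch. It assumes a centered window with edge replication so the trend has the same length as the input; the function names `moving_average` and `decompose` are hypothetical and are not the library's API. In the model, a step of this kind is applied repeatedly inside the encoder and decoder layers, which is what makes the decomposition progressive.

```python
def moving_average(series, window):
    """Centered moving average; edges are padded by repeating the end values."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = (window - 1) // 2
    padded = [series[0]] * half + list(series) + [series[-1]] * half
    # One output value per input value: average over each sliding window.
    return [sum(padded[i:i + window]) / window for i in range(len(series))]


def decompose(series, window=25):
    """Split a series into (seasonal, trend), where seasonal = series - trend."""
    trend = moving_average(series, window)
    seasonal = [x - t for x, t in zip(series, trend)]
    return seasonal, trend


# A constant series is all trend; a linear ramp has no residual away from the edges.
flat = [5.0] * 10
flat_seasonal, flat_trend = decompose(flat, window=5)

ramp = [float(i) for i in range(10)]
ramp_seasonal, ramp_trend = decompose(ramp, window=3)
```

The default of 25 mirrors the `MovingAvg_window` default listed in the parameter table; an odd window keeps the average centered on each time step.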

1. FEDformer

FEDformer

Bases: BaseModel

| Name | Type | Description | Default |
|---|---|---|---|
| h | int | forecast horizon. | required |
| input_size | int | maximum sequence length for truncated train backpropagation. | required |
| stat_exog_list | List[str] | static exogenous columns. | None |
| hist_exog_list | List[str] | historic exogenous columns. | None |
| futr_exog_list | List[str] | future exogenous columns. | None |
| decoder_input_size_multiplier | float | multiplier for the input size of the decoder. | 0.5 |
| version | str | version of the model. | 'Fourier' |
| modes | int | number of modes for the Fourier block. | 64 |
| mode_select | str | method to select the modes for the Fourier block. | 'random' |
| hidden_size | int | units of embeddings and encoders. | 128 |
| dropout | float | dropout rate applied throughout the architecture. | 0.05 |
| n_head | int | number of heads in the multi-head attention. | 8 |
| conv_hidden_size | int | channels of the convolutional encoder. | 32 |
| activation | str | activation from ['ReLU', 'Softplus', 'Tanh', 'SELU', 'LeakyReLU', 'PReLU', 'Sigmoid', 'GELU']. | 'gelu' |
| encoder_layers | int | number of layers for the TCN encoder. | 2 |
| decoder_layers | int | number of layers for the MLP decoder. | 1 |
| MovingAvg_window | int | window size for the moving average filter. | 25 |
| loss | PyTorch module | instantiated train loss class from losses collection. | MAE() |
| valid_loss | PyTorch module | instantiated validation loss class from losses collection. | None |
| max_steps | int | maximum number of training steps. | 5000 |
| learning_rate | float | learning rate between (0, 1). | 0.0001 |
| num_lr_decays | int | number of learning rate decays, evenly distributed across max_steps. | -1 |
| early_stop_patience_steps | int | number of validation iterations before early stopping. | -1 |
| val_monitor | str | metric to monitor for early stopping. Valid options: "ptl/val_loss", "valid_loss", "train_loss". | 'ptl/val_loss' |
| val_check_steps | int | number of training steps between every validation loss check. | 100 |
| batch_size | int | number of different series in each batch. | 32 |
| valid_batch_size | int | number of different series in each validation and test batch; if None, uses batch_size. | None |
| windows_batch_size | int | number of windows to sample in each training batch, default uses all. | 1024 |
| inference_windows_batch_size | int | number of windows to sample in each inference batch. | 1024 |
| start_padding_enabled | bool | if True, the model will pad the time series with zeros at the beginning, by input size. | False |
| training_data_availability_threshold | Union[float, List[float]] | minimum fraction of valid data points required for training windows. A single float applies to both insample and outsample; a list of two floats specifies [insample_fraction, outsample_fraction]. The default 0.0 allows windows with only 1 valid data point (current behavior). | 0.0 |
| step_size | int | step size between each window of temporal data. | 1 |
| scaler_type | str | type of scaler for temporal input normalization; see temporal scalers. | 'identity' |
| random_seed | int | random seed for the pytorch initializer and numpy generators. | 1 |
| drop_last_loader | bool | if True, TimeSeriesDataLoader drops the last non-full batch. | False |
| alias | str | optional, custom name of the model. | None |
| optimizer | Subclass of 'torch.optim.Optimizer' | optional, user-specified optimizer instead of the default choice (Adam). | None |
| optimizer_kwargs | dict | optional, parameters used by the user-specified optimizer. | None |
| lr_scheduler | Subclass of 'torch.optim.lr_scheduler.LRScheduler' | optional, user-specified lr_scheduler instead of the default choice (StepLR). | None |
| lr_scheduler_kwargs | dict | optional, parameters used by the user-specified lr_scheduler. | None |
| dataloader_kwargs | dict | optional, parameters passed into the PyTorch Lightning dataloader by the TimeSeriesDataLoader. | None |
| \*\*trainer_kwargs | int | keyword trainer arguments inherited from PyTorch Lightning's trainer. | |
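To make the `modes` and `mode_select` parameters more concrete, here is a minimal sketch of how a subset of frequency indices could be chosen for a Fourier block. It is an assumption-laden illustration, not the library's code: the helper name `select_frequency_modes`, the `seed` argument, and the fallback option `"low"` (keep the lowest frequencies) are hypothetical. Only the `'random'` default is documented in the table above.

```python
import random


def select_frequency_modes(seq_len, modes=64, mode_select="random", seed=None):
    """Return a sorted list of frequency indices to keep (illustrative only)."""
    n_freqs = seq_len // 2             # usable frequencies of a real-valued series
    modes = min(modes, n_freqs)        # cannot keep more modes than exist
    if mode_select == "random":
        rng = random.Random(seed)
        index = rng.sample(range(n_freqs), modes)  # sample without replacement
    else:
        # Assumed fallback: keep the lowest `modes` frequencies.
        index = list(range(modes))
    return sorted(index)


# With a short input the requested 64 modes are capped at seq_len // 2.
low_modes = select_frequency_modes(24, modes=64, mode_select="low")
random_modes = select_frequency_modes(96, modes=4, mode_select="random", seed=0)
```

Keeping only a few modes is what makes the frequency-domain representation sparse: the attention and block operations then act on `modes` coefficients rather than on every time step.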

FEDformer.fit

The fit method optimizes the neural network’s weights using the initialization parameters (learning_rate, windows_batch_size, …) and the loss function defined during initialization. Within fit we use a PyTorch Lightning Trainer that inherits the initialization’s self.trainer_kwargs; to customize its inputs, see PL’s trainer arguments.

The method is designed to be compatible with SKLearn-like classes, and in particular with the StatsForecast library.

By default the model does not save training checkpoints, to conserve disk space; to enable them, set enable_checkpointing=True in __init__.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| dataset | TimeSeriesDataset | NeuralForecast’s TimeSeriesDataset, see documentation. | required |
| val_size | int | Validation size for temporal cross-validation. | 0 |
| random_seed | int | Random seed for pytorch initializer and numpy generators, overwrites model.init’s. | None |
| test_size | int | Test size for temporal cross-validation. | 0 |

Returns:

| Type | Description |
|---|---|
| None | |

FEDformer.predict

Trainer execution of predict_step.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| dataset | TimeSeriesDataset | NeuralForecast’s TimeSeriesDataset, see documentation. | required |
| test_size | int | Test size for temporal cross-validation. | None |
| step_size | int | Step size between each window. | 1 |
| random_seed | int | Random seed for pytorch initializer and numpy generators, overwrites model.init’s. | None |
| quantiles | list | Target quantiles to predict. | None |
| h | int | Prediction horizon; if None, uses the model’s fitted horizon. | None |
| explainer_config | dict | Configuration for explanations. | None |
| \*\*data_module_kwargs | dict | PL’s TimeSeriesDataModule args, see documentation. | |

Returns:

| Type | Description |
|---|---|
| None | |

Usage Example
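The following is a hedged sketch of how the model might be trained and used through the `NeuralForecast` wrapper. It assumes the `neuralforecast` package is installed and that `AirPassengersPanel`, `augment_calendar_df`, and `MAE` are importable from the paths shown; check these against your installed version. The hyperparameter values are illustrative, not tuned recommendations, and the names `MODEL_KWARGS` and `run_example` are hypothetical.

```python
# Hyperparameter names come from the parameter table; values are illustrative.
MODEL_KWARGS = dict(
    h=12,
    input_size=24,
    modes=64,
    hidden_size=64,
    conv_hidden_size=128,
    n_head=8,
    scaler_type="robust",
    learning_rate=1e-3,
    max_steps=500,
    batch_size=2,
    windows_batch_size=32,
    val_check_steps=50,
    early_stop_patience_steps=2,
)


def run_example(freq="ME"):
    """Train FEDformer on the AirPassengers panel and forecast the last 12 months."""
    # Imported lazily so that defining this function does not require
    # neuralforecast to be installed.
    from neuralforecast import NeuralForecast
    from neuralforecast.losses.pytorch import MAE
    from neuralforecast.models import FEDformer
    from neuralforecast.utils import AirPassengersPanel, augment_calendar_df

    # Add calendar features, used here as future exogenous variables.
    panel, calendar_cols = augment_calendar_df(df=AirPassengersPanel, freq="M")

    # Hold out the last 12 monthly observations of every series for testing.
    cutoff = panel["ds"].values[-12]
    train_df = panel[panel["ds"] < cutoff]
    test_df = panel[panel["ds"] >= cutoff].reset_index(drop=True)

    model = FEDformer(loss=MAE(), futr_exog_list=calendar_cols, **MODEL_KWARGS)
    nf = NeuralForecast(models=[model], freq=freq)
    nf.fit(df=train_df, val_size=12)       # val_size > 0 is needed for early stopping
    return nf.predict(futr_df=test_df)     # futr_df supplies the calendar features
```

Calling `run_example()` starts training, so the sketch leaves that call to the reader. On older pandas versions the month-end frequency alias may need to be `"M"` instead of `"ME"`.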

2. Auxiliary functions

AutoCorrelationLayer

Bases: Module

Auto-correlation layer.

LayerNorm

Bases: Module

Specially designed layer normalization for the seasonal part.

Decoder

Bases: Module

FEDformer decoder.

DecoderLayer

Bases: Module

FEDformer decoder layer with the progressive decomposition architecture.

Encoder

Bases: Module

FEDformer encoder.

EncoderLayer

Bases: Module

FEDformer encoder layer with the progressive decomposition architecture.

FourierCrossAttention

Bases: Module

Fourier cross-attention layer.

FourierBlock

Bases: Module

Fourier block.
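As a rough intuition for what a Fourier block operates on, the sketch below transforms a series to the frequency domain, keeps only a chosen set of modes, and transforms back. It is a simplified illustration under stated assumptions: the real layer also applies learned complex weights to the kept modes, which are omitted here, and the helper names `dft`, `inverse_dft`, and `keep_modes` are hypothetical. A naive discrete Fourier transform from the standard library is used to keep the example self-contained.

```python
import cmath
import math


def dft(x):
    """Naive O(n^2) discrete Fourier transform of a real or complex sequence."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def inverse_dft(spectrum):
    """Inverse of `dft`, returning complex samples."""
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
        for t in range(n)
    ]


def keep_modes(x, modes):
    """Zero every frequency except `modes` (and their conjugate partners)."""
    n = len(x)
    spectrum = dft(x)
    kept = set()
    for k in modes:
        kept.add(k % n)
        kept.add((-k) % n)  # the mirrored frequency keeps the output real-valued
    filtered = [spectrum[k] if k in kept else 0j for k in range(n)]
    return [value.real for value in inverse_dft(filtered)]


# A slow cosine plus a faster one; keeping only mode 1 recovers the slow part.
n = 16
slow = [math.cos(2 * math.pi * t / n) for t in range(n)]
mixed = [s + 0.5 * math.cos(2 * math.pi * 5 * t / n) for t, s in enumerate(slow)]
recovered = keep_modes(mixed, modes=[1])
```

In practice a fast Fourier transform would replace the naive loops; the point of the sketch is only that a handful of modes can carry most of a series' structure.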