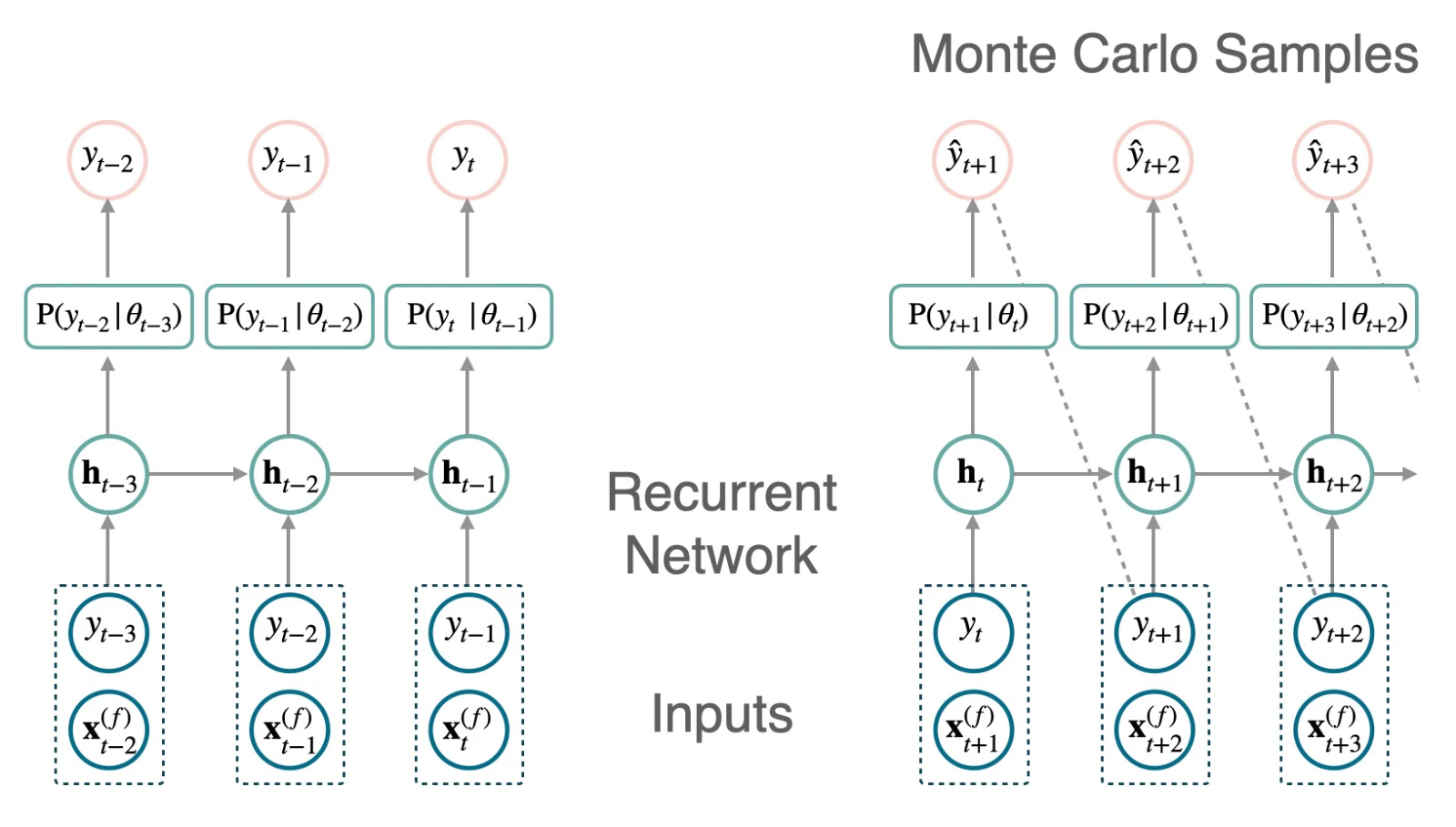

The DeepAR model produces probabilistic forecasts based on an autoregressive recurrent neural network optimized on panel data using cross-learning. DeepAR obtains its forecast distribution using a Markov Chain Monte Carlo sampler with the following conditional probability:

$$\mathbb{P}(\mathbf{y}_{[t+1:t+H]} \mid \mathbf{y}_{[:t]},\; \mathbf{x}^{(f)}_{[:t+H]},\; \mathbf{x}^{(s)})$$

where $\mathbf{x}^{(s)}$ are static exogenous inputs and $\mathbf{x}^{(f)}_{[:t+H]}$ are future exogenous inputs available at the time of the prediction. The predictions are obtained by transforming the hidden states $\mathbf{h}_{t}$ into predictive distribution parameters $\theta_{t}$, and then generating samples $\hat{\mathbf{y}}_{[t+1:t+H]}$ through Monte Carlo sampling trajectories.

References

- David Salinas, Valentin Flunkert, Jan Gasthaus, Tim Januschowski (2020). “DeepAR: Probabilistic forecasting with autoregressive recurrent networks”. International Journal of Forecasting.

- Alexander Alexandrov et al. (2020). “GluonTS: Probabilistic and Neural Time Series Modeling in Python”. Journal of Machine Learning Research.

Exogenous Variables, Losses, and Parameters Availability

Given the sampling procedure during inference, DeepAR only supports `DistributionLoss` as training loss.

Note that DeepAR generates a non-parametric forecast distribution using Monte Carlo. We use this sampling procedure also during validation to make it closer to the inference procedure. Therefore, only the `MQLoss` is available for validation.

Additionally, Monte Carlo implies that historic exogenous variables are not available for the model.
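The Monte Carlo procedure described above can be illustrated with a small, library-free sketch. It is not DeepAR's implementation: the recurrent network is replaced by a hypothetical toy rule (`0.9 * y_prev + 1.0` with a fixed scale), and the names `sample_trajectories`, `empirical_quantile` and `forecast_quantiles` are introduced here for illustration only. The point is the mechanism: each sampled value is fed back as the next input, and quantiles are read off the resulting trajectories rather than from a closed-form distribution.

```python
import math
import random


def sample_trajectories(last_value, horizon, n_samples, seed=1):
    """Draw Monte Carlo trajectories from a toy autoregressive Gaussian model."""
    rng = random.Random(seed)
    trajectories = []
    for _ in range(n_samples):
        y_prev, path = last_value, []
        for _ in range(horizon):
            # Hypothetical stand-in for the network: map the previous value
            # to the distribution parameters (mean, scale) of the next step.
            mean, scale = 0.9 * y_prev + 1.0, 0.5
            y_prev = rng.gauss(mean, scale)  # sample, then feed it back in
            path.append(y_prev)
        trajectories.append(path)
    return trajectories


def empirical_quantile(values, q):
    """Linear-interpolation quantile of a list of samples, with 0 <= q <= 1."""
    ordered = sorted(values)
    pos = q * (len(ordered) - 1)
    low, high = math.floor(pos), math.ceil(pos)
    return ordered[low] + (ordered[high] - ordered[low]) * (pos - low)


def forecast_quantiles(trajectories, quantiles):
    """Per-step quantiles across trajectories: {q: [value per horizon step]}."""
    steps = list(zip(*trajectories))  # regroup samples by horizon step
    return {q: [empirical_quantile(step, q) for step in steps] for q in quantiles}


paths = sample_trajectories(last_value=10.0, horizon=3, n_samples=100)
bands = forecast_quantiles(paths, quantiles=[0.1, 0.5, 0.9])
```

Here `n_samples` plays the role of `trajectory_samples`: more trajectories give smoother empirical quantiles at a higher inference cost.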

1. DeepAR

DeepAR

Bases: BaseModel

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| `h` | `int` | Forecast horizon. | required |
| `input_size` | `int` | maximum sequence length for truncated train backpropagation. Default -1 uses 3 * horizon. | `-1` |
| `h_train` | `int` | maximum sequence length for truncated train backpropagation. Default 1. | `1` |
| `lstm_n_layers` | `int` | number of LSTM layers. | `2` |
| `lstm_hidden_size` | `int` | LSTM hidden size. | `128` |
| `lstm_dropout` | `float` | LSTM dropout. | `0.1` |
| `decoder_hidden_layers` | `int` | number of decoder MLP hidden layers. Default: 0 for linear layer. | `0` |
| `decoder_hidden_size` | `int` | decoder MLP hidden size. Default: 0 for linear layer. | `0` |
| `trajectory_samples` | `int` | number of Monte Carlo trajectories during inference. | `100` |
| `stat_exog_list` | `str list` | static exogenous columns. | `None` |
| `hist_exog_list` | `str list` | historic exogenous columns. | `None` |
| `futr_exog_list` | `str list` | future exogenous columns. | `None` |
| `exclude_insample_y` | `bool` | the model skips the autoregressive features `y[t-input_size:t]` if True. | `False` |
| `loss` | PyTorch module | instantiated train loss class from losses collection. | `DistributionLoss(distribution='StudentT', level=[80, 90], return_params=False)` |
| `valid_loss` | PyTorch module | instantiated valid loss class from losses collection. | `MAE()` |
| `max_steps` | `int` | maximum number of training steps. | `1000` |
| `learning_rate` | `float` | Learning rate between (0, 1). | `0.001` |
| `num_lr_decays` | `int` | Number of learning rate decays, evenly distributed across max_steps. | `3` |
| `early_stop_patience_steps` | `int` | Number of validation iterations before early stopping. | `-1` |
| `val_monitor` | `str` | metric to monitor for early stopping. Valid options: `'ptl/val_loss'`, `'valid_loss'`, `'train_loss'`. | `'ptl/val_loss'` |
| `val_check_steps` | `int` | Number of training steps between every validation loss check. | `100` |
| `batch_size` | `int` | number of different series in each batch. | `32` |
| `valid_batch_size` | `int` | number of different series in each validation and test batch, if None uses batch_size. | `None` |
| `windows_batch_size` | `int` | number of windows to sample in each training batch, default uses all. | `1024` |
| `inference_windows_batch_size` | `int` | number of windows to sample in each inference batch, -1 uses all. | `-1` |
| `start_padding_enabled` | `bool` | if True, the model will pad the time series with zeros at the beginning, by input size. | `False` |
| `training_data_availability_threshold` | `Union[float, List[float]]` | minimum fraction of valid data points required for training windows. Single float applies to both insample and outsample; list of two floats specifies [insample_fraction, outsample_fraction]. Default 0.0 allows windows with only 1 valid data point (current behavior). | `0.0` |
| `step_size` | `int` | step size between each window of temporal data. | `1` |
| `scaler_type` | `str` | type of scaler for temporal inputs normalization, see temporal scalers. | `'identity'` |
| `random_seed` | `int` | random_seed for pytorch initializer and numpy generators. | `1` |
| `drop_last_loader` | `bool` | if True TimeSeriesDataLoader drops last non-full batch. | `False` |
| `alias` | `str` | optional, custom name of the model. | `None` |
| `optimizer` | Subclass of `torch.optim.Optimizer` | optional, user specified optimizer instead of the default choice (Adam). | `None` |
| `optimizer_kwargs` | `dict` | optional, list of parameters used by the user specified optimizer. | `None` |
| `lr_scheduler` | Subclass of `torch.optim.lr_scheduler.LRScheduler` | optional, user specified lr_scheduler instead of the default choice (StepLR). | `None` |
| `lr_scheduler_kwargs` | `dict` | optional, list of parameters used by the user specified lr_scheduler. | `None` |
| `dataloader_kwargs` | `dict` | optional, list of parameters passed into the PyTorch Lightning dataloader by the TimeSeriesDataLoader. | `None` |
| `**trainer_kwargs` | `int` | keyword trainer arguments inherited from PyTorch Lightning’s trainer. | |
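As a rough illustration of how these constructor parameters fit together, the sketch below collects a few of them in a dictionary and builds the model lazily. It assumes the `neuralforecast` package is installed and that `DeepAR`, `DistributionLoss` and `MQLoss` are importable from `neuralforecast.models` and `neuralforecast.losses.pytorch`. The helper `build_deepar`, the name `DEEPAR_KWARGS` and the specific values (horizon 12, 200 steps) are hypothetical choices for this example, not recommendations.

```python
# Illustrative hyperparameters only; the keys match the parameter table.
DEEPAR_KWARGS = {
    "h": 12,                    # forecast horizon
    "input_size": 24,           # explicit context length (the -1 default uses 3 * h)
    "lstm_n_layers": 2,
    "lstm_hidden_size": 128,
    "lstm_dropout": 0.1,
    "trajectory_samples": 100,  # Monte Carlo trajectories during inference
    "max_steps": 200,
    "random_seed": 1,
}


def build_deepar(**overrides):
    """Instantiate DeepAR on demand so the config can be inspected on its own.

    Do not pass `loss` or `valid_loss` as overrides: they are fixed below.
    """
    # Assumed import paths for the neuralforecast package.
    from neuralforecast.losses.pytorch import DistributionLoss, MQLoss
    from neuralforecast.models import DeepAR

    kwargs = {**DEEPAR_KWARGS, **overrides}
    return DeepAR(
        # Training loss must be a DistributionLoss; validation uses MQLoss.
        loss=DistributionLoss(distribution="StudentT", level=[80, 90], return_params=False),
        valid_loss=MQLoss(level=[80, 90]),
        **kwargs,
    )
```

Calling `build_deepar(max_steps=50)` would return a model with the shorter training budget and the remaining values unchanged.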

DeepAR.fit

The `fit` method optimizes the neural network’s weights using the initialization parameters (`learning_rate`, `windows_batch_size`, …) and the loss function defined during initialization.

Within `fit` we use a PyTorch Lightning `Trainer` that inherits the initialization’s `self.trainer_kwargs`. To customize its inputs, see PL’s trainer arguments.

The method is designed to be compatible with SKLearn-like classes, and in particular with the StatsForecast library.

By default the model does not save training checkpoints, to protect disk memory. To get them, set `enable_checkpointing=True` in `__init__`.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| `dataset` | `TimeSeriesDataset` | NeuralForecast’s TimeSeriesDataset, see documentation. | required |
| `val_size` | `int` | Validation size for temporal cross-validation. | `0` |
| `random_seed` | `int` | Random seed for pytorch initializer and numpy generators, overwrites model.init’s. | `None` |
| `test_size` | `int` | Test size for temporal cross-validation. | `0` |

Returns:

| Type | Description |
|---|---|
| `None` | |
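To make `val_size` and `test_size` concrete, here is a minimal sketch of a chronological split for a single series. It assumes the held-out blocks are taken from the end of the series, with the test block last and the validation block immediately before it. The function `temporal_split` is a hypothetical helper for illustration and is not part of the library.

```python
def temporal_split(series, val_size=0, test_size=0):
    """Split one series chronologically into (train, validation, test)."""
    if val_size < 0 or test_size < 0:
        raise ValueError("val_size and test_size must be non-negative")
    if val_size + test_size >= len(series):
        raise ValueError("held-out sizes leave no observations for training")
    test_start = len(series) - test_size  # test block sits at the very end
    val_start = test_start - val_size     # validation block precedes it
    return series[:val_start], series[val_start:test_start], series[test_start:]


train, val, test = temporal_split(list(range(10)), val_size=2, test_size=3)
```

With the default `val_size=0` and `test_size=0`, the whole series is used for training and both held-out segments are empty.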

DeepAR.predict

Trainer execution of predict_step.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| `dataset` | `TimeSeriesDataset` | NeuralForecast’s TimeSeriesDataset, see documentation. | required |
| `test_size` | `int` | Test size for temporal cross-validation. | `None` |
| `step_size` | `int` | Step size between each window. | `1` |
| `random_seed` | `int` | Random seed for pytorch initializer and numpy generators, overwrites model.init’s. | `None` |
| `quantiles` | `list` | Target quantiles to predict. | `None` |
| `h` | `int` | Prediction horizon, if None, uses the model’s fitted horizon. | `None` |
| `explainer_config` | `dict` | configuration for explanations. | `None` |
| `**data_module_kwargs` | `dict` | PL’s TimeSeriesDataModule args, see documentation. | |

Returns:

| Type | Description |
|---|---|
| `None` | |
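The `quantiles` argument and the `level` argument of the default `DistributionLoss` describe the same kind of information in two forms. The sketch below shows the standard correspondence between central prediction-interval levels (in percent) and quantile pairs. `level_to_quantiles` is a hypothetical helper written for this example; the library's own conversion may differ in details, for instance by also including the median.

```python
def level_to_quantiles(levels):
    """Map central interval levels (percent) to their sorted quantile bounds."""
    quantiles = set()
    for level in levels:
        if not 0 < level < 100:
            raise ValueError("each level must lie strictly between 0 and 100")
        alpha = (100 - level) / 200  # probability mass left in each tail
        quantiles.add(round(alpha, 6))
        quantiles.add(round(1 - alpha, 6))
    return sorted(quantiles)


qs = level_to_quantiles([80, 90])
```

For `level=[80, 90]` this yields the bounds of the 80% interval (0.1 and 0.9) and of the 90% interval (0.05 and 0.95).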