Long-horizon forecasting is challenging because of the volatility of the predictions and the computational complexity. To solve this problem, we created the Neural Hierarchical Interpolation for Time Series (NHITS).


NHITS builds upon NBEATS and specializes its partial outputs in the different frequencies of the time series through hierarchical interpolation and multi-rate input processing. On the long-horizon forecasting task, NHITS improved accuracy by 25% over the Informer, winner of AAAI’s best paper award, while being 50x faster.
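As a rough illustration of hierarchical interpolation, the sketch below expands a few coarse forecast coefficients onto the full horizon with endpoint-aligned linear interpolation. The function name `interpolate_linear` is hypothetical, the coefficient values are made up, and the interpolation performed by the library (which offers 'linear', 'nearest' and 'cubic' bases) may differ in detail.

```python
from typing import List


def interpolate_linear(theta: List[float], h: int) -> List[float]:
    """Expand len(theta) coarse forecast coefficients onto h horizon steps.

    Hypothetical helper: endpoint-aligned linear interpolation, used only to
    illustrate how a low-resolution forecast is upsampled to the horizon.
    """
    if h < 1 or not theta:
        raise ValueError("need h >= 1 and at least one coefficient")
    n = len(theta)
    if n == 1 or h == 1:
        # A single coefficient (or a single step) needs no interpolation.
        return [theta[0]] * h
    out = []
    for i in range(h):
        # Position of horizon step i on the coefficient grid [0, n - 1].
        pos = i * (n - 1) / (h - 1)
        lo = min(int(pos), n - 2)
        frac = pos - lo
        out.append(theta[lo] * (1.0 - frac) + theta[lo + 1] * frac)
    return out


# Assumed for illustration: a stack with n_freq_downsample=4 and h=12
# predicts 12 // 4 = 3 coefficients, which are then stretched to 12 steps.
coarse = [10.0, 14.0, 12.0]
full = interpolate_linear(coarse, 12)
print(full)
```

Predicting only a few coefficients per stack is what keeps the output layer small for long horizons; the interpolation step restores the full resolution.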

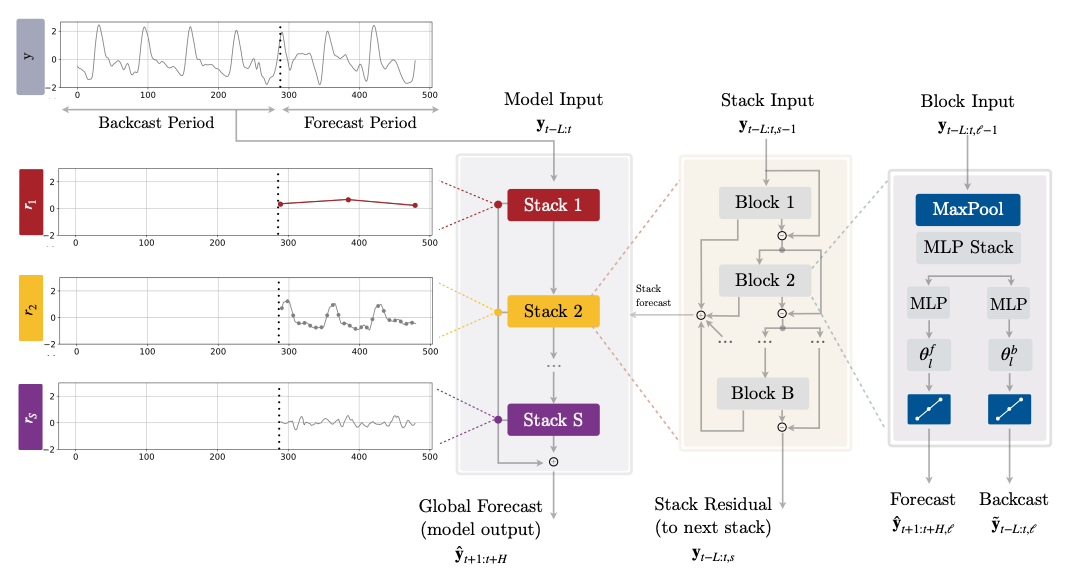

The model is composed of several MLPs with ReLU non-linearities. Blocks are connected via the doubly residual stacking principle, using the backcast and forecast outputs of the l-th block. Multi-rate input pooling, hierarchical interpolation, and backcast residual connections together induce the specialization of the additive predictions in different signal bands, reducing the memory footprint and computational time and thus improving the architecture’s parsimony and accuracy.
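The doubly residual wiring can be sketched in plain Python. This is a toy under explicit assumptions: `doubly_residual_forward` and `make_toy_block` are hypothetical names, and each block's learned MLP is replaced by a hand-written rule (max-pool the input, hold the pooled values as the backcast, hold the last pooled level as the forecast). Only the residual connections mirror the description above.

```python
from typing import Callable, List, Optional, Tuple

# A block maps the current residual input to (backcast, forecast).
Block = Callable[[List[float]], Tuple[List[float], List[float]]]


def doubly_residual_forward(
    x: List[float], blocks: List[Block]
) -> Tuple[List[float], List[float]]:
    """Run blocks with backward (backcast) and forward (forecast) residual links."""
    residual = list(x)
    forecast: Optional[List[float]] = None
    for block in blocks:
        backcast, partial = block(residual)
        # Backward link: the next block only sees what is still unexplained.
        residual = [r - b for r, b in zip(residual, backcast)]
        # Forward link: the final prediction is the sum of partial forecasts.
        if forecast is None:
            forecast = partial
        else:
            forecast = [f + p for f, p in zip(forecast, partial)]
    if forecast is None:
        raise ValueError("at least one block is required")
    return forecast, residual


def make_toy_block(kernel: int, h: int) -> Block:
    """Hypothetical stand-in for an NHITS block; a real block uses a learned MLP."""
    def block(x: List[float]) -> Tuple[List[float], List[float]]:
        # Input pooling: max over non-overlapping windows of size `kernel`.
        pooled = [max(x[i:i + kernel]) for i in range(0, len(x), kernel)]
        # Toy backcast: hold each pooled value over its window.
        backcast = [pooled[i // kernel] for i in range(len(x))]
        # Toy forecast: hold the last pooled level over the horizon.
        forecast = [pooled[-1]] * h
        return backcast, forecast
    return block


forecast, residual = doubly_residual_forward(
    [1.0, 3.0, 2.0, 4.0],
    [make_toy_block(kernel=2, h=2), make_toy_block(kernel=1, h=2)],
)
print(forecast, residual)
```

The coarse first block explains the level of the series; the second, finer block sees only what the first left behind and contributes its own additive correction.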

References

- Boris N. Oreshkin, Dmitri Carpov, Nicolas Chapados, Yoshua Bengio (2019). “N-BEATS: Neural basis expansion analysis for interpretable time series forecasting”.

- Cristian Challu, Kin G. Olivares, Boris N. Oreshkin, Federico Garza, Max Mergenthaler-Canseco, Artur Dubrawski (2023). “NHITS: Neural Hierarchical Interpolation for Time Series Forecasting”. Accepted at the Thirty-Seventh AAAI Conference on Artificial Intelligence.

- Haoyi Zhou, Shanghang Zhang, Jieqi Peng, Shuai Zhang, Jianxin Li, Hui Xiong, Wancai Zhang (2020). “Informer: Beyond Efficient Transformer for Long Sequence Time-Series Forecasting”. Accepted at the Thirty-Fifth AAAI Conference on Artificial Intelligence (AAAI 2021).

NHITS

Bases: BaseModel

The Neural Hierarchical Interpolation for Time Series (NHITS) is an MLP-based deep neural architecture with backward and forward residual links. NHITS tackles the volatility and memory complexity challenges by locally specializing its sequential predictions into the signal’s frequencies with hierarchical interpolation and pooling.
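Multi-rate input pooling can be illustrated with a small, hypothetical `pool1d` helper that mirrors the two `pooling_mode` options ('MaxPool1d' and 'AvgPool1d') with the stride equal to the kernel size. Keeping a partial trailing window is an assumption of this sketch, not something this page specifies, and the series values are made up.

```python
from typing import List


def pool1d(x: List[float], kernel: int, mode: str = "MaxPool1d") -> List[float]:
    """Non-overlapping 1-D pooling (stride equal to the kernel size).

    Hypothetical helper; a partial trailing window is kept (an assumption).
    """
    if kernel < 1:
        raise ValueError("kernel must be >= 1")
    if mode not in ("MaxPool1d", "AvgPool1d"):
        raise ValueError(f"unknown pooling mode: {mode}")
    out = []
    for start in range(0, len(x), kernel):
        window = x[start:start + kernel]
        if mode == "MaxPool1d":
            out.append(max(window))
        else:
            out.append(sum(window) / len(window))
    return out


series = [1.0, 5.0, 2.0, 8.0, 3.0, 3.0, 7.0, 1.0]
# Larger kernels give coarser, lower-frequency views of the same input window.
for kernel in (4, 2, 1):
    print(kernel, pool1d(series, kernel), pool1d(series, kernel, "AvgPool1d"))
```

A stack with a large kernel therefore works on a short, smoothed input, which is one reason the coarse stacks are cheap.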

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| h | int | Forecast horizon. | required |
| input_size | int | Autoregressive input size, e.g. y=[1,2,3,4], input_size=2 -> y_[t-2:t]=[1,2]. | required |
| futr_exog_list | str list | Future exogenous columns. | None |
| hist_exog_list | str list | Historic exogenous columns. | None |
| stat_exog_list | str list | Static exogenous columns. | None |
| exclude_insample_y | bool | If True, the model skips the autoregressive features y[t-input_size:t]. | False |
| stack_types | List[str] | Stacks list in the form N * ['identity'], to be deprecated in favor of n_stacks. Note that len(stack_types) = len(n_freq_downsample) = len(n_pool_kernel_size). | ['identity', 'identity', 'identity'] |
| n_blocks | List[int] | Number of blocks for each stack. Note that len(n_blocks) = len(stack_types). | [1, 1, 1] |
| mlp_units | List[List[int]] | Structure of the hidden layers for each stack type. Each internal list contains the number of units of each hidden layer. Note that len(mlp_units) = len(stack_types). | 3 * [[512, 512]] |
| n_pool_kernel_size | List[int] | Sizes of the windows to take a max/avg over. Note that len(stack_types) = len(n_freq_downsample) = len(n_pool_kernel_size). | [2, 2, 1] |
| n_freq_downsample | List[int] | Each stack’s coefficients (inverse expressivity ratios). Note that len(stack_types) = len(n_freq_downsample) = len(n_pool_kernel_size). | [4, 2, 1] |
| pooling_mode | str | Input pooling module, one of ['MaxPool1d', 'AvgPool1d']. | 'MaxPool1d' |
| interpolation_mode | str | Interpolation basis, one of ['linear', 'nearest', 'cubic']. | 'linear' |
| dropout_prob_theta | float | Float between (0, 1). Dropout for the NHITS basis. | 0.0 |
| activation | str | Activation, one of ['ReLU', 'Softplus', 'Tanh', 'SELU', 'LeakyReLU', 'PReLU', 'Sigmoid']. | 'ReLU' |
| learning_rate | float | Learning rate between (0, 1). | 0.001 |
| num_lr_decays | int | Number of learning rate decays, evenly distributed across max_steps. | 3 |
| early_stop_patience_steps | int | Number of validation iterations before early stopping; -1 disables early stopping. | -1 |
| val_monitor | str | Metric to monitor for early stopping. Valid options: "ptl/val_loss", "valid_loss", "train_loss". | 'ptl/val_loss' |
| val_check_steps | int | Number of training steps between every validation loss check. | 100 |
| batch_size | int | Number of different series in each batch. | 32 |
| valid_batch_size | int | Number of different series in each validation and test batch; if None, uses batch_size. | None |
| windows_batch_size | int | Number of windows to sample in each training batch. | 1024 |
| inference_windows_batch_size | int | Number of windows to sample in each inference batch; -1 uses all. | -1 |
| start_padding_enabled | bool | If True, the model pads the beginning of each time series with input_size zeros. | False |
| training_data_availability_threshold | Union[float, List[float]] | Minimum fraction of valid data points required for training windows. A single float applies to both the insample and outsample parts; a list of two floats specifies [insample_fraction, outsample_fraction]. The default of 0.0 allows windows with only one valid data point. | 0.0 |
| step_size | int | Step size between each window of temporal data. | 1 |
| scaler_type | str | Type of scaler for temporal input normalization; see temporal scalers. | 'identity' |
| random_seed | int | Random seed for the PyTorch initializer and NumPy generators. | 1 |
| drop_last_loader | bool | If True, TimeSeriesDataLoader drops the last non-full batch. | False |
| alias | str | Optional custom name of the model. | None |
| optimizer | Subclass of 'torch.optim.Optimizer' | Optional user-specified optimizer used instead of the default choice (Adam). | None |
| optimizer_kwargs | dict | Optional dictionary of parameters used by the user-specified optimizer. | None |
| lr_scheduler | Subclass of 'torch.optim.lr_scheduler.LRScheduler' | Optional user-specified lr_scheduler used instead of the default choice (StepLR). | None |
| lr_scheduler_kwargs | dict | Optional dictionary of parameters used by the user-specified lr_scheduler. | None |
| dataloader_kwargs | dict | Optional dictionary of parameters passed into the PyTorch Lightning dataloader by the TimeSeriesDataLoader. | None |
| **trainer_kwargs | dict | Keyword trainer arguments inherited from PyTorch Lightning’s trainer. | |
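Several of the per-stack parameters must have matching lengths. The hypothetical `check_nhits_config` helper below (not part of NeuralForecast) checks that constraint before a model is built and returns a per-stack coefficient count. The rule `max(h // n_freq_downsample, 1)` is an assumption that follows the "inverse expressivity ratio" description; it is not confirmed by this page.

```python
from typing import List, Sequence


def check_nhits_config(
    h: int,
    stack_types: Sequence[str],
    n_blocks: Sequence[int],
    mlp_units: Sequence[Sequence[int]],
    n_pool_kernel_size: Sequence[int],
    n_freq_downsample: Sequence[int],
) -> List[int]:
    """Hypothetical pre-flight check, not part of NeuralForecast.

    Verifies that the per-stack lists have equal lengths and returns the
    assumed number of forecast coefficients per stack, max(h // r, 1).
    """
    lengths = {
        "stack_types": len(stack_types),
        "n_blocks": len(n_blocks),
        "mlp_units": len(mlp_units),
        "n_pool_kernel_size": len(n_pool_kernel_size),
        "n_freq_downsample": len(n_freq_downsample),
    }
    if len(set(lengths.values())) != 1:
        raise ValueError(f"per-stack lists must have equal length, got {lengths}")
    if any(k < 1 for k in n_pool_kernel_size) or any(r < 1 for r in n_freq_downsample):
        raise ValueError("kernel sizes and downsampling ratios must be >= 1")
    return [max(h // r, 1) for r in n_freq_downsample]


# The documented defaults, combined with an illustrative 12-step horizon.
coefficients = check_nhits_config(
    h=12,
    stack_types=3 * ["identity"],
    n_blocks=[1, 1, 1],
    mlp_units=3 * [[512, 512]],
    n_pool_kernel_size=[2, 2, 1],
    n_freq_downsample=[4, 2, 1],
)
print(coefficients)
```

Under this assumption, the default configuration yields one coarse, one medium and one full-resolution stack.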

NHITS.fit

The fit method optimizes the neural network’s weights using the initialization parameters (learning_rate, windows_batch_size, …) and the loss function defined during initialization. Within fit, a PyTorch Lightning Trainer is used that inherits the initialization’s self.trainer_kwargs; to customize its inputs, see PL’s trainer arguments.

The method is designed to be compatible with SKLearn-like classes and, in particular, with the StatsForecast library.

By default the model does not save training checkpoints, to save disk space; to enable them, set enable_checkpointing=True in `__init__`.
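The learning rate that fit starts from is decayed num_lr_decays times over training, with StepLR as the default scheduler. The hypothetical `step_lr` helper below sketches such a schedule. Two details are assumptions, not confirmed by this page: the decay interval is `max(max_steps // num_lr_decays, 1)` steps, and each decay multiplies the rate by `gamma = 0.5`. The `max_steps=1000` value is illustrative.

```python
def step_lr(
    step: int,
    learning_rate: float,
    max_steps: int,
    num_lr_decays: int,
    gamma: float = 0.5,
) -> float:
    """Learning rate at a given training step under a StepLR-style schedule.

    Assumed: decay every max(max_steps // num_lr_decays, 1) steps by `gamma`.
    In this sketch, num_lr_decays < 1 means no decay at all.
    """
    if num_lr_decays < 1:
        return learning_rate
    decay_every = max(max_steps // num_lr_decays, 1)
    return learning_rate * gamma ** (step // decay_every)


# Documented defaults (learning_rate=0.001, num_lr_decays=3), illustrative max_steps.
for step in (0, 333, 666, 999):
    print(step, step_lr(step, learning_rate=0.001, max_steps=1000, num_lr_decays=3))
```

Spreading the decays evenly means the rate halves roughly every third of training under these assumptions; pass a custom lr_scheduler if a different shape is needed.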

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| dataset | TimeSeriesDataset | NeuralForecast’s TimeSeriesDataset, see documentation. | required |
| val_size | int | Validation size for temporal cross-validation. | 0 |
| random_seed | int | Random seed for the PyTorch initializer and NumPy generators; overrides the value set in the model’s `__init__`. | None |
| test_size | int | Test size for temporal cross-validation. | 0 |

Returns:

| Type | Description |
|---|---|
| None | |
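For intuition about val_size and test_size, the hypothetical `temporal_split` helper below carves one series into contiguous segments. It assumes the usual temporal cross-validation layout (the last test_size points form the test segment and the val_size points just before them form the validation segment); this page does not spell out the exact slicing fit performs.

```python
from typing import List, Sequence, Tuple


def temporal_split(
    series: Sequence[float], val_size: int = 0, test_size: int = 0
) -> Tuple[List[float], List[float], List[float]]:
    """Split one series into contiguous train / validation / test segments.

    Hypothetical helper: test is the tail, validation sits just before it.
    """
    n = len(series)
    if val_size < 0 or test_size < 0:
        raise ValueError("sizes must be non-negative")
    if val_size + test_size >= n:
        raise ValueError("val_size + test_size must leave some training data")
    train_end = n - val_size - test_size
    val_end = n - test_size
    return (
        list(series[:train_end]),
        list(series[train_end:val_end]),
        list(series[val_end:]),
    )


train_part, valid_part, test_part = temporal_split(list(range(10)), val_size=2, test_size=3)
print(train_part, valid_part, test_part)
```

Keeping the segments contiguous and ordered in time avoids leaking future observations into training.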

NHITS.predict

Trainer execution of predict_step.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| dataset | TimeSeriesDataset | NeuralForecast’s TimeSeriesDataset, see documentation. | required |
| test_size | int | Test size for temporal cross-validation. | None |
| step_size | int | Step size between each window. | 1 |
| random_seed | int | Random seed for the PyTorch initializer and NumPy generators; overrides the value set in the model’s `__init__`. | None |
| quantiles | list | Target quantiles to predict. | None |
| h | int | Prediction horizon; if None, uses the model’s fitted horizon. | None |
| explainer_config | dict | Configuration for explanations. | None |
| **data_module_kwargs | dict | PL’s TimeSeriesDataModule arguments, see documentation. | |

Returns:

| Type | Description |
|---|---|
| None | |
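The step_size argument of predict controls the spacing between consecutive windows. The hypothetical `sliding_windows` helper below sketches the idea by cutting a series into (input, target) pairs whose start positions are step_size apart. Starting the first window at index 0 is an assumption; how the library aligns windows with test_size is not described on this page.

```python
from typing import List, Sequence, Tuple


def sliding_windows(
    series: Sequence[float], input_size: int, h: int, step_size: int = 1
) -> List[Tuple[List[float], List[float]]]:
    """Cut a series into (input, target) windows whose starts are step_size apart.

    Hypothetical helper: illustrates how step_size thins out the windows.
    """
    if min(input_size, h, step_size) < 1:
        raise ValueError("input_size, h and step_size must be >= 1")
    window = input_size + h
    windows = []
    for start in range(0, len(series) - window + 1, step_size):
        x = list(series[start:start + input_size])
        y = list(series[start + input_size:start + window])
        windows.append((x, y))
    return windows


dense = sliding_windows(range(10), input_size=2, h=2, step_size=1)
sparse = sliding_windows(range(10), input_size=2, h=2, step_size=3)
print(len(dense), sparse)
```

A larger step_size produces fewer, non-overlapping forecasts, which is cheaper when only periodic forecast origins are needed.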