Step-by-step guide on using the OptimizedTheta Model with Statsforecast.

During this walkthrough, we will become familiar with the main StatsForecast class and some relevant methods such as StatsForecast.plot, StatsForecast.forecast and StatsForecast.cross_validation, among others.

The text in this article is largely taken from:

1. Kostas I. Nikolopoulos, Dimitrios D. Thomakos. Forecasting with the Theta Method-Theory and Applications. 2019 John Wiley & Sons Ltd.

2. Jose A. Fiorucci, Tiago R. Pellegrini, Francisco Louzada, Fotios Petropoulos, Anne B. Koehler (2016). “Models for optimising the theta method and their relationship to state space models”. International Journal of Forecasting.

Table of Contents

- Introduction

- Optimized Theta Model (OTM)

- Loading libraries and data

- Explore data with the plot method

- Split the data into training and testing

- Implementation of OptimizedTheta with StatsForecast

- Cross-validation

- Model evaluation

- References

Introduction

The optimized Theta model is a time series forecasting method built on the Theta method. The Theta method works on a series that is non-seasonal or has been seasonally adjusted, and decomposes it into "theta lines": one line captures the long-term linear trend and another amplifies the short-term curvature of the data. The lines are extrapolated separately, their forecasts are combined, and any seasonality that was removed is added back at the end.

The Theta method was introduced by Vassilis Assimakopoulos and Konstantinos Nikolopoulos in 2000 and attracted attention after performing best in the M3 forecasting competition. The optimized Theta model was proposed by Fiorucci, Pellegrini, Louzada, Petropoulos and Koehler in 2016.

The standard Theta method fixes the theta parameter of the short-term line at 2. The optimized Theta model improves on it by estimating that parameter from the data, together with the smoothing parameter and the initial level, so that the fitted model follows the training series as closely as possible.

The model has been used to forecast a variety of time series, including sales, production, prices, and weather.

Below are some of the benefits of the optimized Theta model:

- It is often competitive in accuracy with more complex forecasting methods.

- It’s easy to use.

- Can be used to forecast a variety of time series.

- It is flexible and can be adapted to different scenarios.

Typical applications include:

- Sales: The optimized Theta model can be used to forecast sales of products or services. This can help companies make decisions about production, inventory, and marketing.

- Production: The optimized Theta model can be used to forecast the production of goods or services. This can help companies ensure they have the capacity to meet demand and avoid overproduction.

- Prices: The optimized Theta model can be used to forecast the prices of goods or services. This can help companies make decisions about pricing and marketing strategy.

- Weather: The optimized Theta model can be used to forecast the weather. This can help companies make decisions about agricultural production, travel planning and risk management.

- Other: The optimized Theta model can also be used to forecast other types of time series, including traffic, energy demand, and population.

Optimized Theta Model (OTM)

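Assume that either the time series $Y_1, \ldots, Y_n$ is non-seasonal or it has been seasonally adjusted using the multiplicative classical decomposition approach.

Let $Z_t(\theta) = \theta Y_t + (1-\theta)(A_n + B_n t)$ denote the theta line with parameter $\theta$, where $A_n$ and $B_n$ are the least-squares intercept and slope of the regression of $Y_t$ on $t$. Let $X_t$ be the linear combination of two theta lines,

$$X_t = \omega \, Z_t(\theta_1) + (1-\omega) \, Z_t(\theta_2) \tag{1}$$

where $\omega \in [0,1]$ is the weight parameter. Assuming that $\theta_1 < 1$ and $\theta_2 \geq 1$, the weight $\omega$ can be derived as

$$\omega := \omega(\theta_1, \theta_2) = \frac{\theta_2 - 1}{\theta_2 - \theta_1} \tag{2}$$

It is straightforward to see from Eqs. (1), (2) that $X_t = Y_t$ for all $t = 1, \ldots, n$, i.e., the weights are calculated properly in such a way that Eq. (1) reproduces the original series.

Theorem 1: Let $\theta_1 < 1$ and $\theta_2 \geq 1$. We will prove that

- the linear system given by $X_t = Y_t$ for all $t = 1, \ldots, n$, where $X_t$ is given by Eq. (1), has the single solution $\omega = (\theta_2 - 1)/(\theta_2 - \theta_1)$

- the error of choosing a non-optimal weight $\omega_\delta = \omega + \delta$ is proportional to the error for a simple linear regression model.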
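The reconstruction property behind Eqs. (1) and (2) can be checked numerically. The sketch below assumes the theta-line definition used by Fiorucci et al. (2016), $Z_t(\theta) = \theta Y_t + (1-\theta)(A_n + B_n t)$, with $A_n$ and $B_n$ taken from an ordinary least-squares line; the helper names `theta_line` and `weight` are illustrative and not part of any library.

```python
import numpy as np

def theta_line(y, theta):
    """Theta line Z_t(theta) = theta * y_t + (1 - theta) * (A_n + B_n * t)."""
    t = np.arange(1, len(y) + 1)
    b_n, a_n = np.polyfit(t, y, deg=1)  # least-squares slope and intercept
    return theta * y + (1 - theta) * (a_n + b_n * t)

def weight(theta1, theta2):
    """Weight from Eq. (2); assumes theta1 < 1 <= theta2."""
    return (theta2 - 1) / (theta2 - theta1)

# First five observations of the series used later in this guide.
y = np.array([589.0, 561.0, 640.0, 656.0, 727.0])

theta1, theta2 = 0.0, 2.0  # the standard Theta choice
omega = weight(theta1, theta2)

# Eq. (1): weighted combination of the two theta lines.
x = omega * theta_line(y, theta1) + (1 - omega) * theta_line(y, theta2)
max_abs_diff = float(np.max(np.abs(x - y)))
print(omega, max_abs_diff)
```

Any other admissible pair, for example `theta1=0.5, theta2=3.0`, should also reproduce `y` up to floating-point error.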

More on Optimised Theta models

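Let $A_n$ and $B_n$ be fixed coefficients for all $t = 1, \ldots, n$ so that Eqs. (3), (4), the forecast equations of Fiorucci et al. (2016) for $\theta_1 = 0$ and $\theta_2 = \theta$, configure the state space model given by

$$Y_t = \mu_t + \varepsilon_t \tag{5}$$

$$\mu_t = \ell_{t-1} + \left(1 - \frac{1}{\theta}\right)\left\{(1-\alpha)^{t-1} A_n + \left[\frac{1-(1-\alpha)^{t}}{\alpha}\right] B_n\right\} \tag{6}$$

$$\ell_t = \alpha Y_t + (1-\alpha)\,\ell_{t-1} \tag{7}$$

with parameters $\ell_0 \in \mathbb{R}$, $\alpha \in (0,1)$ and $\theta \in [1, \infty)$. The parameter $\theta$ is to be estimated along with $\alpha$ and $\ell_0$. We call this the optimised Theta model (OTM).

The $h$-step-ahead forecast at origin $n$ is given by

$$\hat{Y}_{n+h|n} = E[Y_{n+h} \mid Y_1, \ldots, Y_n] = \ell_n + \left(1 - \frac{1}{\theta}\right)\left\{A_n (1-\alpha)^{n} + B_n\left[(h-1) + \frac{1-(1-\alpha)^{n+1}}{\alpha}\right]\right\}$$

which is equivalent to Eq. (3). The conditional variance $Var[Y_{n+h} \mid Y_1, \ldots, Y_n] = \sigma^2[1 + (h-1)\alpha^2]$ can be computed easily from the state space model. Thus, the $(1-a)\%$ prediction interval for $Y_{n+h}$ is given by

$$\hat{Y}_{n+h|n} \pm q_{1-a/2}\sqrt{\sigma^2[1 + (h-1)\alpha^2]}$$

where $q_{1-a/2}$ is the corresponding quantile of the standard normal distribution.

For $\theta = 2$ OTM reproduces the forecasts of the STheta method; hereafter, we will refer to this particular case as the standard Theta model (STM).

Theorem 2: The SES-d$(\ell_0^{**}, \alpha, b)$ model, where $\ell_0^{**} \in \mathbb{R}$, $\alpha \in (0,1)$ and $b \in \mathbb{R}$, is equivalent to OTM$(\ell_0, \alpha, \theta)$, where $\ell_0 \in \mathbb{R}$ and $\theta \geq 1$, if

$$\ell_0^{**} = \ell_0 + \left(1 - \frac{1}{\theta}\right) A_n \quad \text{and} \quad b = \left(1 - \frac{1}{\theta}\right) B_n$$

In Theorem 2, we show that OTM is mathematically equivalent to the SES-d model. As a corollary of Theorem 2, STM is mathematically equivalent to SES-d with $b = \frac{1}{2} B_n$. Therefore, for $\theta = 2$ the corollary also re-confirms the H&B result on the relationship between STheta and the SES-d model.

Loading libraries and data

Tip: Statsforecast will be needed. To install, see instructions.

Next, we import plotting libraries and configure the plotting style.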
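A minimal sketch of this setup step follows. The specific style name and figure settings are assumptions chosen for illustration, since the original configuration is not shown; any matplotlib style can be substituted.

```python
# Install once from a shell if needed:  pip install statsforecast
import matplotlib.pyplot as plt
import pandas as pd

# Illustrative plotting defaults (assumed values, adjust to taste).
plt.style.use("grayscale")
plt.rcParams.update({
    "figure.figsize": (18, 7),   # wide figures suit long monthly series
    "lines.linewidth": 1.5,
    "axes.grid": False,
})
```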

Read Data

| | month | production |
|---|---|---|
| 0 | 1962-01-01 | 589 |
| 1 | 1962-02-01 | 561 |
| 2 | 1962-03-01 | 640 |
| 3 | 1962-04-01 | 656 |
| 4 | 1962-05-01 | 727 |

The input to StatsForecast is always a data frame in long format with three columns: unique_id, ds and y:

- The unique_id (string, int or category) represents an identifier for the series.

- The ds (datestamp) column should be of a format expected by Pandas, ideally YYYY-MM-DD for a date or YYYY-MM-DD HH:MM:SS for a timestamp.

- The y (numeric) represents the measurement we wish to forecast.
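One way to bring the raw table into this layout is sketched below. Only the five rows displayed earlier are used as a stand-in for the full file, and the identifier value "1" is taken from the table that follows.

```python
import pandas as pd

# Stand-in for the loaded data: the first five rows shown above.
raw = pd.DataFrame({
    "month": ["1962-01-01", "1962-02-01", "1962-03-01", "1962-04-01", "1962-05-01"],
    "production": [589, 561, 640, 656, 727],
})

# Rename to the column names StatsForecast expects and tag the single series.
df = raw.rename(columns={"month": "ds", "production": "y"})
df["unique_id"] = "1"
print(df.head())
```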

| | ds | y | unique_id |
|---|---|---|---|
| 0 | 1962-01-01 | 589 | 1 |
| 1 | 1962-02-01 | 561 | 1 |
| 2 | 1962-03-01 | 640 | 1 |
| 3 | 1962-04-01 | 656 | 1 |
| 4 | 1962-05-01 | 727 | 1 |

Our time variable (ds) is in an object format, so we need to convert it to a date format.
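A short sketch of the conversion with `pandas.to_datetime`, again using a few of the displayed rows as stand-in data:

```python
import pandas as pd

df = pd.DataFrame({
    "ds": ["1962-01-01", "1962-02-01", "1962-03-01"],
    "y": [589, 561, 640],
    "unique_id": "1",
})

# Parse the date strings into proper datetime values.
df["ds"] = pd.to_datetime(df["ds"])
print(df.dtypes)
```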

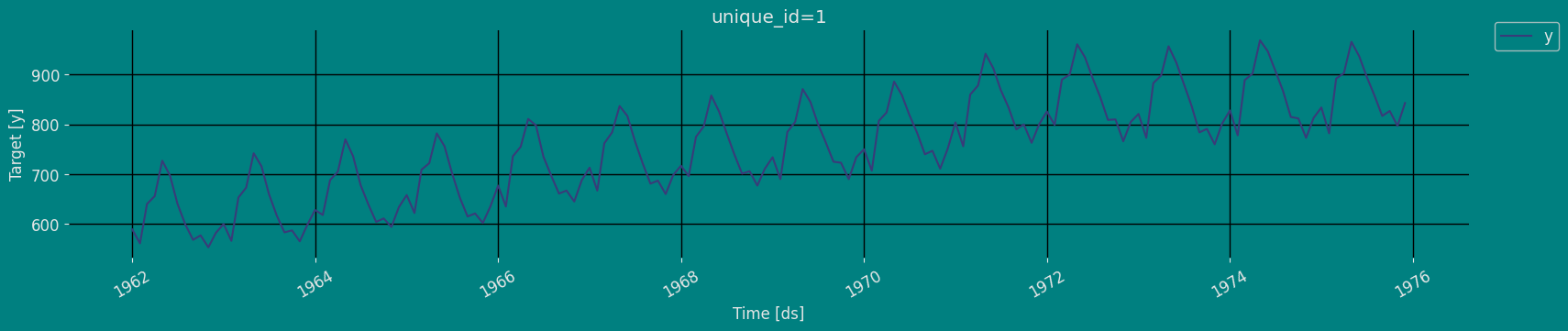

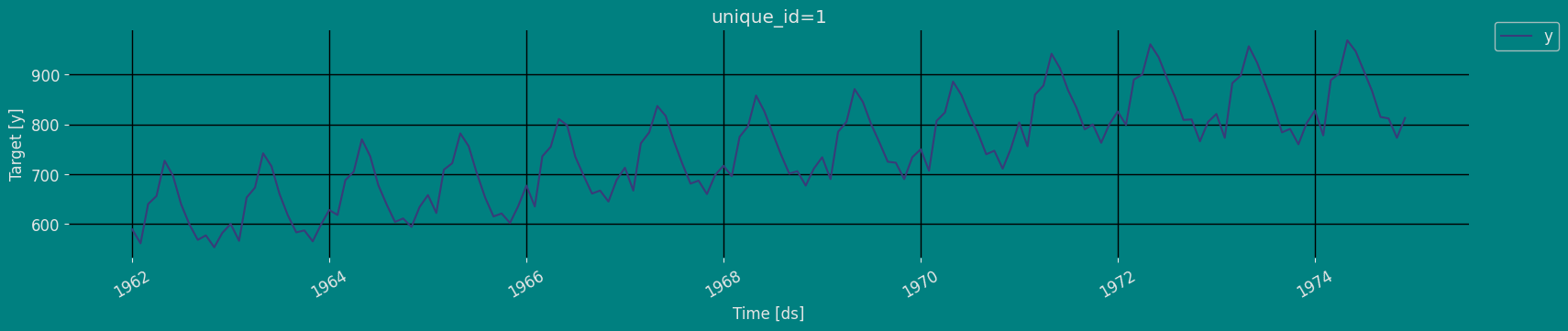

Explore Data with the plot method

Plot some series using the plot method from the StatsForecast class. This method plots a random series from the dataset and is useful for basic EDA.

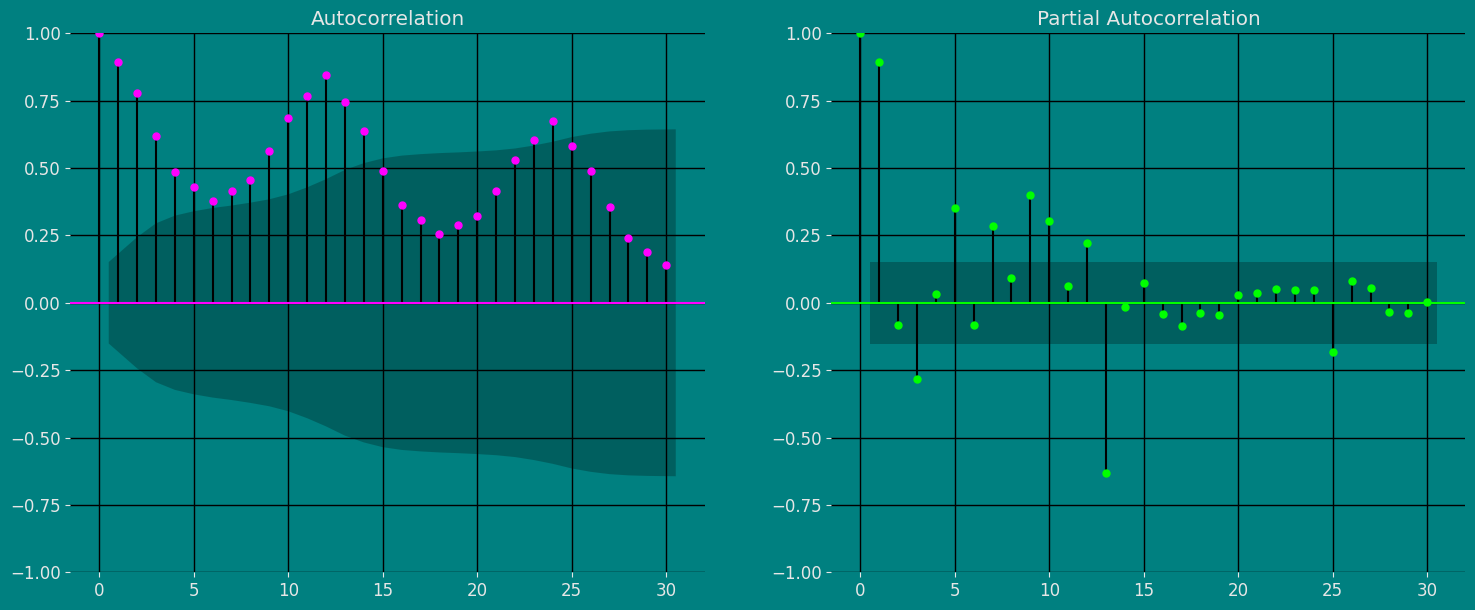

Autocorrelation plots

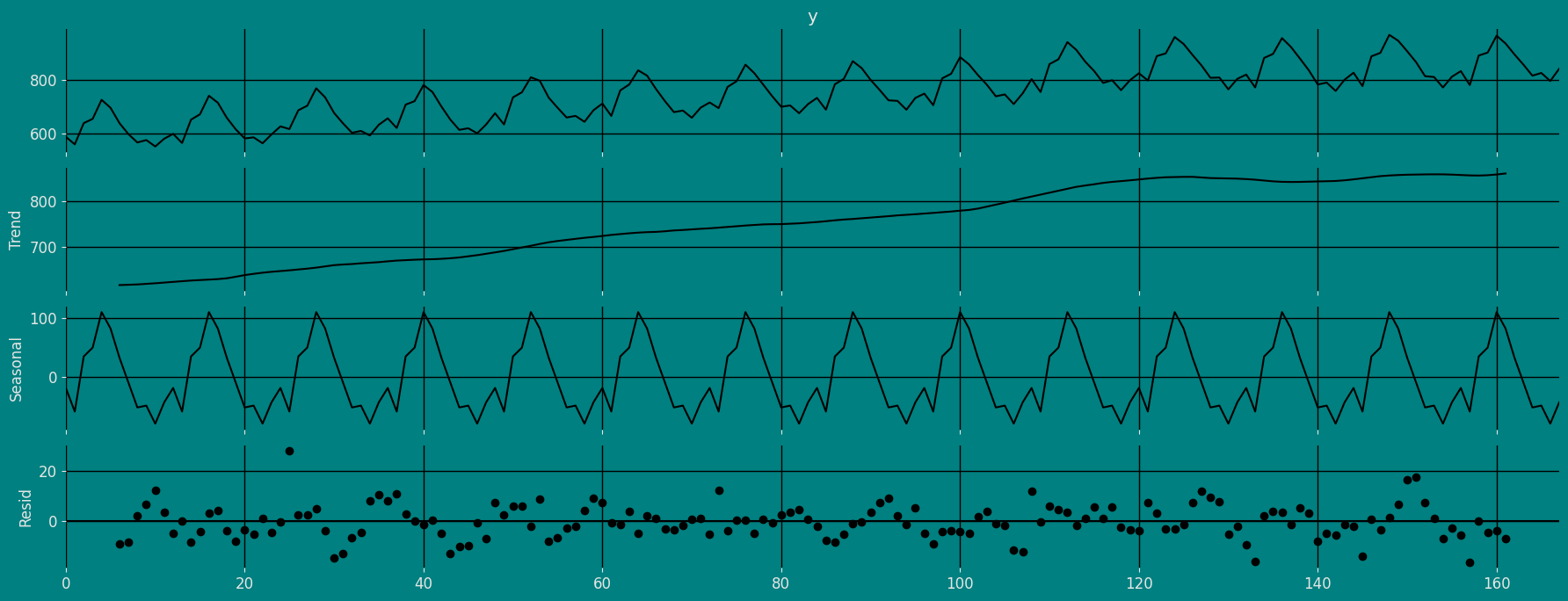

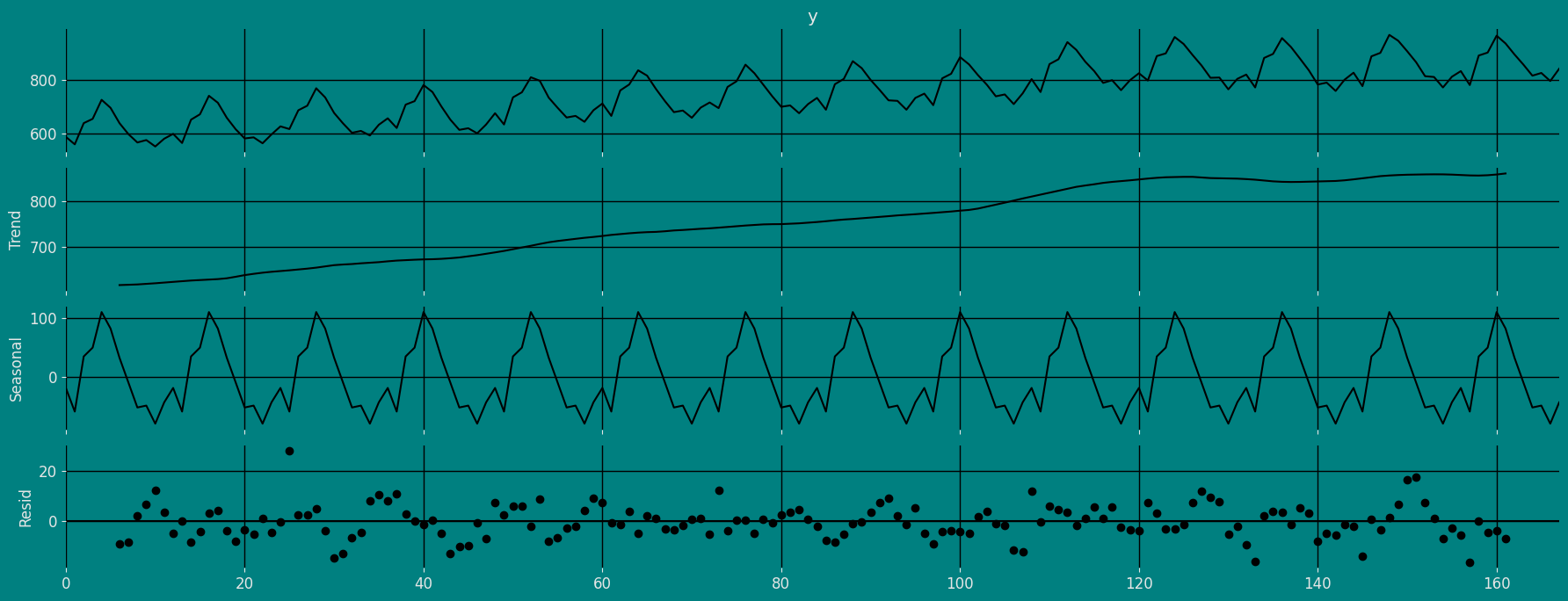
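The quantity displayed in an autocorrelation plot can be sketched directly with NumPy. The function `sample_acf` below is an illustrative helper, not a library function, and the series is a synthetic yearly cycle rather than the tutorial data.

```python
import numpy as np

def sample_acf(y, max_lag):
    """Sample autocorrelation for lags 0..max_lag (biased estimator)."""
    y = np.asarray(y, dtype=float)
    d = y - y.mean()
    denom = np.sum(d ** 2)
    return np.array([np.sum(d[k:] * d[:len(d) - k]) / denom
                     for k in range(max_lag + 1)])

# Ten full years of a pure 12-month cycle.
t = np.arange(120)
y = 700 + 40 * np.sin(2 * np.pi * t / 12)

acf = sample_acf(y, max_lag=24)
print(np.round(acf[[0, 6, 12]], 3))
```

A strong positive value at lag 12 and a strong negative value at lag 6 is the typical signature of yearly seasonality in monthly data.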

Decomposition of the time series

How to decompose a time series and why?

In time series analysis, forecasting new values requires knowing past data. More formally, it is very important to know the patterns that values follow over time. There can be many reasons that cause our forecast values to fall in the wrong direction. Basically, a time series consists of four components, and the variation of those components causes the change in the pattern of the time series. These components are:

- Level: This is the primary value that averages over time.

- Trend: The trend is the value that causes increasing or decreasing patterns in a time series.

- Seasonality: This is a cyclical event that repeats at a fixed, short interval and causes short-term increasing or decreasing patterns in a time series.

- Residual/Noise: These are the random variations in the time series.

Additive time series

If the components of the time series are added together to make the time series, then the time series is called an additive time series. Visually, a time series is additive if the size of its increasing or decreasing pattern stays similar throughout the series. The mathematical function of any additive time series can be represented by:

$$y(t) = \text{Level} + \text{Trend} + \text{Seasonality} + \text{Noise}$$

Multiplicative time series

If the components of the time series are multiplied together, then the time series is called a multiplicative time series. Visually, if the time series shows exponential growth or decline over time, it can be considered a multiplicative time series. The mathematical function of the multiplicative time series can be represented as:

$$y(t) = \text{Level} \times \text{Trend} \times \text{Seasonality} \times \text{Noise}$$

Additive

Multiplicative
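Both variants can be illustrated with a small classical decomposition written in NumPy. This is a simplified sketch, not the routine used to produce the original figures: `classical_decompose` is a hypothetical helper, it assumes an even seasonal period, and it returns components only for the interior points where the centered moving average is defined.

```python
import numpy as np

def classical_decompose(y, period, model="additive"):
    """Classical decomposition; returns (trend, seasonal, resid) for y[half:-half]."""
    y = np.asarray(y, dtype=float)
    half = period // 2
    # Centered 2 x period moving average: the two end weights are halved.
    weights = np.r_[0.5, np.ones(period - 1), 0.5] / period
    trend = np.convolve(y, weights, mode="valid")
    core = y[half:-half]
    additive = model == "additive"
    detrended = core - trend if additive else core / trend
    # Average the detrended values by position within the season.
    pos = np.arange(half, len(y) - half) % period
    idx = np.array([detrended[pos == k].mean() for k in range(period)])
    idx = idx - idx.mean() if additive else idx / idx.mean()
    seasonal = idx[pos]
    resid = detrended - seasonal if additive else detrended / seasonal
    return trend, seasonal, resid

# Synthetic additive series: linear trend plus a yearly cycle, no noise.
t = np.arange(96)
y = 600 + 2.0 * t + 40 * np.sin(2 * np.pi * t / 12)

trend, seasonal, resid = classical_decompose(y, period=12, model="additive")
print(trend[:3], np.abs(resid).max())
```

Passing `model="multiplicative"` divides instead of subtracting, which suits series whose seasonal swings grow with the level.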

Split the data into training and testing

Let’s divide our data into two sets:

1. Data to train our Optimized Theta model.

2. Data to test our model.

For the test data we will use the last 12 months to test and evaluate the performance of our model.
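A sketch of the split is given below. The date range matches the tables in this guide (January 1962 to December 1975), while the `y` values are placeholders rather than the real production figures.

```python
import numpy as np
import pandas as pd

ds = pd.date_range("1962-01-01", "1975-12-01", freq="MS")
df = pd.DataFrame({
    "unique_id": "1",
    "ds": ds,
    "y": np.arange(len(ds), dtype=float),  # placeholder values
})

test_size = 12  # hold out the last 12 months
train = df.iloc[:-test_size]
test = df.iloc[-test_size:]
print(train.shape, test.shape)
```

Because the split is positional, the rows must already be sorted by date; shuffling would leak future information into the training set.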

Implementation of OptimizedTheta with StatsForecast

Load libraries

Instantiating Model

Import and instantiate the models. Setting the season_length argument is sometimes tricky. This article on Seasonal periods by the master, Rob Hyndman, can be useful.

We fit the models by instantiating a new StatsForecast object with the following parameters:

- models: a list of models, imported from statsforecast.models and instantiated.

- freq: a string indicating the frequency of the data. (See pandas’ available frequencies.)

- n_jobs: int, number of jobs used in the parallel processing, use -1 for all cores.

- fallback_model: a model to be used if a model fails.

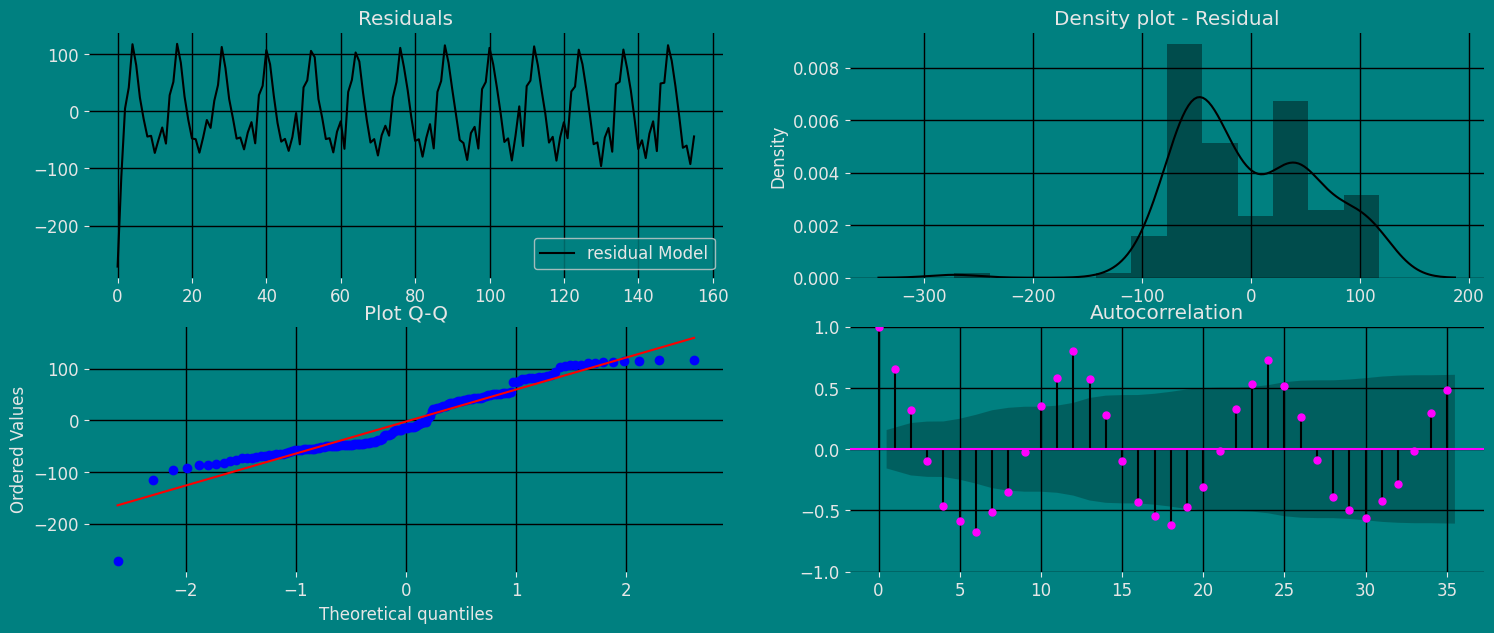

Fit the Model

Once training has finished, we can inspect the results of our Optimized Theta Model (OTM). The result is stored as a dictionary, so we use the .get() function to extract an element, here the residuals, and then save it in a pd.DataFrame().

| | residual Model |
|---|---|
| 0 | -271.899414 |
| 1 | -114.671692 |
| 2 | 4.768066 |
| … | … |
| 153 | -60.233887 |
| 154 | -92.472839 |
| 155 | -44.143982 |

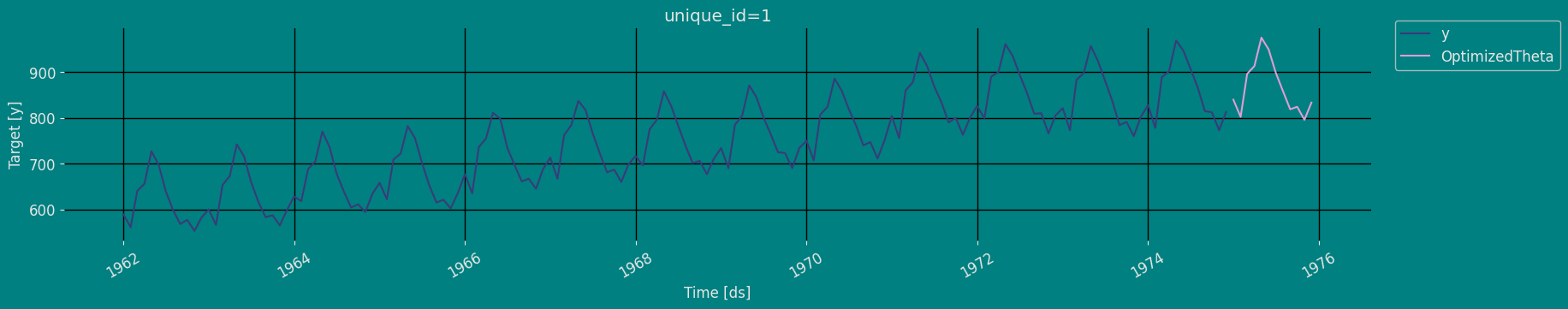

Forecast Method

If you want to gain speed in production settings where you have multiple series or models, we recommend using the StatsForecast.forecast method instead of .fit and .predict.

The main difference is that .forecast does not store the fitted values and is highly scalable in distributed environments.

The forecast method takes two arguments: h (the forecast horizon) and level.

- h (int): represents the forecast h steps into the future. In this case, 12 months ahead.

- level (list of floats): this optional parameter is used for probabilistic forecasting. Set the level (or confidence percentile) of your prediction interval. For example, level=[90] means that the model expects the real value to be inside that interval 90% of the time.

The forecast output is a new data frame that includes a column with the name of the model and the predicted values, as well as columns for the uncertainty intervals when level is set. The tables below show, in order, the point forecasts for the 12-month horizon, the fitted values for the training period, and the forecasts with a 95% prediction interval.

| | unique_id | ds | OptimizedTheta |
|---|---|---|---|
| 0 | 1 | 1975-01-01 | 839.682800 |
| 1 | 1 | 1975-02-01 | 802.071838 |
| 2 | 1 | 1975-03-01 | 896.117126 |
| … | … | … | … |
| 9 | 1 | 1975-10-01 | 824.135498 |
| 10 | 1 | 1975-11-01 | 795.691223 |
| 11 | 1 | 1975-12-01 | 833.316345 |

| | unique_id | ds | y | OptimizedTheta |
|---|---|---|---|---|
| 0 | 1 | 1962-01-01 | 589.0 | 860.899414 |
| 1 | 1 | 1962-02-01 | 561.0 | 675.671692 |
| 2 | 1 | 1962-03-01 | 640.0 | 635.231934 |
| 3 | 1 | 1962-04-01 | 656.0 | 614.731323 |
| 4 | 1 | 1962-05-01 | 727.0 | 609.770752 |

| | unique_id | ds | OptimizedTheta | OptimizedTheta-lo-95 | OptimizedTheta-hi-95 |
|---|---|---|---|---|---|
| 0 | 1 | 1975-01-01 | 839.682800 | 742.509583 | 955.414307 |
| 1 | 1 | 1975-02-01 | 802.071838 | 643.581360 | 945.119202 |
| 2 | 1 | 1975-03-01 | 896.117126 | 710.785095 | 1065.057495 |
| … | … | … | … | … | … |
| 9 | 1 | 1975-10-01 | 824.135498 | 555.948669 | 1084.320190 |
| 10 | 1 | 1975-11-01 | 795.691223 | 503.147858 | 1036.519531 |
| 11 | 1 | 1975-12-01 | 833.316345 | 530.259705 | 1106.636597 |

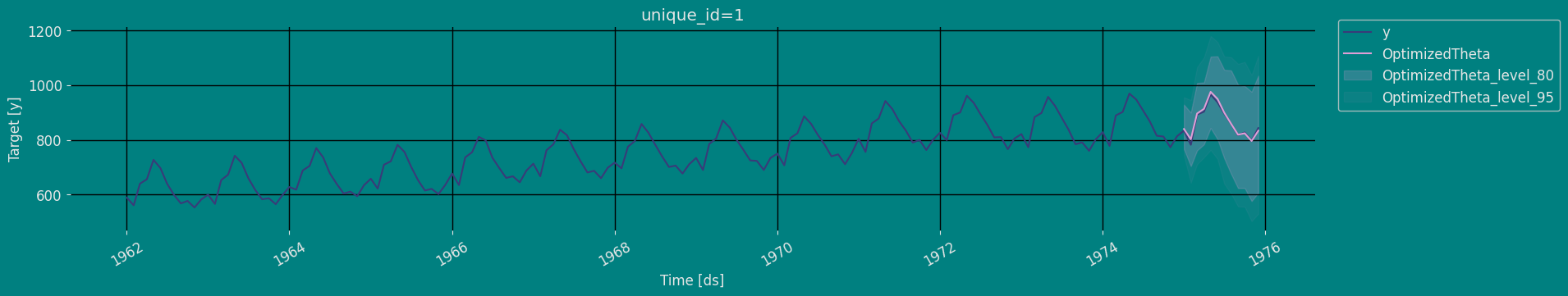

Predict method with confidence interval

To generate forecasts use the predict method. The predict method takes two arguments: h (the forecast horizon) and level.

- h (int): represents the forecast h steps into the future. In this case, 12 months ahead.

- level (list of floats): this optional parameter is used for probabilistic forecasting. Set the level (or confidence percentile) of your prediction interval. For example, level=[95] means that the model expects the real value to be inside that interval 95% of the time.

| | unique_id | ds | OptimizedTheta |
|---|---|---|---|
| 0 | 1 | 1975-01-01 | 839.682800 |
| 1 | 1 | 1975-02-01 | 802.071838 |
| 2 | 1 | 1975-03-01 | 896.117126 |
| … | … | … | … |
| 9 | 1 | 1975-10-01 | 824.135498 |
| 10 | 1 | 1975-11-01 | 795.691223 |
| 11 | 1 | 1975-12-01 | 833.316345 |

| | unique_id | ds | OptimizedTheta | OptimizedTheta-lo-80 | OptimizedTheta-hi-80 | OptimizedTheta-lo-95 | OptimizedTheta-hi-95 |
|---|---|---|---|---|---|---|---|
| 0 | 1 | 1975-01-01 | 839.682800 | 766.665955 | 928.326172 | 742.509583 | 955.414307 |
| 1 | 1 | 1975-02-01 | 802.071838 | 704.290039 | 899.335815 | 643.581360 | 945.119202 |
| 2 | 1 | 1975-03-01 | 896.117126 | 761.334778 | 1007.408447 | 710.785095 | 1065.057495 |
| … | … | … | … | … | … | … | … |
| 9 | 1 | 1975-10-01 | 824.135498 | 623.903992 | 996.567200 | 555.948669 | 1084.320190 |
| 10 | 1 | 1975-11-01 | 795.691223 | 576.546570 | 975.490784 | 503.147858 | 1036.519531 |
| 11 | 1 | 1975-12-01 | 833.316345 | 606.713623 | 1033.885742 | 530.259705 | 1106.636597 |

Cross-validation

In previous steps, we’ve taken our historical data to predict the future. However, to assess its accuracy we would also like to know how the model would have performed in the past. To assess the accuracy and robustness of your models on your data, perform cross-validation.

With time series data, cross-validation is done by defining a sliding window across the historical data and predicting the period following it. This form of cross-validation allows us to arrive at a better estimation of our model’s predictive abilities across a wider range of temporal instances while also keeping the data in the training set contiguous, as is required by our models. The following graph depicts such a cross-validation strategy:

Perform time series cross-validation

Cross-validation of time series models is considered a best practice but most implementations are very slow. The statsforecast library implements cross-validation as a distributed operation, making the process less time-consuming to perform. If you have big datasets you can also perform cross-validation in a distributed cluster using Ray, Dask or Spark.

In this case, we want to evaluate the performance of the model over the last 3 years of the training data (n_windows=3), moving the forecast origin forward 12 months at a time (step_size=12). Depending on your computer, this step should take around 1 min.
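To make the window layout concrete, the sketch below computes the cutoff dates by hand with pandas. It assumes windows are counted back from the end of the training data, which is consistent with the cutoff column in the output table further down; it is an illustration, not the library's internal code.

```python
import pandas as pd

# Monthly timestamps of the training period used in this guide.
ds = pd.date_range("1962-01-01", "1974-12-01", freq="MS")
h, step_size, n_windows = 12, 12, 3

windows = []
for i in range(n_windows):
    # Distance from the end of the data to the start of this window's test block.
    offset = h + (n_windows - 1 - i) * step_size
    start = len(ds) - offset
    cutoff = ds[start - 1]         # last timestamp used for training
    test_ds = ds[start:start + h]  # the h timestamps being forecast
    windows.append((cutoff, test_ds[0], test_ds[-1]))

for cutoff, first, last in windows:
    print(cutoff.date(), "->", first.date(), "to", last.date())
```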

The cross_validation method from the StatsForecast class takes the following arguments.

- df: training data frame.

- h (int): represents h steps into the future that are being forecasted. In this case, 12 months ahead.

- step_size (int): step size between each window. In other words: how often do you want to run the forecasting processes.

- n_windows (int): number of windows used for cross-validation. In other words: what number of forecasting processes in the past do you want to evaluate.

The crossvalidation_df object is a new data frame that includes the following columns:

- unique_id: index. If you don't like working with the index, just run crossvalidation_df.reset_index().

- ds: datestamp or temporal index.

- cutoff: the last datestamp or temporal index for the n_windows.

- y: true value.

- "model": columns with the model’s name and fitted value.

| | unique_id | ds | cutoff | y | OptimizedTheta |
|---|---|---|---|---|---|
| 0 | 1 | 1972-01-01 | 1971-12-01 | 826.0 | 828.836365 |
| 1 | 1 | 1972-02-01 | 1971-12-01 | 799.0 | 792.592346 |
| 2 | 1 | 1972-03-01 | 1971-12-01 | 890.0 | 883.269592 |
| … | … | … | … | … | … |
| 33 | 1 | 1974-10-01 | 1973-12-01 | 812.0 | 812.183838 |
| 34 | 1 | 1974-11-01 | 1973-12-01 | 773.0 | 783.898376 |
| 35 | 1 | 1974-12-01 | 1973-12-01 | 813.0 | 821.124329 |

Model Evaluation

Now we are going to evaluate our model with the results of the predictions. We will use several metrics (MAE, MAPE, MASE, RMSE and SMAPE) to evaluate the accuracy.

| | unique_id | metric | OptimizedTheta |
|---|---|---|---|
| 0 | 1 | mae | 6.740204 |
| 1 | 1 | mape | 0.007828 |
| 2 | 1 | mase | 0.303120 |
| 3 | 1 | rmse | 8.701501 |
| 4 | 1 | smape | 0.003893 |
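For reference, the five metrics can be written out in a few lines of NumPy. These are illustrative definitions rather than the library's implementation. In particular, the SMAPE variant below is bounded by 1, an assumption that fits the table above, where SMAPE is about half of MAPE. The inputs are the first three cross-validation rows shown earlier, so the numbers will not match the table, which was computed on a different set of points.

```python
import numpy as np

def mae(y, y_hat):
    return float(np.mean(np.abs(y - y_hat)))

def mape(y, y_hat):
    return float(np.mean(np.abs(y - y_hat) / np.abs(y)))

def rmse(y, y_hat):
    return float(np.sqrt(np.mean((y - y_hat) ** 2)))

def smape(y, y_hat):
    # Variant scaled to [0, 1] (assumed to match the table above).
    return float(np.mean(np.abs(y - y_hat) / (np.abs(y) + np.abs(y_hat))))

def mase(y, y_hat, y_train, seasonality):
    # Scale by the in-sample error of the seasonal naive forecast.
    scale = np.mean(np.abs(y_train[seasonality:] - y_train[:-seasonality]))
    return mae(y, y_hat) / float(scale)

# First three rows of the cross-validation table.
y = np.array([826.0, 799.0, 890.0])
y_hat = np.array([828.836365, 792.592346, 883.269592])

# Toy training history (first five observations); seasonality=1 only because
# five points are too few for a 12-month lag.
y_train = np.array([589.0, 561.0, 640.0, 656.0, 727.0])

scores = {
    "mae": mae(y, y_hat),
    "mape": mape(y, y_hat),
    "mase": mase(y, y_hat, y_train, seasonality=1),
    "rmse": rmse(y, y_hat),
    "smape": smape(y, y_hat),
}
print(scores)
```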

References

- Kostas I. Nikolopoulos, Dimitrios D. Thomakos. Forecasting with the Theta Method-Theory and Applications. 2019 John Wiley & Sons Ltd.

- Jose A. Fiorucci, Tiago R. Pellegrini, Francisco Louzada, Fotios Petropoulos, Anne B. Koehler (2016). “Models for optimising the theta method and their relationship to state space models”. International Journal of Forecasting.

- Nixtla OptimizedTheta API

- Pandas available frequencies.

- Rob J. Hyndman and George Athanasopoulos (2018). “Forecasting Principles and Practice (3rd ed)”.

- Seasonal periods- Rob J Hyndman.