Step-by-step guide on using the Holt Winters Model with Statsforecast.

During this walkthrough, we will become familiar with the main StatsForecast class and some relevant methods such as StatsForecast.plot, StatsForecast.forecast and StatsForecast.cross_validation, among others.

The text in this article is largely taken from:

1. Changquan Huang and Alla Petukhina. Springer series (2022). Applied Time Series Analysis and Forecasting with Python.
2. Ivan Svetunkov. Forecasting and Analytics with the Augmented Dynamic Adaptive Model (ADAM).
3. James D. Hamilton. Time Series Analysis. Princeton University Press, Princeton, New Jersey, 1st Edition, 1994.
4. Rob J. Hyndman and George Athanasopoulos (2018). “Forecasting Principles and Practice (3rd ed)”.

Table of Contents

- Introduction

- Holt-Winters Model

- Loading libraries and data

- Explore data with the plot method

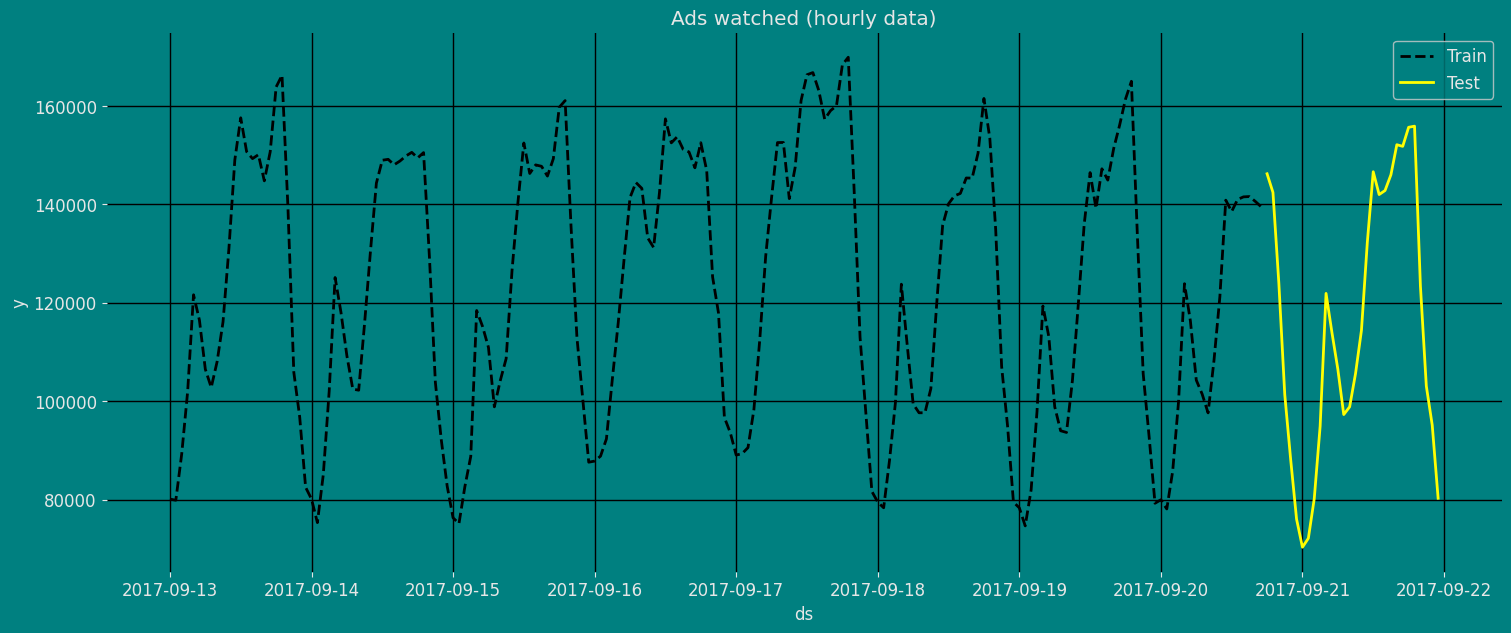

- Split the data into training and testing

- Implementation of Holt-Winters with StatsForecast

- Cross-validation

- Model evaluation

- References

Introduction

The Holt-Winters model, also known as the triple exponential smoothing method, is a forecasting technique widely used in time series analysis. It was developed by Charles Holt and Peter Winters in 1960 as an improvement on Holt’s double exponential smoothing method, and it is used to predict future values of a time series that exhibits both a trend and seasonality.

The model uses three smoothing parameters: α for the level (or base level) of the time series, β for the trend, and γ for the seasonality. Holt’s double exponential smoothing method uses only the first two, for the level and the trend. The Holt-Winters model adds the third parameter so that the forecasts can also follow the seasonal pattern.

One of the main advantages of the Holt-Winters model is that it is easy to implement and does not require a large amount of historical data to generate reasonable predictions. Furthermore, the model is adaptable and can be fitted to a wide variety of time series with seasonality.

However, the Holt-Winters model has some limitations. It assumes a fixed seasonal period and a trend and seasonal pattern that evolve smoothly. If the series has irregular or abruptly changing seasonality, the Holt-Winters model may not be the most appropriate.

In general, the Holt-Winters model is a useful and widely used technique in time series analysis, especially when the series is expected to exhibit a stable trend and seasonality.

Holt-Winters Method

The Holt-Winters seasonal method comprises the forecast equation and three smoothing equations: one for the level $\ell_t$, one for the trend $b_t$, and one for the seasonal component $s_t$, with corresponding smoothing parameters $\alpha$, $\beta^*$ and $\gamma$. We use $m$ to denote the period of the seasonality, i.e., the number of seasons in a year. For example, for quarterly data $m = 4$, and for monthly data $m = 12$.

There are two variations to this method that differ in the nature of the seasonal component. The additive method is preferred when the seasonal variations are roughly constant through the series, while the multiplicative method is preferred when the seasonal variations change in proportion to the level of the series.

With the additive method, the seasonal component is expressed in absolute terms in the scale of the observed series, and in the level equation the series is seasonally adjusted by subtracting the seasonal component. Within each year, the seasonal component will add up to approximately zero. With the multiplicative method, the seasonal component is expressed in relative terms (percentages), and the series is seasonally adjusted by dividing through by the seasonal component. Within each year, the seasonal component will sum up to approximately $m$.

Holt-Winters’ additive method

Holt-Winters’ additive method is a time series forecasting technique that extends Holt’s linear trend method by incorporating an additive seasonality component. It is suitable for time series whose seasonal variations are roughly constant in magnitude through the series.

The method uses three smoothing parameters, alpha (α), beta (β), and gamma (γ), to estimate the level, trend, and seasonal components of the time series. The alpha parameter controls the smoothing of the level component, the beta parameter controls the smoothing of the trend component, and the gamma parameter controls the smoothing of the additive seasonal component.

The forecasting process involves three steps: first, the level, trend, and seasonal components are estimated using the smoothing parameters and the historical data; second, these components are used to forecast future values of the time series; and third, the forecasted values are adjusted for the seasonal component using an additive factor.

One of the advantages of Holt-Winters’ additive method is that it can handle time series data with an additive seasonality component, which is common in many real-world applications. The method is also easy to implement, and because the seasonal indices are updated at every step it can follow seasonal patterns that change gradually.

However, the method has some limitations. It assumes that the seasonality pattern is additive, which may not be the case for all time series. Additionally, the method requires a sufficient amount of historical data to accurately estimate the smoothing parameters and the seasonal component.
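The three steps described above can be sketched in a few lines of plain Python. This is a minimal illustration with fixed smoothing parameters and a simple heuristic for the initial states; the function name `holt_winters_additive` and the initialisation are assumptions of this sketch, not how StatsForecast estimates the model.

```python
def holt_winters_additive(y, m, alpha, beta, gamma, h):
    """Additive Holt-Winters with fixed parameters; returns h forecasts.

    Assumes len(y) >= 2 * m. The initial states below are a simple
    heuristic chosen for this sketch, not an optimised estimate.
    """
    if len(y) < 2 * m:
        raise ValueError("need at least two full seasons of data")
    # Initial level: mean of the first season.
    level = sum(y[:m]) / m
    # Initial trend: average per-step change between the first two seasons.
    trend = (sum(y[m:2 * m]) - sum(y[:m])) / (m * m)
    # Initial seasonal indices: deviation of each first-season value from the level.
    season = [y[i] - level for i in range(m)]

    for t in range(m, len(y)):
        s_prev = season[t % m]      # seasonal index from m periods ago
        base = level + trend        # non-seasonal one-step forecast
        new_level = alpha * (y[t] - s_prev) + (1 - alpha) * base
        trend = beta * (new_level - level) + (1 - beta) * trend
        season[t % m] = gamma * (y[t] - base) + (1 - gamma) * s_prev
        level = new_level

    n = len(y)
    # Forecast: last level + k * last trend + latest index for that season.
    return [level + k * trend + season[(n + k - 1) % m] for k in range(1, h + 1)]


# A purely periodic toy series with no trend: the forecast repeats the pattern.
toy = [10, 20, 30, 40] * 3
forecast = holt_winters_additive(toy, m=4, alpha=0.5, beta=0.5, gamma=0.5, h=4)
print(forecast)  # [10.0, 20.0, 30.0, 40.0]
```

In practice the smoothing parameters and initial states are estimated from the data rather than fixed by hand.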
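The component form for the additive method is:

$$
\begin{aligned}
\hat{y}_{t+h|t} &= \ell_t + h b_t + s_{t+h-m(k+1)} \\
\ell_t &= \alpha (y_t - s_{t-m}) + (1-\alpha)(\ell_{t-1} + b_{t-1}) \\
b_t &= \beta^* (\ell_t - \ell_{t-1}) + (1-\beta^*) b_{t-1} \\
s_t &= \gamma (y_t - \ell_{t-1} - b_{t-1}) + (1-\gamma) s_{t-m}
\end{aligned}
$$

where $k$ is the integer part of $(h-1)/m$, which ensures that the estimates of the seasonal indices used for forecasting come from the final year of the sample. The level equation shows a weighted average between the seasonally adjusted observation $(y_t - s_{t-m})$ and the non-seasonal forecast $(\ell_{t-1} + b_{t-1})$ for time $t$. The trend equation is identical to Holt’s linear method. The seasonal equation shows a weighted average between the current seasonal index, $(y_t - \ell_{t-1} - b_{t-1})$, and the seasonal index of the same season last year (i.e., $m$ time periods ago).

The equation for the seasonal component is often expressed as

$$s_t = \gamma^* (y_t - \ell_t) + (1-\gamma^*) s_{t-m}.$$

If we substitute $\ell_t$ from the smoothing equation for the level of the component form above, we get

$$s_t = \gamma^* (1-\alpha)(y_t - \ell_{t-1} - b_{t-1}) + [1 - \gamma^*(1-\alpha)] s_{t-m},$$

which is identical to the smoothing equation for the seasonal component we specify here, with $\gamma = \gamma^*(1-\alpha)$. The usual parameter restriction is $0 \le \gamma^* \le 1$, which translates to $0 \le \gamma \le 1-\alpha$.

Holt-Winters’ multiplicative method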
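For reference, the component form of the multiplicative method can be written in the same way, following the presentation in Hyndman and Athanasopoulos. Here $\ell_t$ is the level, $b_t$ the trend, $s_t$ the seasonal component, $m$ the seasonal period, and $k$ the integer part of $(h-1)/m$. The only change from the additive form is that the seasonal component enters by multiplication and division rather than addition and subtraction.

```latex
\begin{align*}
\hat{y}_{t+h|t} &= (\ell_t + h b_t)\, s_{t+h-m(k+1)} \\
\ell_t &= \alpha \frac{y_t}{s_{t-m}} + (1-\alpha)(\ell_{t-1} + b_{t-1}) \\
b_t &= \beta^* (\ell_t - \ell_{t-1}) + (1-\beta^*) b_{t-1} \\
s_t &= \gamma \frac{y_t}{\ell_{t-1} + b_{t-1}} + (1-\gamma) s_{t-m}
\end{align*}
```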

The Holt-Winters’ multiplicative method uses three smoothing parameters, alpha (α), beta (β), and gamma (γ), to estimate the level, trend, and seasonal components of the time series. The alpha parameter controls the smoothing of the level component, the beta parameter controls the smoothing of the trend component, and the gamma parameter controls the smoothing of the multiplicative seasonal component.

The forecasting process involves three steps: first, the level, trend, and seasonal components are estimated using the smoothing parameters and the historical data; second, these components are used to forecast future values of the time series; and third, the forecasted values are adjusted for the seasonal component using a multiplicative factor.

One of the advantages of Holt-Winters’ multiplicative method is that it can handle time series data with a multiplicative seasonality component, which is common in many real-world applications. The method is also easy to implement, and because the seasonal factors are updated at every step it can follow seasonal patterns that change gradually.

However, the method has some limitations. It assumes that the seasonality pattern is multiplicative, which may not be the case for all time series. Additionally, the method requires a sufficient amount of historical data to accurately estimate the smoothing parameters and the seasonal component.

In the multiplicative version, the seasonal factors average to one. Use the multiplicative method if the seasonal variation increases with the level.

Mathematical models in the ETS taxonomy

The ETS framework is built upon the idea of time series decomposition. By introducing different components, defining their types, and adding the equations for their update, we can construct models that work better in capturing the key features of the time series. But we should also consider the potential change in components over time. The “transition” or “state” equations are supposed to reflect this change: they explain how the level, trend or seasonal components evolve.

Given different types of components and their interactions, we end up with 30 models in the taxonomy. Tables 1 and 2 summarise all 30 ETS models, organised by trend type (rows) and seasonal type (columns). Each cell corresponds to one set of measurement and transition equations.

Table 1: Additive error ETS models

| Trend | Nonseasonal | Additive | Multiplicative |
|---|---|---|---|
| No trend | ETS(A,N,N) | ETS(A,N,A) | ETS(A,N,M) |
| Additive | ETS(A,A,N) | ETS(A,A,A) | ETS(A,A,M) |
| Additive damped | ETS(A,Ad,N) | ETS(A,Ad,A) | ETS(A,Ad,M) |
| Multiplicative | ETS(A,M,N) | ETS(A,M,A) | ETS(A,M,M) |
| Multiplicative damped | ETS(A,Md,N) | ETS(A,Md,A) | ETS(A,Md,M) |

Table 2: Multiplicative error ETS models

| Trend | Nonseasonal | Additive | Multiplicative |
|---|---|---|---|
| No trend | ETS(M,N,N) | ETS(M,N,A) | ETS(M,N,M) |
| Additive | ETS(M,A,N) | ETS(M,A,A) | ETS(M,A,M) |
| Additive damped | ETS(M,Ad,N) | ETS(M,Ad,A) | ETS(M,Ad,M) |
| Multiplicative | ETS(M,M,N) | ETS(M,M,A) | ETS(M,M,M) |
| Multiplicative damped | ETS(M,Md,N) | ETS(M,Md,A) | ETS(M,Md,M) |
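The count of 30 follows directly from combining two error types, five trend types and three seasonal types. A minimal sketch that enumerates the labels (the short codes N, A, Ad, M and Md are the usual ETS abbreviations; the helper name `ets_taxonomy` is made up for this example):

```python
from itertools import product

ERRORS = ["A", "M"]                     # additive, multiplicative
TRENDS = ["N", "A", "Ad", "M", "Md"]    # none, additive, additive damped, multiplicative, multiplicative damped
SEASONS = ["N", "A", "M"]               # none, additive, multiplicative


def ets_taxonomy():
    """Return every ETS(error,trend,seasonal) label in the taxonomy."""
    return [f"ETS({e},{t},{s})" for e, t, s in product(ERRORS, TRENDS, SEASONS)]


models = ets_taxonomy()
print(len(models))   # 30
print(models[:3])    # ['ETS(A,N,N)', 'ETS(A,N,A)', 'ETS(A,N,M)']
```

In this notation, the additive Holt-Winters method with additive errors corresponds to ETS(A,A,A), and the multiplicative method with multiplicative errors to ETS(M,A,M).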

Model selection

A great advantage of the Holt Winters statistical framework is that information criteria can be used for model selection. The AIC, AIC_c and BIC can be used here to determine which of the Holt Winters models is most appropriate for a given time series.

For Holt Winters models, Akaike’s Information Criterion (AIC) is defined as

$$\text{AIC} = -2\log(L) + 2k,$$

where $L$ is the likelihood of the model and $k$ is the total number of parameters and initial states that have been estimated (including the residual variance).

The AIC corrected for small sample bias (AIC_c) is defined as

$$\text{AIC}_c = \text{AIC} + \frac{2k(k+1)}{T-k-1},$$

and the Bayesian Information Criterion (BIC) is

$$\text{BIC} = \text{AIC} + k[\log(T) - 2],$$

where $T$ is the number of observations.
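As a small worked example, the three criteria can be computed from a model's log-likelihood, the number of estimated parameters and initial states `k`, and the number of observations `T`. The function name and the numbers below are hypothetical and only illustrate the arithmetic of AIC = -2 log(L) + 2k, AIC_c = AIC + 2k(k+1)/(T-k-1) and BIC = AIC + k[log(T) - 2].

```python
import math


def information_criteria(log_likelihood, k, T):
    """Return (AIC, AICc, BIC) for a fitted model.

    log_likelihood: log of the model likelihood, log(L)
    k: number of estimated parameters and initial states (incl. residual variance)
    T: number of observations
    """
    if T - k - 1 <= 0:
        raise ValueError("AICc requires T > k + 1")
    aic = -2.0 * log_likelihood + 2.0 * k
    aicc = aic + 2.0 * k * (k + 1) / (T - k - 1)
    bic = aic + k * (math.log(T) - 2.0)
    return aic, aicc, bic


# Hypothetical values, for illustration only.
aic, aicc, bic = information_criteria(log_likelihood=-100.0, k=5, T=50)
print(aic)   # 210.0
```

The model with the lowest value of the chosen criterion is preferred. AIC_c penalises extra parameters more heavily than AIC when `T` is small relative to `k`.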

Three of the combinations of (Error, Trend, Seasonal) can lead to numerical difficulties. Specifically, the models that can cause such instabilities are ETS(A,N,M), ETS(A,A,M), and ETS(A,Ad,M), due to division by values potentially close to zero in the state equations. We normally do not consider these particular combinations when selecting a model.

Models with multiplicative errors are useful when the data are strictly positive, but are not numerically stable when the data contain zeros or negative values. Therefore, multiplicative error models will not be considered if the time series is not strictly positive. In that case, only the six fully additive models will be applied.
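These two exclusion rules can be sketched as a filter over candidate models. The sketch assumes the trend types are restricted to none, additive and additive damped (as in Hyndman and Athanasopoulos), which is the setting in which "six fully additive models" arises. The function name `candidate_models` is hypothetical.

```python
from itertools import product

# Combinations named in the text as numerically unstable.
UNSTABLE = {("A", "N", "M"), ("A", "A", "M"), ("A", "Ad", "M")}


def candidate_models(strictly_positive):
    """List the ETS candidates considered for a series.

    strictly_positive: True if every observation is > 0.
    """
    trends = ["N", "A", "Ad"]
    seasons = ["N", "A", "M"]
    # Multiplicative errors are only considered for strictly positive data.
    errors = ["A", "M"] if strictly_positive else ["A"]
    selected = []
    for e, t, s in product(errors, trends, seasons):
        if (e, t, s) in UNSTABLE:
            continue  # division by values that may be close to zero
        if not strictly_positive and s == "M":
            continue  # keep only fully additive models
        selected.append(f"ETS({e},{t},{s})")
    return selected


print(len(candidate_models(strictly_positive=True)))    # 15
print(candidate_models(strictly_positive=False))
```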

Loading libraries and data

Tip: Statsforecast will be needed. To install, see instructions.

Next, we import plotting libraries and configure the plotting style.

Read Data

| | Time | Ads |
|---|---|---|
| 0 | 2017-09-13T00:00:00 | 80115 |
| 1 | 2017-09-13T01:00:00 | 79885 |
| 2 | 2017-09-13T02:00:00 | 89325 |
| 3 | 2017-09-13T03:00:00 | 101930 |
| 4 | 2017-09-13T04:00:00 | 121630 |

The input to StatsForecast is a data frame in long format with three columns:

- The unique_id (string, int or category) represents an identifier for the series.

- The ds (datestamp) column should be of a format expected by Pandas, ideally YYYY-MM-DD for a date or YYYY-MM-DD HH:MM:SS for a timestamp.

- The y (numeric) represents the measurement we wish to forecast.

| | ds | y | unique_id |
|---|---|---|---|
| 0 | 2017-09-13T00:00:00 | 80115 | 1 |
| 1 | 2017-09-13T01:00:00 | 79885 | 1 |
| 2 | 2017-09-13T02:00:00 | 89325 | 1 |
| 3 | 2017-09-13T03:00:00 | 101930 | 1 |
| 4 | 2017-09-13T04:00:00 | 121630 | 1 |

We can see that our time variable (ds) is in an object format, so we need to convert it to a date format.
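The renaming and type conversion can be sketched with pandas as follows. To keep the example self-contained it rebuilds only the five rows shown in the tables above instead of reading the full file; the variable names are arbitrary.

```python
import pandas as pd

# First five rows of the ads data shown above, with the raw column names.
raw = pd.DataFrame({
    "Time": ["2017-09-13T00:00:00", "2017-09-13T01:00:00", "2017-09-13T02:00:00",
             "2017-09-13T03:00:00", "2017-09-13T04:00:00"],
    "Ads": [80115, 79885, 89325, 101930, 121630],
})

# Rename to the column names StatsForecast expects.
df = raw.rename(columns={"Time": "ds", "Ads": "y"})
df["unique_id"] = "1"                 # a single series, so one constant identifier
df["ds"] = pd.to_datetime(df["ds"])   # object (string) -> datetime64

print(df.dtypes)
```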

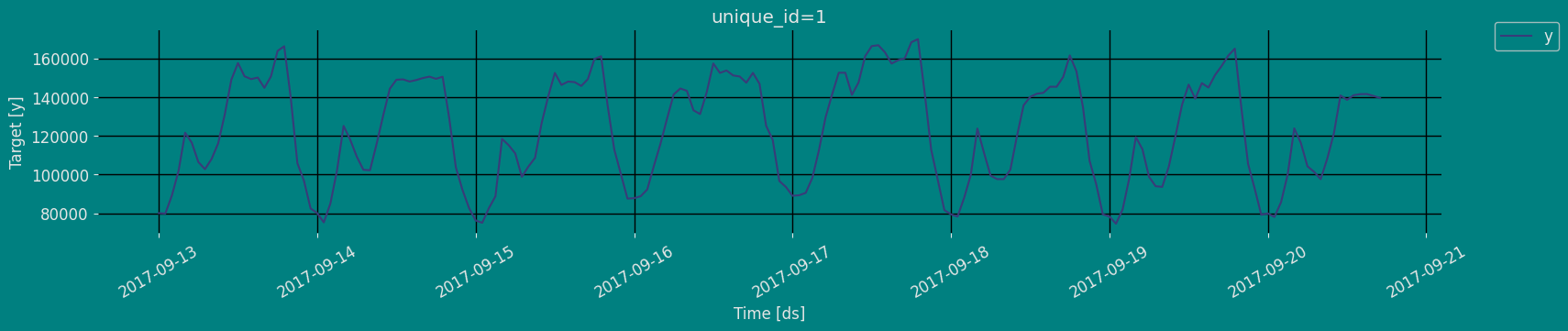

Explore Data with the plot method

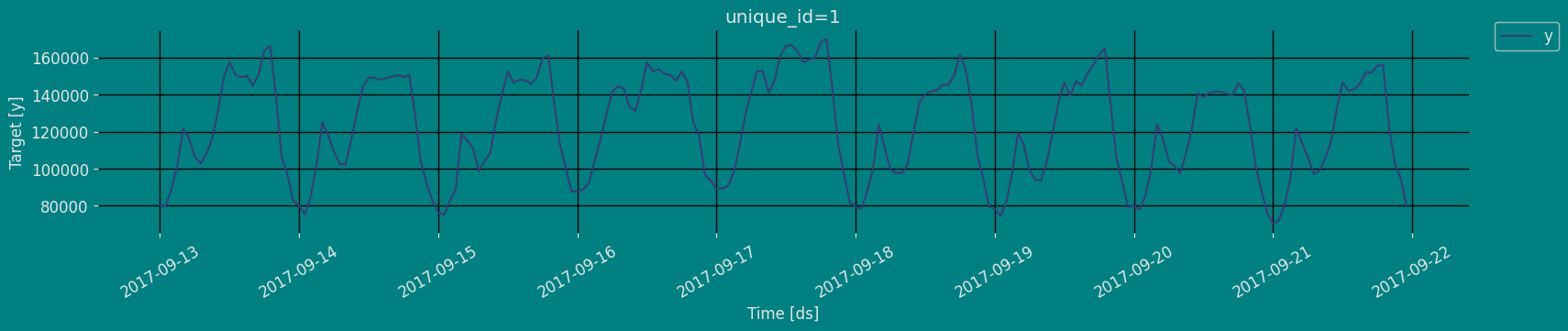

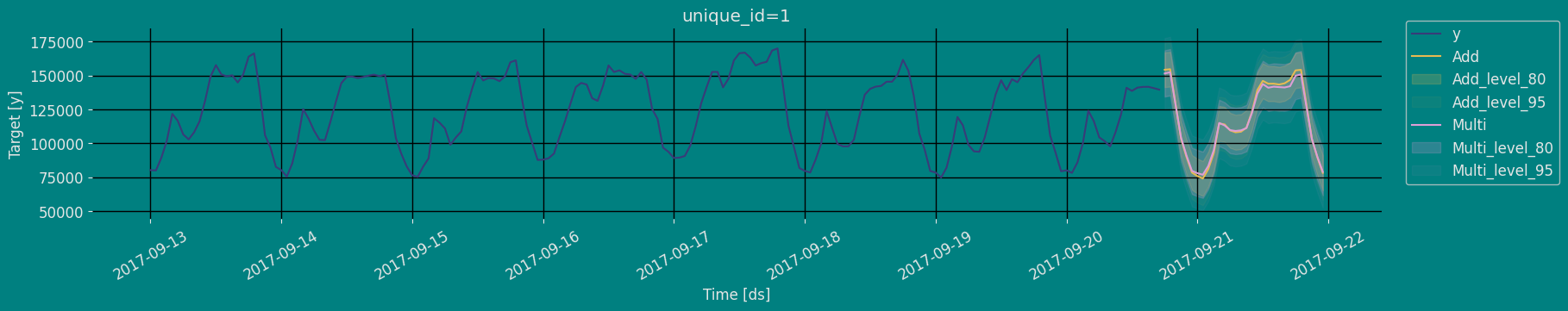

Plot some series using the plot method from the StatsForecast class. This method prints a random series from the dataset and is useful for basic EDA.

The Augmented Dickey-Fuller Test

An Augmented Dickey-Fuller (ADF) test is a statistical test that determines whether a unit root is present in time series data. Unit roots can cause unpredictable results in time series analysis. The null hypothesis of the test is that the series has a unit root, that is, that it is non-stationary. If we fail to reject the null hypothesis, we have no evidence that the series is stationary. If we reject the null hypothesis in favour of the alternative, we accept the evidence that the series is generated by a stationary (or trend-stationary) process. The ADF test statistic is usually negative, and the more negative it is, the stronger the rejection of the null hypothesis.

We can state the hypotheses and the decision rule as follows:

- Null Hypothesis: Time Series is non-stationary. It gives a time-dependent trend.

- Alternate Hypothesis: Time Series is stationary. In another term, the series doesn’t depend on time.

- ADF or t Statistic < critical values: Reject the null hypothesis, time series is stationary.

- ADF or t Statistic > critical values: Failed to reject the null hypothesis, time series is non-stationary.
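The decision rule in the last two bullets can be written as a small helper. In practice the statistic and critical values would come from a routine such as `adfuller` in `statsmodels.tsa.stattools`, which returns the test statistic together with a dictionary of critical values keyed by "1%", "5%" and "10%". The numbers below are hypothetical and are not results for the ads data.

```python
def adf_decision(statistic, critical_values, level="5%"):
    """Apply the ADF decision rule at the chosen significance level."""
    threshold = critical_values[level]
    if statistic < threshold:
        return "reject H0: evidence that the series is stationary"
    return "fail to reject H0: no evidence against a unit root"


# Hypothetical values, for illustration only.
crit = {"1%": -3.46, "5%": -2.87, "10%": -2.57}
print(adf_decision(-4.10, crit))   # statistic below the 5% critical value
print(adf_decision(-1.20, crit))   # statistic above the 5% critical value
```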

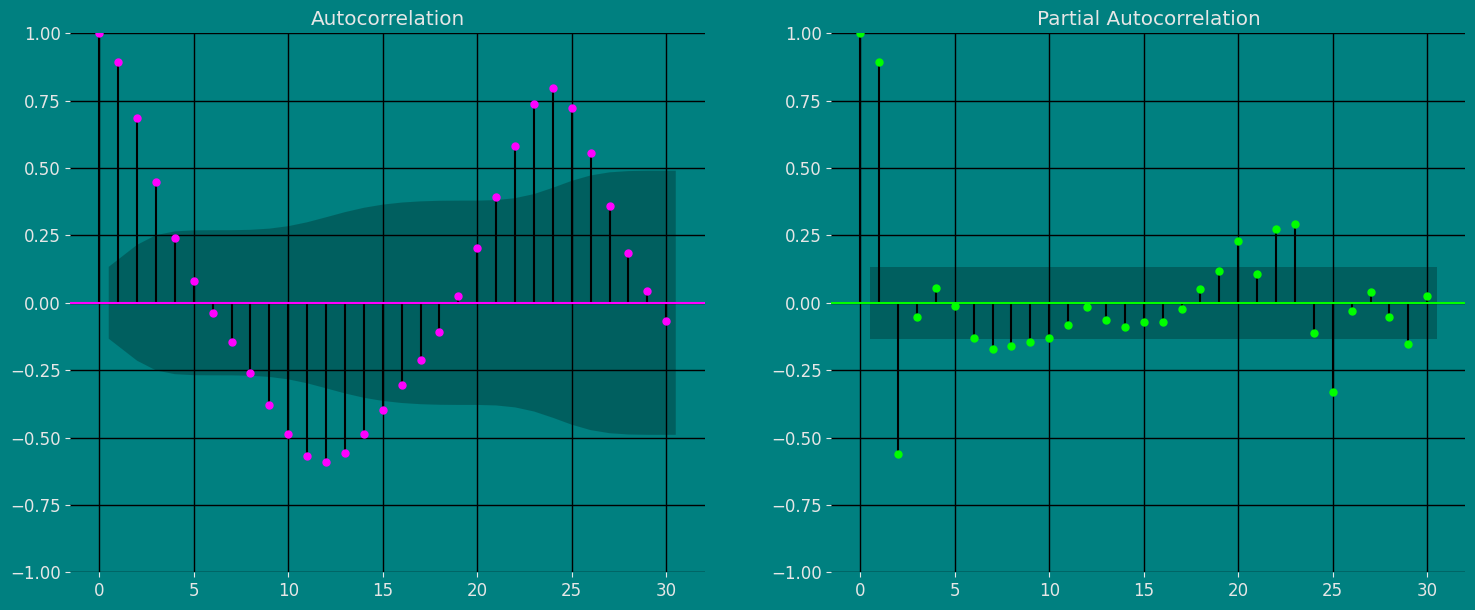

Autocorrelation plots

Autocorrelation Function

Definition 1. Let $\{x_t; 1 \le t \le n\}$ be a time series sample of size $n$ from $\{X_t\}$.

1. $\bar{x} = \frac{1}{n}\sum_{t=1}^{n} x_t$ is called the sample mean of $\{X_t\}$.
2. $c_k = \frac{1}{n}\sum_{t=1}^{n-k} (x_{t+k} - \bar{x})(x_t - \bar{x})$ is known as the sample autocovariance function of $\{X_t\}$.
3. $r_k = c_k / c_0$ is said to be the sample autocorrelation function of $\{X_t\}$.

Note the following remarks about this definition:

- Like most literature, this guide uses ACF to denote the sample autocorrelation function as well as the autocorrelation function. What is denoted by ACF can easily be identified in context.

- Clearly $c_0$ is the sample variance of $\{X_t\}$. Besides, $r_0 = c_0/c_0 = 1$ and $|r_k| \le 1$ for any integer $k$.

- When we compute the ACF of any sample series with a fixed length $n$, we cannot put too much confidence in the values of $r_k$ for large $k$, since fewer pairs $(x_{t+k}, x_t)$ are available for calculating $r_k$ when $k$ is large. One rule of thumb is not to estimate $r_k$ for $k > n/3$, and another is $n \ge 50$, $k \le n/4$. In any case, it is always a good idea to be careful.

- We also compute the ACF of a nonstationary time series sample by Definition 1. In this case, however, the ACF $r_k$ tapers off very slowly or hardly at all as $k$ increases.

- Plotting the ACF $r_k$ against lag $k$ is easy but very helpful in analyzing a time series sample. Such an ACF plot is known as a correlogram.

- If $\{X_t\}$ is stationary with $E(X_t) = 0$ and $\rho_k = 0$ for all $k \neq 0$, that is, it is a white noise series, then the sampling distribution of $r_k$ is asymptotically normal with mean 0 and variance $1/n$. Hence, there is about a 95% chance that $r_k$ falls in the interval $[-1.96/\sqrt{n}, 1.96/\sqrt{n}]$.
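Definition 1 translates directly into code. The sketch below computes the sample autocorrelations with the 1/n normalisation for both the autocovariance and the variance, plus the approximate 95% white-noise band of 1.96/sqrt(n). The function names are made up for this example, and the toy series is not the ads data.

```python
import math


def sample_acf(x, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag of a sequence x."""
    n = len(x)
    mean = sum(x) / n
    dev = [v - mean for v in x]
    c0 = sum(d * d for d in dev) / n          # sample variance (1/n form)
    if c0 == 0:
        raise ValueError("a constant series has undefined autocorrelation")
    acf = []
    for k in range(max_lag + 1):
        ck = sum(dev[t + k] * dev[t] for t in range(n - k)) / n
        acf.append(ck / c0)
    return acf


def white_noise_band(n):
    """Half-width of the approximate 95% band for r_k under white noise."""
    return 1.96 / math.sqrt(n)


x = [1, 2, 3, 4] * 6              # toy series with period 4
r = sample_acf(x, max_lag=8)
band = white_noise_band(len(x))
print(r[0])                       # 1.0
print(r[4] > band)                # True: strong correlation at the seasonal lag
```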

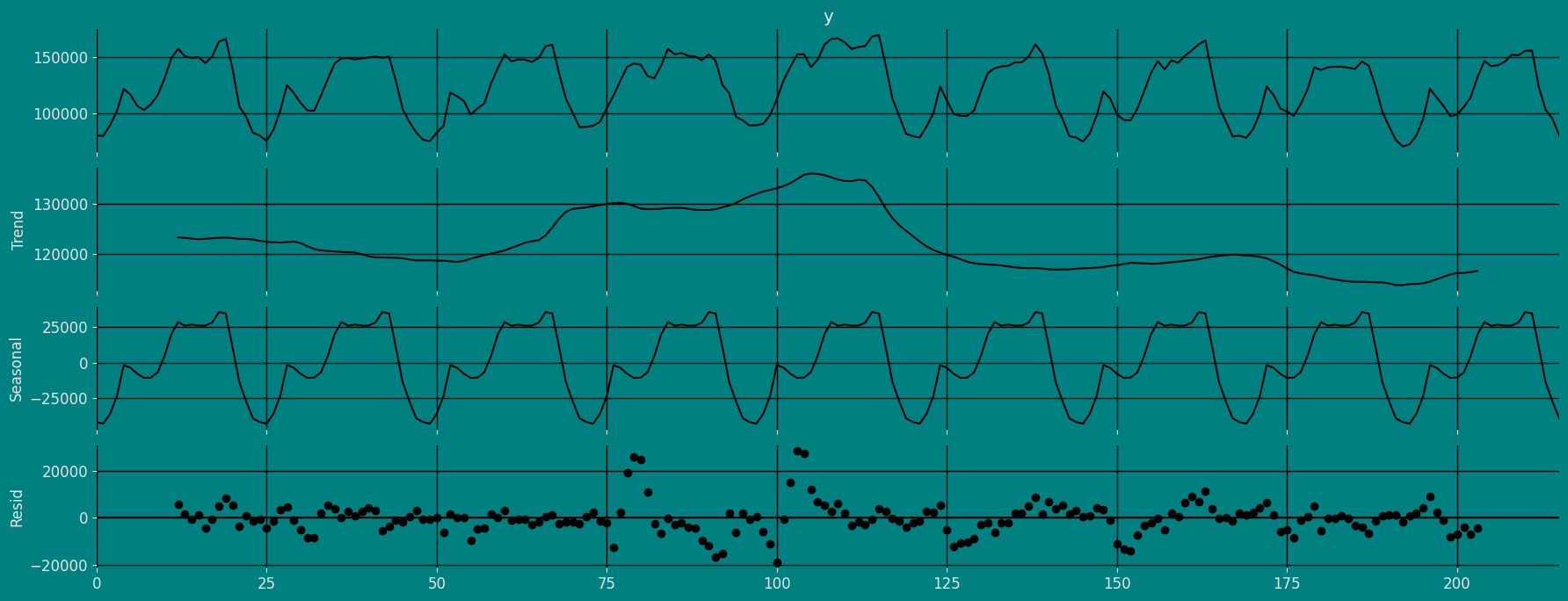

Decomposition of the time series

How to decompose a time series and why?

In time series analysis, to forecast new values it is very important to know past data. More formally, it is very important to know the patterns that values follow over time. There can be many reasons that cause our forecast values to fall in the wrong direction. Basically, a time series consists of four components. The variation of those components causes the change in the pattern of the time series. These components are:

- Level: This is the primary value that averages over time.

- Trend: The trend is the value that causes increasing or decreasing patterns in a time series.

- Seasonality: This is a cyclical event that occurs in a time series for a short time and causes short-term increasing or decreasing patterns in a time series.

- Residual/Noise: These are the random variations in the time series.

Additive time series

If the components of the time series are added together to make the time series, then the time series is called an additive time series. Visually, a time series is additive if the increasing or decreasing pattern of the time series is similar throughout the series. The mathematical function of any additive time series can be represented by:

$$y(t) = \text{level} + \text{trend} + \text{seasonality} + \text{noise}$$

Multiplicative time series

If the components of the time series are multiplied together, then the time series is called a multiplicative time series. Visually, if the time series shows exponential growth or decline with time, then it can be considered a multiplicative time series. The mathematical function of the multiplicative time series can be represented as:

$$y(t) = \text{level} \times \text{trend} \times \text{seasonality} \times \text{noise}$$
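The practical difference between the two forms can be seen on a synthetic example. The sketch below builds one additive and one multiplicative series from the same rising trend (noise is omitted to keep it short, and all numbers are invented) and compares the size of the seasonal swing at the start and at the end.

```python
# Same rising trend for both series (invented numbers).
trend = [100 + 2 * t for t in range(8)]
seasonal_add = [5, -5] * 4       # additive effect, sums to 0 over each cycle
seasonal_mul = [1.1, 0.9] * 4    # multiplicative factor, averages to 1 over each cycle

additive = [tr + s for tr, s in zip(trend, seasonal_add)]
multiplicative = [tr * s for tr, s in zip(trend, seasonal_mul)]

# Distance of each observation from the trend, i.e. the seasonal swing.
add_swing = [abs(y - tr) for y, tr in zip(additive, trend)]
mul_swing = [abs(y - tr) for y, tr in zip(multiplicative, trend)]

print(add_swing[0], add_swing[-1])   # constant swing for the additive series
print(mul_swing[0], mul_swing[-1])   # swing grows with the level
```

A constant swing points to the additive form, while a swing that scales with the level points to the multiplicative form.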

Split the data into training and testing

Let’s divide our data into two sets:

- Data to train our Holt Winters Model.

- Data to test our model.
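A time-based split keeps the test set strictly after the training set. The sketch below uses a synthetic hourly frame with the same date range as the ads data (216 hours starting 2017-09-13) and a cutoff of 2017-09-20 17:00:00. That cutoff is an assumption inferred from the forecast tables in this guide, where the first forecast timestamp is 2017-09-20 18:00:00.

```python
import pandas as pd

# Synthetic stand-in for the hourly series: 216 hours from 2017-09-13 00:00.
ds = pd.date_range("2017-09-13 00:00:00", periods=216, freq="h")
df = pd.DataFrame({"unique_id": "1", "ds": ds, "y": range(216)})

# Everything up to the cutoff is used for training, the rest for testing.
cutoff = pd.Timestamp("2017-09-20 17:00:00")
train = df[df["ds"] <= cutoff]
test = df[df["ds"] > cutoff]

print(train.shape, test.shape)   # (186, 3) (30, 3)
```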

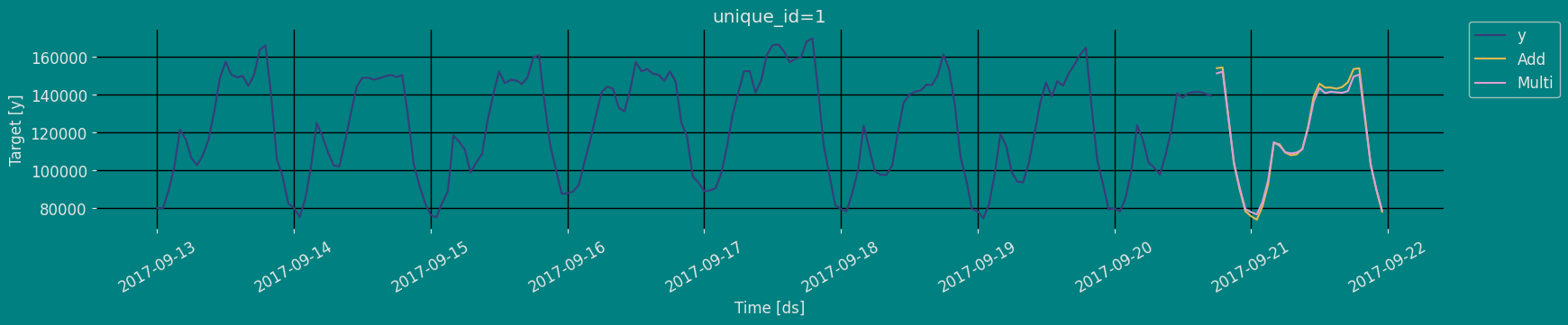

Implementation of Holt-Winters Method with StatsForecast

Load libraries

Instantiating Model

Import and instantiate the models. Setting the season_length argument is sometimes tricky. This article on Seasonal periods by the master, Rob Hyndman, can be useful for choosing season_length.

In this case we are going to test two alternatives of the model, one additive and one multiplicative.

We then instantiate a StatsForecast object with the following parameters:

- models: a list of models. Select the models you want from models and import them.

- freq: a string indicating the frequency of the data. (See pandas’ available frequencies.)

- n_jobs: int, number of jobs used in the parallel processing, use -1 for all cores.

- fallback_model: a model to be used if a model fails.

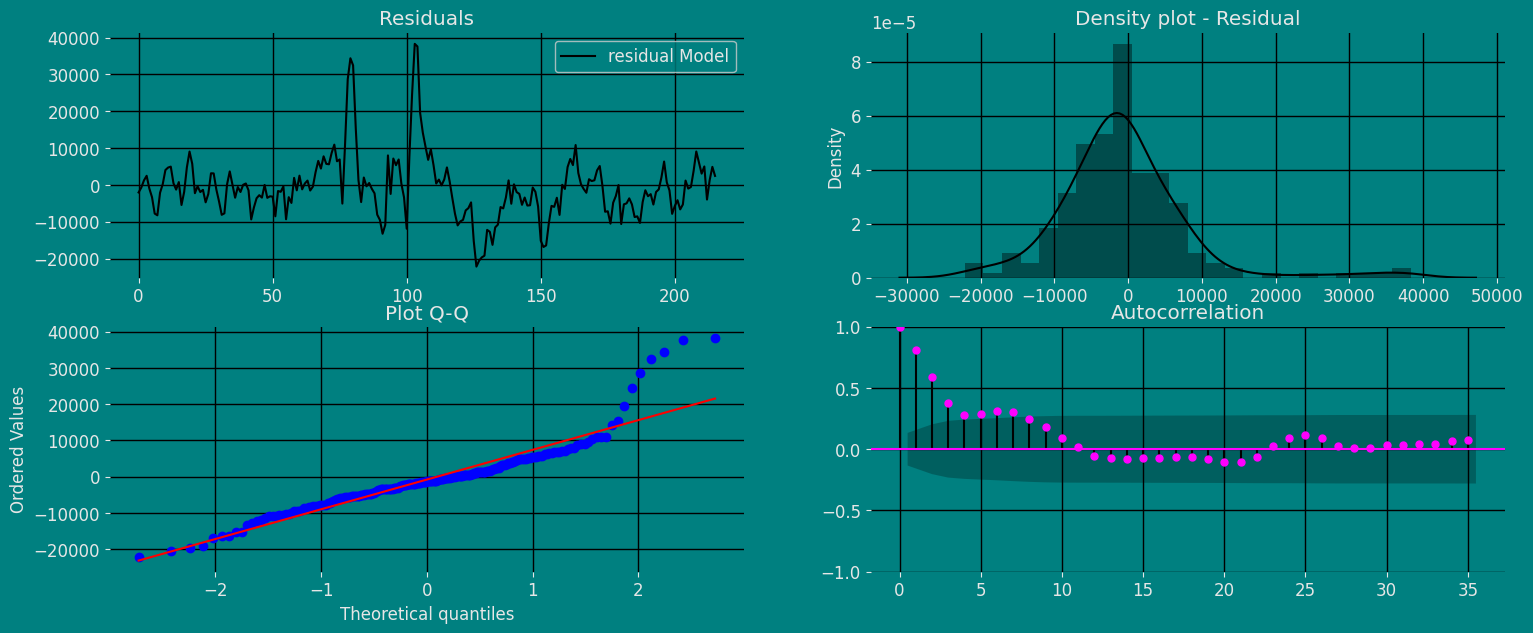

Fit the Model

After fitting, we can inspect the results of our Holt Winters Model. The fitted result is stored as a dictionary, so we use the .get() function to extract an element, such as the residuals, and then save it in a pd.DataFrame().

| | residual Model |
|---|---|
| 0 | -1087.029091 |
| 1 | 623.989786 |
| 2 | 3054.101324 |
| … | … |
| 183 | -2783.032921 |
| 184 | -4618.147123 |
| 185 | -8194.063498 |

Forecast Method

If you want to gain speed in productive settings where you have multiple series or models, we recommend using the StatsForecast.forecast method instead of .fit and .predict.

The main difference is that .forecast does not store the fitted values and is highly scalable in distributed environments.

The forecast method takes two arguments: h (the forecast horizon) and level.

- h (int): represents the forecast h steps into the future. In this case, 30 hours ahead.

- level (list of floats): this optional parameter is used for probabilistic forecasting. Set the level (or confidence percentile) of your prediction interval. For example, level=[90] means that the model expects the real value to be inside that interval 90% of the times.

| | unique_id | ds | Add | Multi |
|---|---|---|---|---|
| 0 | 1 | 2017-09-20 18:00:00 | 154164.609375 | 151414.984375 |
| 1 | 1 | 2017-09-20 19:00:00 | 154547.171875 | 152352.640625 |
| 2 | 1 | 2017-09-20 20:00:00 | 128790.359375 | 128274.789062 |
| … | … | … | … | … |
| 27 | 1 | 2017-09-21 21:00:00 | 103021.726562 | 103086.851562 |
| 28 | 1 | 2017-09-21 22:00:00 | 89544.054688 | 90028.406250 |
| 29 | 1 | 2017-09-21 23:00:00 | 78090.210938 | 78823.953125 |

| | unique_id | ds | y | Add | Multi |
|---|---|---|---|---|---|
| 0 | 1 | 2017-09-13 00:00:00 | 80115.0 | 81202.031250 | 79892.687500 |
| 1 | 1 | 2017-09-13 01:00:00 | 79885.0 | 79261.007812 | 78792.476562 |
| 2 | 1 | 2017-09-13 02:00:00 | 89325.0 | 86270.898438 | 85444.117188 |
| 3 | 1 | 2017-09-13 03:00:00 | 101930.0 | 97905.273438 | 97286.796875 |
| 4 | 1 | 2017-09-13 04:00:00 | 121630.0 | 120287.523438 | 118195.570312 |

| | unique_id | ds | Add | Add-lo-95 | Add-hi-95 | Multi | Multi-lo-95 | Multi-hi-95 |
|---|---|---|---|---|---|---|---|---|
| 0 | 1 | 2017-09-20 18:00:00 | 154164.609375 | 134594.859375 | 173734.375000 | 151414.984375 | 125296.867188 | 177533.109375 |
| 1 | 1 | 2017-09-20 19:00:00 | 154547.171875 | 134970.062500 | 174124.265625 | 152352.640625 | 126234.515625 | 178470.765625 |
| 2 | 1 | 2017-09-20 20:00:00 | 128790.359375 | 109205.242188 | 148375.484375 | 128274.789062 | 102156.671875 | 154392.906250 |
| … | … | … | … | … | … | … | … | … |
| 27 | 1 | 2017-09-21 21:00:00 | 103021.726562 | 83118.632812 | 122924.812500 | 103086.851562 | 76659.867188 | 129513.835938 |
| 28 | 1 | 2017-09-21 22:00:00 | 89544.054688 | 69626.210938 | 109461.890625 | 90028.406250 | 63601.425781 | 116455.390625 |
| 29 | 1 | 2017-09-21 23:00:00 | 78090.210938 | 58157.574219 | 98022.843750 | 78823.953125 | 52396.972656 | 105250.937500 |

Predict method with confidence interval

To generate forecasts use the predict method. The predict method takes two arguments: h (the forecast horizon) and level.

- h (int): represents the forecast h steps into the future. In this case, 30 hours ahead.

- level (list of floats): this optional parameter is used for probabilistic forecasting. Set the level (or confidence percentile) of your prediction interval. For example, level=[95] means that the model expects the real value to be inside that interval 95% of the times.

| | unique_id | ds | Add | Multi |
|---|---|---|---|---|
| 0 | 1 | 2017-09-20 18:00:00 | 154164.609375 | 151414.984375 |
| 1 | 1 | 2017-09-20 19:00:00 | 154547.171875 | 152352.640625 |
| 2 | 1 | 2017-09-20 20:00:00 | 128790.359375 | 128274.789062 |
| … | … | … | … | … |
| 27 | 1 | 2017-09-21 21:00:00 | 103021.726562 | 103086.851562 |
| 28 | 1 | 2017-09-21 22:00:00 | 89544.054688 | 90028.406250 |
| 29 | 1 | 2017-09-21 23:00:00 | 78090.210938 | 78823.953125 |

| | unique_id | ds | Add | Add-lo-95 | Add-lo-80 | Add-hi-80 | Add-hi-95 | Multi | Multi-lo-95 | Multi-lo-80 | Multi-hi-80 | Multi-hi-95 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1 | 2017-09-20 18:00:00 | 154164.609375 | 134594.859375 | 141368.640625 | 166960.593750 | 173734.375000 | 151414.984375 | 125296.867188 | 134337.265625 | 168492.703125 | 177533.109375 |
| 1 | 1 | 2017-09-20 19:00:00 | 154547.171875 | 134970.062500 | 141746.390625 | 167347.953125 | 174124.265625 | 152352.640625 | 126234.515625 | 135274.921875 | 169430.359375 | 178470.765625 |
| 2 | 1 | 2017-09-20 20:00:00 | 128790.359375 | 109205.242188 | 115984.335938 | 141596.375000 | 148375.484375 | 128274.789062 | 102156.671875 | 111197.070312 | 145352.515625 | 154392.906250 |
| … | … | … | … | … | … | … | … | … | … | … | … | … |
| 27 | 1 | 2017-09-21 21:00:00 | 103021.726562 | 83118.632812 | 90007.796875 | 116035.656250 | 122924.812500 | 103086.851562 | 76659.867188 | 85807.171875 | 120366.523438 | 129513.835938 |
| 28 | 1 | 2017-09-21 22:00:00 | 89544.054688 | 69626.210938 | 76520.476562 | 102567.632812 | 109461.890625 | 90028.406250 | 63601.425781 | 72748.734375 | 107308.085938 | 116455.390625 |
| 29 | 1 | 2017-09-21 23:00:00 | 78090.210938 | 58157.574219 | 65056.960938 | 91123.460938 | 98022.843750 | 78823.953125 | 52396.972656 | 61544.281250 | 96103.632812 | 105250.937500 |
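To see how the level maps to the width of the interval, the sketch below assumes the bounds are symmetric normal-quantile intervals around the point forecast. That is an assumption of this example, although the tabulated numbers are consistent with it. It takes the first row of the Add model, backs out the implied standard deviation from the 95% bounds, and rebuilds the 80% bounds.

```python
from statistics import NormalDist


def z_multiplier(level):
    """Two-sided standard normal quantile for a level given in percent."""
    return NormalDist().inv_cdf(0.5 + level / 200.0)


# First row of the table above (model Add).
point, lo95, hi95 = 154164.609375, 134594.859375, 173734.375

# Implied forecast standard deviation under the normality assumption.
sigma = (hi95 - lo95) / (2 * z_multiplier(95))

lo80 = point - z_multiplier(80) * sigma
hi80 = point + z_multiplier(80) * sigma
print(round(lo80, 1), round(hi80, 1))
```

The reconstructed bounds agree with the Add-lo-80 and Add-hi-80 columns to within rounding, which is why a higher level always yields a wider interval around the same point forecast.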

Cross-validation

In previous steps, we’ve taken our historical data to predict the future. However, to assess its accuracy we would also like to know how the model would have performed in the past. To assess the accuracy and robustness of your models on your data, perform Cross-Validation.

With time series data, Cross Validation is done by defining a sliding window across the historical data and predicting the period following it. This form of cross-validation allows us to arrive at a better estimation of our model’s predictive abilities across a wider range of temporal instances while also keeping the data in the training set contiguous, as is required by our models. The following graph depicts such a Cross Validation Strategy:

Perform time series cross-validation

Cross-validation of time series models is considered a best practice but most implementations are very slow. The statsforecast library implements cross-validation as a distributed operation, making the process less time-consuming to perform. If you have big datasets you can also perform Cross Validation in a distributed cluster using Ray, Dask or Spark.

In this case, we want to evaluate the performance of each model over the last 3 windows (n_windows=3), moving the forecast origin forward by 30 hours between windows (step_size=30), as reflected in the cutoff column of the output below. Depending on your computer, this step should take around 1 min.

The cross_validation method from the StatsForecast class takes the following arguments.

- df: training data frame.

- h (int): represents h steps into the future that are being forecasted. In this case, 30 hours ahead.

- step_size (int): step size between each window. In other words: how often do you want to run the forecasting processes.

- n_windows (int): number of windows used for cross validation. In other words: what number of forecasting processes in the past do you want to evaluate.

The result is a data frame with the following columns:

- unique_id: series identifier.

- ds: datestamp or temporal index.

- cutoff: the last datestamp or temporal index for the n_windows.

- y: true value.

- model: columns with the model’s name and fitted value.

| | unique_id | ds | cutoff | y | Add | Multi |
|---|---|---|---|---|---|---|
| 0 | 1 | 2017-09-18 06:00:00 | 2017-09-18 05:00:00 | 99440.0 | 134578.328125 | 133820.109375 |
| 1 | 1 | 2017-09-18 07:00:00 | 2017-09-18 05:00:00 | 97655.0 | 133548.781250 | 133734.000000 |
| 2 | 1 | 2017-09-18 08:00:00 | 2017-09-18 05:00:00 | 97655.0 | 134798.656250 | 135216.046875 |
| … | … | … | … | … | … | … |
| 87 | 1 | 2017-09-21 21:00:00 | 2017-09-20 17:00:00 | 103080.0 | 103021.726562 | 103086.851562 |
| 88 | 1 | 2017-09-21 22:00:00 | 2017-09-20 17:00:00 | 95155.0 | 89544.054688 | 90028.406250 |
| 89 | 1 | 2017-09-21 23:00:00 | 2017-09-20 17:00:00 | 80285.0 | 78090.210938 | 78823.953125 |
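The cutoff column can be reproduced by hand, which helps to check how h, step_size and n_windows interact. The sketch below assumes h=30, step_size=30 and n_windows=3 on hourly data ending at 2017-09-21 23:00:00; these values are inferred from the 90 rows and the cutoffs in the table above, and `cv_cutoffs` is a hypothetical helper rather than a library function.

```python
from datetime import datetime, timedelta


def cv_cutoffs(last_timestamp, h, step_size, n_windows, step=timedelta(hours=1)):
    """Return the last training timestamp of each window, oldest first."""
    cutoffs = []
    for i in range(n_windows):
        # The newest window leaves exactly h observations for testing;
        # each earlier window moves the cutoff back by step_size observations.
        offset = h + (n_windows - 1 - i) * step_size
        cutoffs.append(last_timestamp - offset * step)
    return cutoffs


cuts = cv_cutoffs(datetime(2017, 9, 21, 23), h=30, step_size=30, n_windows=3)
for c in cuts:
    print(c)
```

With step_size equal to h the test windows do not overlap, so the three windows together cover the last 90 hours of the series.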

Model Evaluation

Now we are going to evaluate our model with the results of the predictions. We will use different metrics (MAE, MAPE, MASE, RMSE and SMAPE) to evaluate the accuracy.

| | unique_id | metric | Add | Multi |
|---|---|---|---|---|
| 0 | 1 | mae | 4306.244531 | 4886.992188 |
| 1 | 1 | mape | 0.038087 | 0.043549 |
| 2 | 1 | mase | 0.532045 | 0.603797 |
| 3 | 1 | rmse | 5415.015573 | 5862.473702 |
| 4 | 1 | smape | 0.018708 | 0.021433 |
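For reference, the five metrics can be written out in plain Python. This sketch assumes the SMAPE convention that divides by |y| + |ŷ| (bounded between 0 and 1), which matches the table above, where SMAPE is roughly half of MAPE. It also assumes MASE is scaled by the in-sample seasonal naive error. The toy numbers are invented.

```python
import math


def mae(y, yhat):
    return sum(abs(a - f) for a, f in zip(y, yhat)) / len(y)


def rmse(y, yhat):
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(y, yhat)) / len(y))


def mape(y, yhat):
    return sum(abs(a - f) / abs(a) for a, f in zip(y, yhat)) / len(y)


def smape(y, yhat):
    # Assumed convention: |y - yhat| / (|y| + |yhat|), so values lie in [0, 1].
    return sum(abs(a - f) / (abs(a) + abs(f)) for a, f in zip(y, yhat)) / len(y)


def mase(y, yhat, y_train, season_length):
    # Scale by the in-sample MAE of the seasonal naive forecast.
    naive = [abs(y_train[t] - y_train[t - season_length])
             for t in range(season_length, len(y_train))]
    return mae(y, yhat) / (sum(naive) / len(naive))


# Invented toy values, for illustration only.
actual, predicted = [100, 200], [110, 180]
print(mae(actual, predicted))    # 15.0
print(mape(actual, predicted))
print(mase(actual, predicted, y_train=[1, 3, 6, 10], season_length=1))  # 5.0
```

A MASE below 1, as in the table above, means the model beats the seasonal naive forecast on average.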

References

- Changquan Huang • Alla Petukhina. Springer series (2022). Applied Time Series Analysis and Forecasting with Python.

- Ivan Svetunkov. Forecasting and Analytics with the Augmented Dynamic Adaptive Model (ADAM)

- James D. Hamilton. Time Series Analysis Princeton University Press, Princeton, New Jersey, 1st Edition, 1994.

- Nixtla HoltWinters API

- Pandas available frequencies.

- Rob J. Hyndman and George Athanasopoulos (2018). “Forecasting Principles and Practice (3rd ed)”.

- Seasonal periods- Rob J Hyndman.