Machine learning forecasting methods are defined by many hyperparameters that control their behavior, with effects ranging from their speed and memory requirements to their predictive performance. For a long time, manual hyperparameter tuning prevailed. Because this approach is time-consuming, automated hyperparameter optimization methods have been introduced, proving more efficient than manual tuning, grid search, and random search.

The BaseAuto class offers a shared API for hyperparameter optimization algorithms such as Optuna, HyperOpt, and Dragonfly, among others, through ray. This gives you access to grid search, Bayesian optimization, and other state-of-the-art tools like Hyperband. Understanding the impact of hyperparameters is still a valuable skill, as it helps in designing informed hyperparameter spaces that are faster to explore automatically.
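
To make the idea of exploring a hyperparameter space concrete, here is a minimal random-search sketch in plain Python. It is not how BaseAuto or ray implement the search; the search space, the `toy_validation_loss` objective, and the `random_search` helper are all hypothetical and only stand in for training a model and scoring it on the validation set.

```python
import random

# Hypothetical search space: each hyperparameter maps to its candidate values.
search_space = {
    "hidden_size": [64, 128, 256, 512],
    "num_layers": [2, 3, 4],
    "learning_rate": [1e-4, 1e-3, 1e-2],
}


def toy_validation_loss(config):
    """Stand-in for training a model and evaluating it on the validation set."""
    # Toy objective whose minimum is at hidden_size=256, num_layers=3, lr=1e-3.
    return (
        abs(config["hidden_size"] - 256) / 256
        + abs(config["num_layers"] - 3)
        + abs(config["learning_rate"] - 1e-3) * 100
    )


def random_search(space, objective, num_samples, seed=1):
    """Sample `num_samples` configurations and keep the one with the lowest loss."""
    rng = random.Random(seed)
    best_config, best_loss = None, float("inf")
    for _ in range(num_samples):
        # Draw one candidate value per hyperparameter.
        config = {name: rng.choice(values) for name, values in space.items()}
        loss = objective(config)
        if loss < best_loss:
            best_config, best_loss = config, loss
    return best_config, best_loss


best_config, best_loss = random_search(search_space, toy_validation_loss, num_samples=10)
print(best_config, best_loss)
```

The full grid for this toy space has 4 x 3 x 3 = 36 configurations, so 10 samples cover only part of it. Removing implausible candidate values shrinks the grid, which is one way an informed space becomes faster to explore with the same sampling budget.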

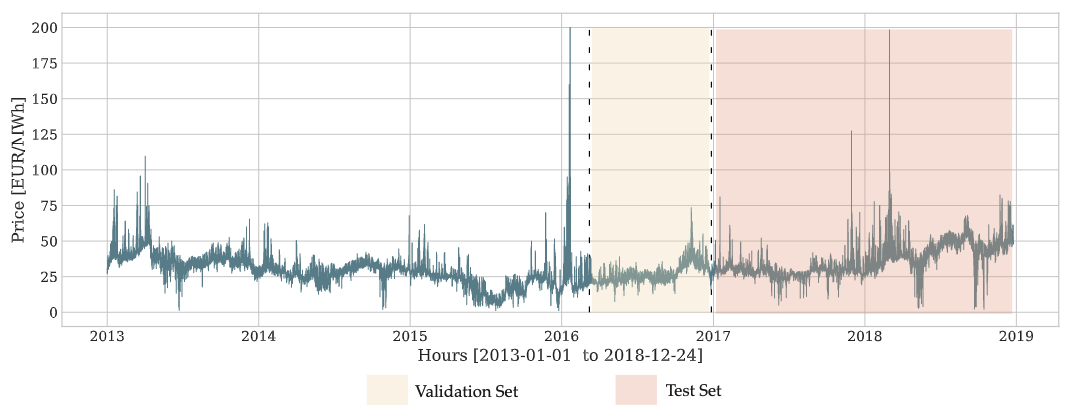

Figure 1. Example of dataset split (left), validation (yellow) and test (orange). The hyperparameter optimization guiding signal is obtained from the validation set.
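
The split in Figure 1 can be sketched in a few lines of plain Python. This is an illustration only, not NeuralForecast's implementation: `temporal_split` is a hypothetical helper, and it assumes the test segment is the last `test_size` observations with the validation segment immediately before it.

```python
def temporal_split(series, val_size, test_size):
    """Split a time-ordered sequence into train, validation, and test segments.

    The test segment is the last `test_size` observations, and the validation
    segment is the `val_size` observations that immediately precede it.
    """
    if val_size < 0 or test_size < 0:
        raise ValueError("val_size and test_size must be non-negative")
    if val_size + test_size >= len(series):
        raise ValueError("no observations left for training")
    test_start = len(series) - test_size
    val_start = test_start - val_size
    return series[:val_start], series[val_start:test_start], series[test_start:]


y = list(range(1, 37))  # 36 toy observations in time order
train, val, test = temporal_split(y, val_size=12, test_size=12)
print(len(train), len(val), len(test))
```

Because the segments are contiguous and ordered in time, the validation loss is always computed on data that comes after the training data and before the test data.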

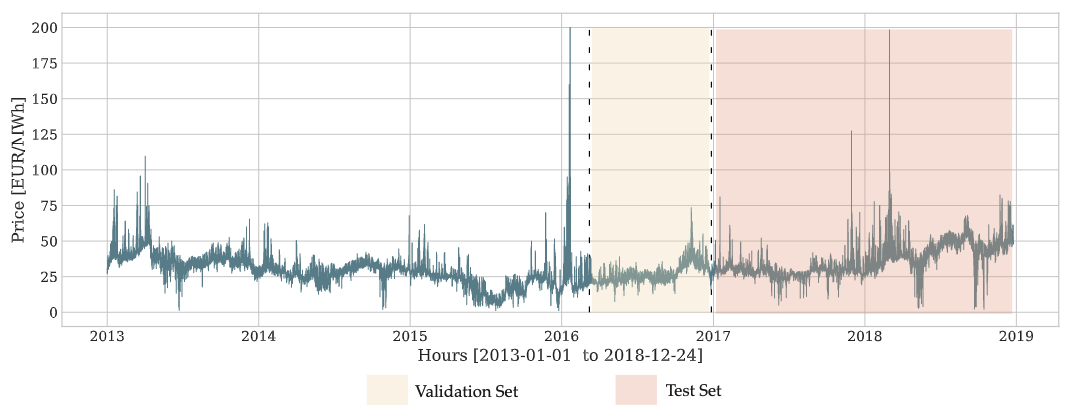

Figure 1. Example of dataset split (left), validation (yellow) and test (orange). The hyperparameter optimization guiding signal is obtained from the validation set.

BaseAuto

    BaseAuto(
        cls_model,
        h,
        loss,
        valid_loss,
        config,
        search_alg=BasicVariantGenerator(random_state=1),
        num_samples=10,
        time_budget=None,
        refit_with_val=False,
        verbose=False,
        alias=None,
        backend="ray",
        callbacks=None,
        ray_options=None,
        optuna_options=None,
        cpus=None,
        gpus=None,
    )

Bases: LightningModule

Class for automatic hyperparameter optimization. It builds on top of ray to give access to a wide variety of hyperparameter optimization tools, ranging from classic grid search to Bayesian optimization and the HyperBand algorithm.

The validation loss to be optimized is defined by the config['loss'] dictionary value; the config also contains the rest of the hyperparameter search space.

Note that the success of this hyperparameter optimization relies heavily on a strong correlation between the validation and test periods.
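
One way to probe the validation/test relationship on historical data is to compare how the two periods rank a set of candidate configurations. The sketch below computes a Spearman rank correlation in plain Python. The loss values are hypothetical numbers chosen for illustration, the `ranks` and `spearman` helpers are not part of NeuralForecast, and the check is meant as a diagnostic only: test losses should not be used to select the final configuration.

```python
def ranks(values):
    """Return the rank (0 = smallest) of each value; assumes there are no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order):
        result[index] = rank
    return result


def spearman(x, y):
    """Spearman rank correlation of two equally long sequences without ties."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of the same length (at least 2)")
    d2 = sum((rx - ry) ** 2 for rx, ry in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n**2 - 1))


# Hypothetical losses of five candidate configurations on each period.
valid_losses = [0.90, 0.42, 0.55, 0.70, 0.48]
test_losses = [0.95, 0.50, 0.47, 0.66, 0.52]

rho = spearman(valid_losses, test_losses)
best_on_valid = valid_losses.index(min(valid_losses))
best_on_test = test_losses.index(min(test_losses))
print(rho, best_on_valid, best_on_test)
```

In this toy case the two periods mostly agree on the ordering, yet the configuration that wins on validation is not the one that wins on test. The weaker the rank agreement, the less the validation signal says about test performance.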

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| cls_model | PyTorch/PyTorchLightning model | See the neuralforecast.models collection. | required |
| h | int | Forecast horizon. | required |
| loss | PyTorch module | Instantiated train loss class from the losses collection. | required |
| valid_loss | PyTorch module | Instantiated validation loss class from the losses collection. | required |
| config | dict or callable | Dictionary with a ray.tune-defined search space, or a function that takes an optuna trial and returns a configuration dict. | required |
| search_alg | ray.tune.search variant or optuna.sampler | For ray see https://docs.ray.io/en/latest/tune/api_docs/suggestion.html. For optuna see https://optuna.readthedocs.io/en/stable/reference/samplers/index.html. | BasicVariantGenerator(random_state=1) |
| num_samples | int | Number of hyperparameter optimization steps/samples. | 10 |
| time_budget | int | Time budget in seconds for the hyperparameter search. | None |
| refit_with_val | bool | Whether the refit of the best model should preserve val_size. | False |
| verbose | bool | Track progress. | False |
| alias | str | Custom name of the model. | None |
| backend | str | Backend used to search the hyperparameter space, either 'ray' or 'optuna'. | 'ray' |
| callbacks | list of callable | List of functions to call during the optimization process. ray reference: https://docs.ray.io/en/latest/tune/tutorials/tune-metrics.html. optuna reference: https://optuna.readthedocs.io/en/stable | None |
| ray_options | RayOptions | Container for Ray-only options. See RayOptions for the supported fields (run_config, scheduler, cpus, gpus). Only used with backend='ray'. | None |
| optuna_options | OptunaOptions | Container for Optuna-only options. See OptunaOptions for the supported fields (study_kwargs, create_study_kwargs). Only used with backend='optuna'. | None |
| cpus | | Deprecated, will be removed in v3.2.0. Pass ray_options=RayOptions(cpus=...) instead. | None |
| gpus | | Deprecated, will be removed in v3.2.0. Pass ray_options=RayOptions(gpus=...) instead. | None |
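
When `config` is a callable, it receives a trial object and returns a plain configuration dict. The sketch below shows one way to sanity-check such a function without running a study. `StubTrial` is a hypothetical stand-in written for this example; it only mimics the `suggest_categorical(name, choices)` and `suggest_int(name, low, high)` calls of an optuna trial and is not part of optuna or NeuralForecast. The hyperparameter values are illustrative.

```python
import random


class StubTrial:
    """Hypothetical stand-in that mimics two optuna trial methods for testing."""

    def __init__(self, seed=0):
        self._rng = random.Random(seed)
        self.params = {}  # records every suggested value by name

    def suggest_categorical(self, name, choices):
        value = self._rng.choice(list(choices))
        self.params[name] = value
        return value

    def suggest_int(self, name, low, high):
        value = self._rng.randint(low, high)  # both bounds inclusive
        self.params[name] = value
        return value


def config_f(trial):
    """Callable search space: searched values come from the trial, the rest are fixed."""
    return {
        "hidden_size": trial.suggest_categorical("hidden_size", [256, 512]),
        "num_layers": trial.suggest_int("num_layers", 2, 4),
        "input_size": 12,
        "max_steps": 10,
        "val_check_steps": 5,
    }


trial = StubTrial(seed=1)
config = config_f(trial)
print(config)
```

Keeping the fixed values and the searched values in the same returned dict mirrors the dictionary form of `config`, where constants sit next to search-space entries.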

BaseAuto.fit

    fit(
        dataset, val_size=0, test_size=0, random_seed=None, distributed_config=None
    )

Performs the hyperparameter optimization specified by config.

The optimization is performed on the TimeSeriesDataset using temporal cross-validation, with the validation set sequentially preceding the test set.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| dataset | NeuralForecast’s TimeSeriesDataset | NeuralForecast’s TimeSeriesDataset. | required |
| val_size | int | Size of the temporal validation set (must be greater than 0). | 0 |
| test_size | int | Size of the temporal test set. | 0 |
| random_seed | int | Random seed for hyperparameter exploration algorithms (not yet implemented). | None |

Returns:

| Name | Type | Description |
|---|---|---|
| self | | Fitted instance of BaseAuto with best hyperparameters and results. |

BaseAuto.predict

predict(dataset, step_size=1, h=None, **data_kwargs)

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| dataset | NeuralForecast’s TimeSeriesDataset | NeuralForecast’s TimeSeriesDataset. | required |
| step_size | int | Steps between sequential predictions. | 1 |
| h | int | Prediction horizon. If None, uses the model’s fitted horizon. | None |
| **data_kwargs | | Additional parameters for the dataset module. | {} |

Returns:

| Name | Type | Description |
|---|---|---|
| y_hat | | Numpy predictions of the NeuralForecast model. |

Usage Example

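    import optuna
    from ray import tune

    from neuralforecast.common._base_auto import BaseAuto
    from neuralforecast.losses.numpy import mae
    from neuralforecast.losses.pytorch import MAE, MSE
    from neuralforecast.models import MLP

    # `dataset` (a TimeSeriesDataset built from the training data) and
    # `Y_test_df` (the held-out test frame) are assumed to be defined beforehand.

    # --- Ray backend ---
    class RayLogLossesCallback(tune.Callback):
        """Print train and validation losses when a Ray trial completes."""

        def on_trial_complete(self, iteration, trials, trial, **info):
            result = trial.last_result
            print(40 * '-' + 'Trial finished' + 40 * '-')
            print(f'Train loss: {result["train_loss"]:.2f}. Valid loss: {result["loss"]:.2f}')
            print(80 * '-')

    # Search space defined with ray.tune; plain values are kept fixed.
    config = {
        "hidden_size": tune.choice([512]),
        "num_layers": tune.choice([3, 4]),
        "input_size": 12,
        "max_steps": 10,
        "val_check_steps": 5,
    }

    # Note: cpus and gpus are deprecated in favor of ray_options (see the parameter table).
    auto = BaseAuto(h=12, loss=MAE(), valid_loss=MSE(), cls_model=MLP, config=config,
                    num_samples=2, cpus=1, gpus=0, callbacks=[RayLogLossesCallback()])
    auto.fit(dataset=dataset)
    y_hat = auto.predict(dataset=dataset)
    assert mae(Y_test_df['y'].values, y_hat[:, 0]) < 200

    # --- Optuna backend ---
    def config_f(trial):
        """Search space as a function of an optuna trial."""
        return {
            "hidden_size": trial.suggest_categorical('hidden_size', [512]),
            "num_layers": trial.suggest_categorical('num_layers', [3, 4]),
            "input_size": 12,
            "max_steps": 10,
            "val_check_steps": 5,
        }

    class OptunaLogLossesCallback:
        """Print train and validation losses when an Optuna trial finishes."""

        def __call__(self, study, trial):
            metrics = trial.user_attrs['METRICS']
            print(40 * '-' + 'Trial finished' + 40 * '-')
            print(f'Train loss: {metrics["train_loss"]:.2f}. Valid loss: {metrics["loss"]:.2f}')
            print(80 * '-')

    auto2 = BaseAuto(h=12, loss=MAE(), valid_loss=MSE(), cls_model=MLP, config=config_f,
                     search_alg=optuna.samplers.RandomSampler(), num_samples=2,
                     backend='optuna', callbacks=[OptunaLogLossesCallback()])
    auto2.fit(dataset=dataset)
    assert isinstance(auto2.results, optuna.Study)
    y_hat2 = auto2.predict(dataset=dataset)
    assert mae(Y_test_df['y'].values, y_hat2[:, 0]) < 200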

References

- James Bergstra, Remi Bardenet, Yoshua Bengio, and Balazs Kegl (2011). “Algorithms for Hyper-Parameter Optimization”. In: Advances in Neural Information Processing Systems. url: https://proceedings.neurips.cc/paper/2011/file/86e8f7ab32cfd12577bc2619bc635690-Paper.pdf
- Kirthevasan Kandasamy, Karun Raju Vysyaraju, Willie Neiswanger, Biswajit Paria, Christopher R. Collins, Jeff Schneider, Barnabas Poczos, and Eric P. Xing (2019). “Tuning Hyperparameters without Grad Students: Scalable and Robust Bayesian Optimisation with Dragonfly”. Journal of Machine Learning Research. url: https://arxiv.org/abs/1903.06694
- Lisha Li, Kevin Jamieson, Giulia DeSalvo, Afshin Rostamizadeh, and Ameet Talwalkar (2016). “Hyperband: A Novel Bandit-Based Approach to Hyperparameter Optimization”. Journal of Machine Learning Research. url: https://arxiv.org/abs/1603.06560