
Introduction

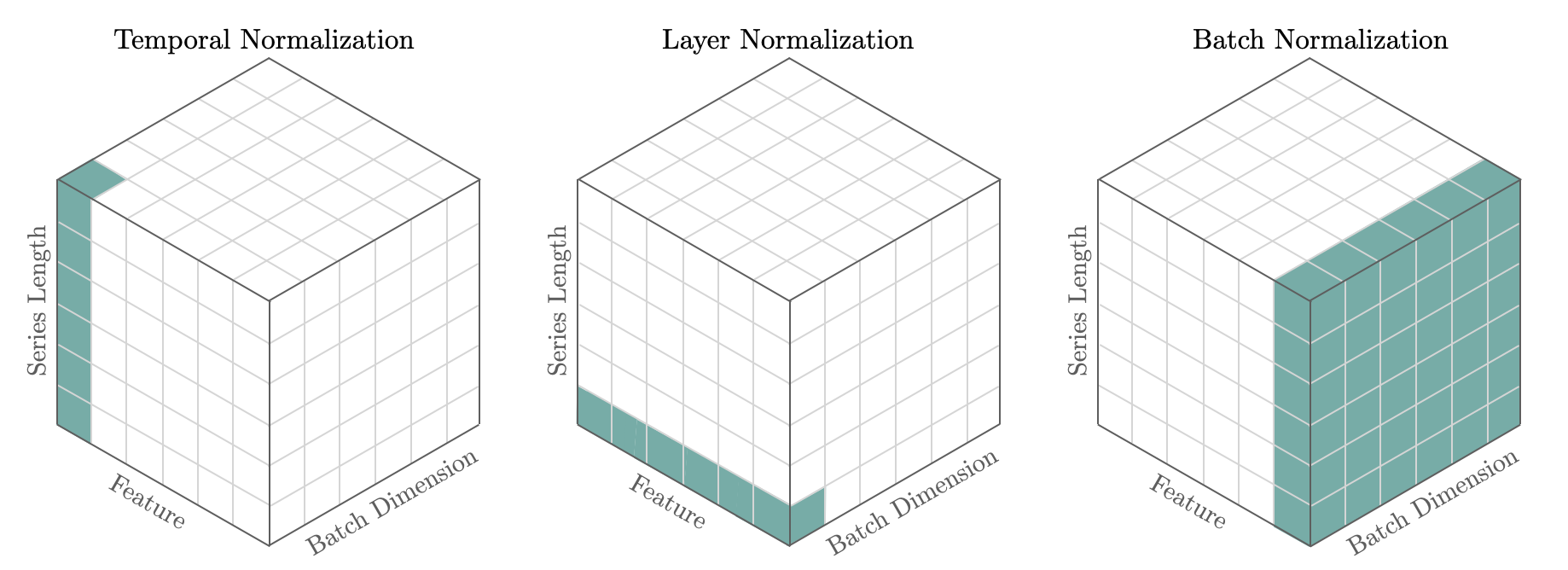

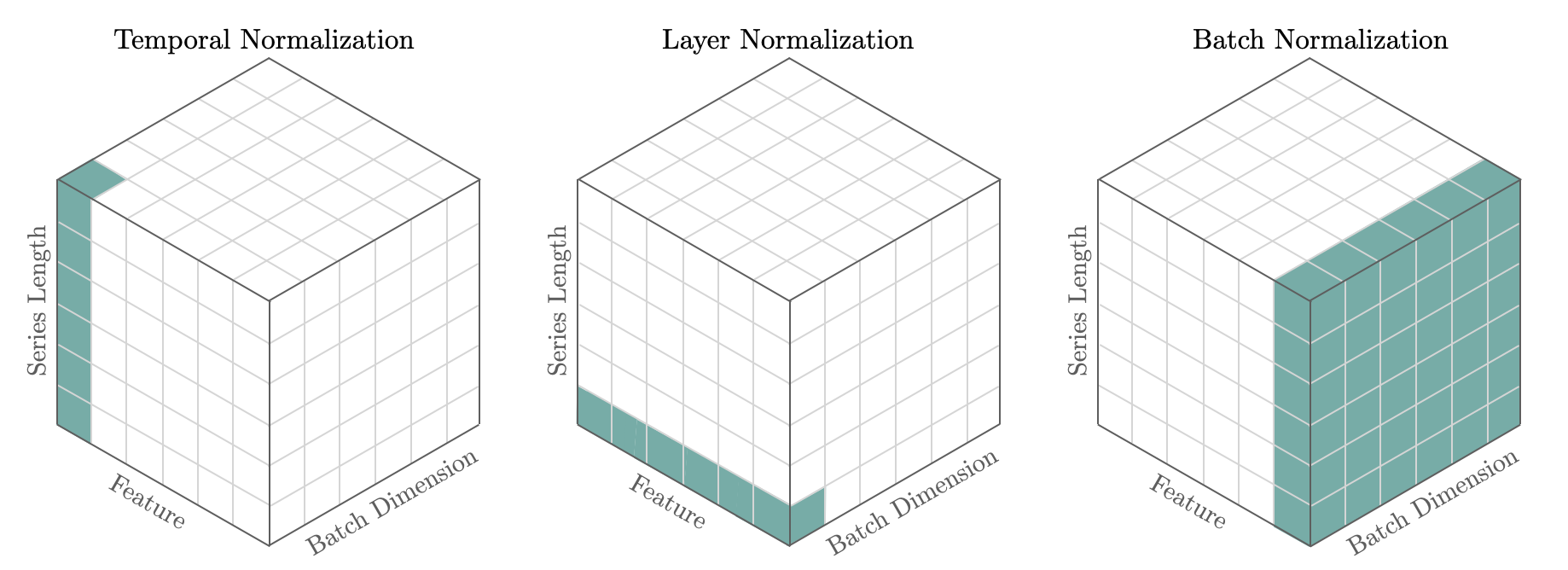

Temporal normalization has proven essential in neural forecasting tasks, as it enables the network's non-linearities to express themselves. Forecasting scaling methods focus on the temporal dimension, where most of the variance dwells, in contrast to other deep learning techniques such as BatchNorm, which normalizes across the batch and temporal dimensions, and LayerNorm, which normalizes across the feature dimension. Currently we support the following techniques: std, median, norm, norm1, invariant, revin.


Figure 1. Illustration of temporal normalization (left), layer normalization (center) and batch normalization (right). The entries in green show the components used to compute the normalizing statistics.
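To make the axis distinction concrete, here is a minimal dependency-free sketch that uses nested Python lists in place of tensors. The helper names `temporal_means` and `feature_means` are hypothetical and are not part of the library; they only show which entries feed each statistic.

```python
from statistics import fmean

# Toy batch with layout [B=2][T=3][C=2]; values are illustrative only.
x = [
    [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]],
    [[101.0, 110.0], [102.0, 120.0], [103.0, 130.0]],
]

def temporal_means(batch):
    """One mean per (series, channel), computed along the time axis."""
    return [
        [fmean(step[c] for step in series) for c in range(len(series[0]))]
        for series in batch
    ]

def feature_means(batch):
    """One mean per (series, time step), computed along the feature axis."""
    return [[fmean(step) for step in series] for series in batch]

print(temporal_means(x))  # [[2.0, 20.0], [102.0, 120.0]]
print(feature_means(x))   # [[5.5, 11.0, 16.5], [105.5, 111.0, 116.5]]
```

The temporal statistics keep each series and channel on its own level, whereas the feature-axis statistics mix channels that live on different scales.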


1. Auxiliary Functions

masked_median

masked_median(x, mask, dim=-1, keepdim=True)

Computes the median of x along dim, ignoring values where mask is False. x and mask need to be broadcastable.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Tensor to compute the median of along dimension dim. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| dim | int | Dimension to take the median of. | -1 |
| keepdim | bool | Whether to keep the reduced dimension of x. | True |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Masked median of x along dim. |
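As an illustration of the masking semantics only, the following plain-Python sketch computes a masked median over a single 1-D series. The helper `masked_median_1d` is hypothetical; it is not the library function and works on lists rather than tensors. Note that `statistics.median` averages the two middle values when an even number of entries is kept, which may differ from the tensor implementation.

```python
import statistics

def masked_median_1d(values, mask):
    """Median of the entries of `values` whose mask flag is truthy."""
    kept = [v for v, m in zip(values, mask) if m]
    if not kept:
        # Mirrors the documented requirement: the mask must not be all False.
        raise ValueError("mask must keep at least one value")
    return statistics.median(kept)

x = [1.0, 2.0, 100.0, 4.0]
mask = [True, True, False, True]   # the outlier 100.0 is masked out
print(masked_median_1d(x, mask))   # 2.0
```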

masked_mean

masked_mean(x, mask, dim=-1, keepdim=True)

Computes the mean of x along dim, ignoring values where mask is False. x and mask need to be broadcastable.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Tensor to compute the mean of along dimension dim. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| dim | int | Dimension to take the mean of. | -1 |
| keepdim | bool | Whether to keep the reduced dimension of x. | True |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Masked mean of x along dim. |
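The same idea for the mean can be sketched in plain Python. The helper `masked_mean_1d` below is hypothetical and only illustrates why an all-False mask is a problem: the denominator would be zero.

```python
def masked_mean_1d(values, mask):
    """Mean of the entries of `values` whose mask flag is truthy."""
    kept = [v for v, m in zip(values, mask) if m]
    if not kept:
        # An all-False mask would mean dividing by zero.
        raise ValueError("mask must keep at least one value")
    return sum(kept) / len(kept)

x = [1.0, 2.0, 100.0, 3.0]
mask = [True, True, False, True]   # the outlier 100.0 is masked out
print(masked_mean_1d(x, mask))     # 2.0
```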

2. Scalers

minmax_statistics

minmax_statistics(x, mask, eps=1e-06, dim=-1)

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Input tensor. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| eps | float | Small value to avoid division by zero. | 1e-06 |
| dim | int | Dimension over which to compute min and max. | -1 |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Same shape as x, except scaled. |
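The page does not spell out the formula for this scaler, so the sketch below assumes conventional min-max scaling to roughly the [0, 1] range, z = (x - min) / (max - min), with statistics taken over unmasked entries only. The helper `minmax_scale_1d` and the place where `eps` is added are assumptions for illustration, not the library's implementation.

```python
def minmax_scale_1d(values, mask, eps=1e-6):
    """Scale values to about [0, 1] using min and max of the unmasked entries."""
    kept = [v for v, m in zip(values, mask) if m]
    lo, hi = min(kept), max(kept)
    scale = (hi - lo) + eps            # eps placement is an assumption
    return [(v - lo) / scale for v in values]

x = [10.0, 20.0, 30.0, 999.0]
mask = [True, True, True, False]       # 999.0 does not influence min/max
z = minmax_scale_1d(x, mask)
print(z[:3])                           # approximately [0.0, 0.5, 1.0]
```

Masked entries are still transformed with the same statistics, so they can fall outside the target range.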

minmax1_statistics

minmax1_statistics(x, mask, eps=1e-06, dim=-1)

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Input tensor. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| eps | float | Small value to avoid division by zero. | 1e-06 |
| dim | int | Dimension over which to compute min and max. | -1 |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Same shape as x, except scaled. |
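No formula is given here either. The sketch below assumes the common variant that maps the unmasked range to roughly [-1, 1], z = 2 (x - min) / (max - min) - 1. The helper `minmax1_scale_1d` and the `eps` placement are hypothetical choices made for illustration.

```python
def minmax1_scale_1d(values, mask, eps=1e-6):
    """Scale values to about [-1, 1] using min and max of the unmasked entries."""
    kept = [v for v, m in zip(values, mask) if m]
    lo, hi = min(kept), max(kept)
    scale = (hi - lo) + eps            # eps placement is an assumption
    return [2.0 * (v - lo) / scale - 1.0 for v in values]

x = [10.0, 20.0, 30.0]
mask = [True, True, True]
z = minmax1_scale_1d(x, mask)
print(z)                               # approximately [-1.0, 0.0, 1.0]
```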

std_statistics

std_statistics(x, mask, dim=-1, eps=1e-06)

Standardizes features by removing the mean and scaling to unit variance along the dim dimension.

For example, for base_windows models, the scaled features are obtained as (with dim=1):

$$\mathbf{z} = (\mathbf{x}_{[B,T,C]} - \bar{\mathbf{x}}_{[B,1,C]}) / \hat{\sigma}_{[B,1,C]}$$

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Input tensor. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| eps | float | Small value to avoid division by zero. | 1e-06 |
| dim | int | Dimension over which to compute mean and std. | -1 |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Same shape as x, except scaled. |
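The formula above can be sketched for a single 1-D series in plain Python. The helper `std_scale_1d` is hypothetical; it assumes the population standard deviation (dividing by N) and adds `eps` to the denominator, both of which are illustrative choices rather than confirmed library behavior.

```python
import statistics

def std_scale_1d(values, mask, eps=1e-6):
    """Subtract the masked mean and divide by the masked standard deviation."""
    kept = [v for v, m in zip(values, mask) if m]
    mean = statistics.fmean(kept)
    std = statistics.pstdev(kept)      # population std is an assumption
    z = [(v - mean) / (std + eps) for v in values]
    return z, mean, std

x = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0, 1000.0]
mask = [True] * 8 + [False]            # the last value is excluded from the statistics
z, mean, std = std_scale_1d(x, mask)
print(mean, std)                       # 5.0 2.0
```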

robust_statistics

robust_statistics(x, mask, dim=-1, eps=1e-06)

Standardizes features by removing the median and scaling by the mean absolute deviation (mad), a robust estimator of spread.

For example, for base_windows models, the scaled features are obtained as (with dim=1):

$$\mathbf{z} = (\mathbf{x}_{[B,T,C]} - \mathrm{median}(\mathbf{x})_{[B,1,C]}) / \mathrm{mad}(\mathbf{x})_{[B,1,C]}$$

$$\mathrm{mad}(\mathbf{x}) = \frac{1}{N} \sum |\mathbf{x} - \mathrm{median}(\mathbf{x})|$$

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Input tensor. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| eps | float | Small value to avoid division by zero. | 1e-06 |
| dim | int | Dimension over which to compute median and mad. | -1 |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Same shape as x, except scaled. |
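A plain-Python sketch of the median and mad formulas above, for one 1-D series. The helper `robust_scale_1d` is hypothetical, and adding `eps` to the denominator is an assumption made to keep the division safe.

```python
import statistics

def robust_scale_1d(values, mask, eps=1e-6):
    """Subtract the masked median and divide by the mean absolute deviation."""
    kept = [v for v, m in zip(values, mask) if m]
    med = statistics.median(kept)
    mad = statistics.fmean(abs(v - med) for v in kept)
    z = [(v - med) / (mad + eps) for v in values]
    return z, med, mad

x = [1.0, 2.0, 3.0, 4.0, 100.0]        # one large outlier
mask = [True] * 5
z, med, mad = robust_scale_1d(x, mask)
print(med)                             # 3.0, unaffected by the outlier
```

The median stays at 3.0 even though the outlier pulls the mean of this series up to 22.0, which is why the robust scaler is less sensitive to extreme values.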

invariant_statistics

invariant_statistics(x, mask, dim=-1, eps=1e-06)

Standardizes features by removing the median and scaling by the mean absolute deviation (mad), and then applies an arcsinh transformation.

For example, for base_windows models, the scaled features are obtained as (with dim=1):

$$\mathbf{z} = (\mathbf{x}_{[B,T,C]} - \mathrm{median}(\mathbf{x})_{[B,1,C]}) / \mathrm{mad}(\mathbf{x})_{[B,1,C]}$$

$$\mathbf{z} = \mathrm{arcsinh}(\mathbf{z})$$

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Input tensor. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| eps | float | Small value to avoid division by zero. | 1e-06 |
| dim | int | Dimension over which to compute median and mad. | -1 |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Same shape as x, except scaled. |
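The two-step formula above can be sketched for a 1-D series as follows. The helper `invariant_scale_1d` is hypothetical, and the `eps` placement is an assumption; the point is only to show how arcsinh compresses large robust-scaled values while preserving sign and zero.

```python
import math
import statistics

def invariant_scale_1d(values, mask, eps=1e-6):
    """Robust-scale with median and mad, then apply arcsinh element-wise."""
    kept = [v for v, m in zip(values, mask) if m]
    med = statistics.median(kept)
    mad = statistics.fmean(abs(v - med) for v in kept)
    robust = [(v - med) / (mad + eps) for v in values]
    return [math.asinh(r) for r in robust], robust

x = [0.0, 1.0, 2.0, 3.0, 1000.0]
mask = [True] * 5
z, robust = invariant_scale_1d(x, mask)
# arcsinh is close to the identity near zero and grows logarithmically for large inputs
print(robust[-1] > z[-1])              # True
```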

identity_statistics

identity_statistics(x, mask, dim=-1, eps=1e-06)

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Input tensor. | required |
| mask | Tensor | Boolean tensor with the same shape as x: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |
| eps | float | Small value to avoid division by zero. | 1e-06 |
| dim | int | Dimension over which to compute the statistics. | -1 |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Original x. |

3. TemporalNorm Module

TemporalNorm

TemporalNorm(scaler_type='robust', dim=-1, eps=1e-06, num_features=None)

Bases: Module

Temporal Normalization

Standardization of the features is a common requirement for many machine learning estimators, and it is commonly achieved by removing the level and scaling the variance. The TemporalNorm module applies temporal normalization over the batch of inputs as defined by the type of scaler.

$$\mathbf{z}_{[B,T,C]} = \mathrm{Scaler}(\mathbf{x}_{[B,T,C]})$$

If scaler_type is revin, learnable normalization parameters are added on top of the usual normalization technique; the parameters are learned through scale-decoupled global skip connections. The technique is available for point and probabilistic outputs.

$$\hat{\mathbf{z}}_{[B,T,C]} = \hat{\gamma}_{[1,1,C]} \mathbf{z}_{[B,T,C]} + \hat{\beta}_{[1,1,C]}$$
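The per-channel affine step in the revin formula can be sketched with nested lists standing in for a [B, T, C] tensor. The helper `affine_per_channel` is hypothetical, and the values of gamma and beta are made up for illustration; in the module they would be learned parameters.

```python
def affine_per_channel(z, gamma, beta):
    """Apply z_hat[b][t][c] = gamma[c] * z[b][t][c] + beta[c]."""
    return [
        [[g * v + b for v, g, b in zip(step, gamma, beta)] for step in series]
        for series in z
    ]

z = [[[0.5, -1.0], [1.5, 2.0]]]        # layout [B=1][T=2][C=2]

# gamma = 1 and beta = 0 leave the normalized values unchanged
unchanged = affine_per_channel(z, gamma=[1.0, 1.0], beta=[0.0, 0.0])
print(unchanged == z)                  # True

# Arbitrary parameters rescale and shift each channel independently
z_hat = affine_per_channel(z, gamma=[2.0, 0.5], beta=[1.0, -1.0])
print(z_hat)                           # [[[2.0, -1.5], [4.0, 0.0]]]
```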

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| scaler_type | str | Defines the type of scaler used by TemporalNorm. Available: identity, standard, robust, minmax, minmax1, invariant, revin. | 'robust' |
| dim | int, optional | Dimension over which to compute scale and shift. | -1 |
| eps | float, optional | Small value to avoid division by zero. | 1e-06 |
| num_features | int, optional | Number of features, used to initialize the RevIN-like learnable affine parameters. | None |

transform(x, mask)

Center and scale the data.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| x | Tensor | Tensor of shape [batch, time, channels]. | required |
| mask | Tensor | Boolean tensor of shape [batch, time]: True where x is valid and False where x should be masked. The mask should not be all False in any column of dimension dim, to avoid NaNs from zero division. | required |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Same shape as x, except scaled. |

inverse_transform(z, x_shift=None, x_scale=None)

Scale the data back to its original representation.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| z | Tensor | Scaled tensor of shape [batch, time, channels]. | required |
| x_shift | Tensor | Shift tensor of shape [1, 1, channels]. | None |
| x_scale | Tensor | Scale tensor of shape [1, 1, channels]. | None |

Returns:

| Type | Description |
|---|---|
| torch.Tensor | Original data. |
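Conceptually, the inverse step undoes a shift-and-scale transform via x = z * scale + shift. The sketch below shows that round trip on a plain list; `scale_1d` and `unscale_1d` are hypothetical helpers, and using the mean and population standard deviation as shift and scale is just one possible choice.

```python
import math
import statistics

def scale_1d(values, shift, scale):
    """Forward step: z = (x - shift) / scale."""
    return [(v - shift) / scale for v in values]

def unscale_1d(z, shift, scale):
    """Inverse step: x = z * scale + shift."""
    return [v * scale + shift for v in z]

x = [100.0, 110.0, 120.0, 130.0]
shift = statistics.fmean(x)
scale = statistics.pstdev(x)

z = scale_1d(x, shift, scale)
x_back = unscale_1d(z, shift, scale)
print(all(math.isclose(a, b) for a, b in zip(x, x_back)))  # True
```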

Example

```python
import matplotlib.pyplot as plt
import numpy as np
import torch

# Import path assumed from the neuralforecast package layout
from neuralforecast.common._scalers import TemporalNorm

# Declare a synthetic batch to normalize: two features on different scales
x1 = 10**0 * np.arange(36)[:, None]
x2 = 10**1 * np.arange(36)[:, None]
np_x = np.concatenate([x1, x2], axis=1)
np_x = np.repeat(np_x[None, :, :], repeats=2, axis=0)
np_x[0, :, :] = np_x[0, :, :] + 100  # shift the level of the first series

# Mask out the last 12 time steps so they are excluded from the statistics
np_mask = np.ones(np_x.shape)
np_mask[:, -12:, :] = 0

print(f'x.shape [batch, time, features]={np_x.shape}')
print(f'mask.shape [batch, time, features]={np_mask.shape}')

# Validate scalers: scale, then recover the original values
x = 1.0 * torch.tensor(np_x)
mask = torch.tensor(np_mask)
scaler = TemporalNorm(scaler_type='standard', dim=1)
x_scaled = scaler.transform(x=x, mask=mask)
x_recovered = scaler.inverse_transform(x_scaled)

# Original series
plt.plot(x[0, :, 0], label='x1', color='#78ACA8')
plt.plot(x[0, :, 1], label='x2', color='#E3A39A')
plt.title('Before TemporalNorm')
plt.xlabel('Time')
plt.legend()
plt.show()

# Scaled series (x2 is offset by 0.1 so both lines stay visible)
plt.plot(x_scaled[0, :, 0], label='x1', color='#78ACA8')
plt.plot(x_scaled[0, :, 1] + 0.1, label='x2+0.1', color='#E3A39A')
plt.title(f"TemporalNorm '{scaler.scaler_type}'")
plt.xlabel('Time')
plt.legend()
plt.show()

# Series recovered with inverse_transform
plt.plot(x_recovered[0, :, 0], label='x1', color='#78ACA8')
plt.plot(x_recovered[0, :, 1], label='x2', color='#E3A39A')
plt.title('Recovered')
plt.xlabel('Time')
plt.legend()
plt.show()
```