Documentation Index

Fetch the complete documentation index at: https://nixtlaverse.nixtla.io/llms.txt

Use this file to discover all available pages before exploring further.

Generate correlated sample paths for scenario analysis

Simulation vs. Prediction Intervals: When to Use Which

NeuralForecast provides two complementary approaches for reasoning about future uncertainty:Prediction Intervals (predict(level=...))

Prediction intervals answer: “What range of values is the target

likely to fall within at each future time step?”

- Output: Independent confidence bands per horizon step (e.g., 90% interval at step ).

- Each step is treated marginally — no information about how steps relate to each other.

- Best for: monitoring, alerting, dashboarding, reporting confidence bands.

Simulation Paths (simulate(...))

Simulation paths answer: “What are realistic joint future

trajectories the time series could follow?”

- Output: complete trajectories of length , each representing a plausible future.

- Temporal correlations between steps are preserved — if step is high, step is likely high too.

- Best for: scenario analysis, portfolio optimization, supply chain planning, energy dispatch, risk assessment (VaR/CVaR), stochastic programming.

Key Difference: Marginal vs. Joint

Consider electricity price forecasting over 24 hours. A 90% prediction interval tells you the price at hour 12 will likely be between $40 and $80. But it says nothing about whether hours 11, 12, and 13 will all be high simultaneously (a sustained price spike) or whether high and low values alternate randomly. Simulation paths capture this joint structure. From 500 simulated paths you can compute: - P(total cost > budget) — requires summing across correlated hours - P(at least 3 consecutive hours above $70) — requires temporal ordering - Expected shortfall (CVaR) — requires the joint tail distribution These questions cannot be answered from marginal prediction intervals alone.Available Simulation Methods

| Method | Temporal Correlation | Description |

|---|---|---|

gaussian_copula (default) | AR(1) Gaussian copula | Draws correlated uniform samples via Cholesky decomposition, maps through marginal CDFs. Parametric correlation structure. |

schaake_shuffle | Empirical (historical templates) | Draws independent marginal samples, reorders them to match rank structure of historical trajectory templates. Nonparametric — captures arbitrary dependence. |

DistributionLoss (Normal, StudentT,

Poisson, etc.) and mixture losses (GMM, PMM, NBMM) — produce

arbitrary quantiles natively. - MQLoss / HuberMQLoss — uses

the model’s trained quantile grid. - IQLoss / HuberIQLoss —

evaluates multiple quantiles via repeated forward passes. - Point

losses (MAE, MSE, etc.) — requires prediction_intervals

(Conformal Prediction) set during fit() to build a quantile grid from

calibration scores.

1. Setup

2. Load Data

We use the AirPassengers dataset — a classic monthly time series of airline passenger counts.| unique_id | ds | y | |

|---|---|---|---|

| 127 | 1.0 | 1959-08-31 | 559.0 |

| 128 | 1.0 | 1959-09-30 | 463.0 |

| 129 | 1.0 | 1959-10-31 | 407.0 |

| 130 | 1.0 | 1959-11-30 | 362.0 |

| 131 | 1.0 | 1959-12-31 | 405.0 |

3. Train Models

We train five models showcasing different loss types: - NHITS + GMM — Gaussian Mixture Model, a mixtureDistributionLoss. - NHITS +

DistributionLoss(Normal) — parametric distribution output. - NHITS +

MQLoss — Multi-Quantile Loss, directly optimizes quantile levels. -

NHITS + IQLoss — Implicit Quantile Loss, can evaluate any quantile

at inference. - NHITS + MAE with Conformal Prediction — point-loss

model with calibrated prediction intervals.

Since the MAE model needs conformal prediction intervals, we pass

prediction_intervals to fit().

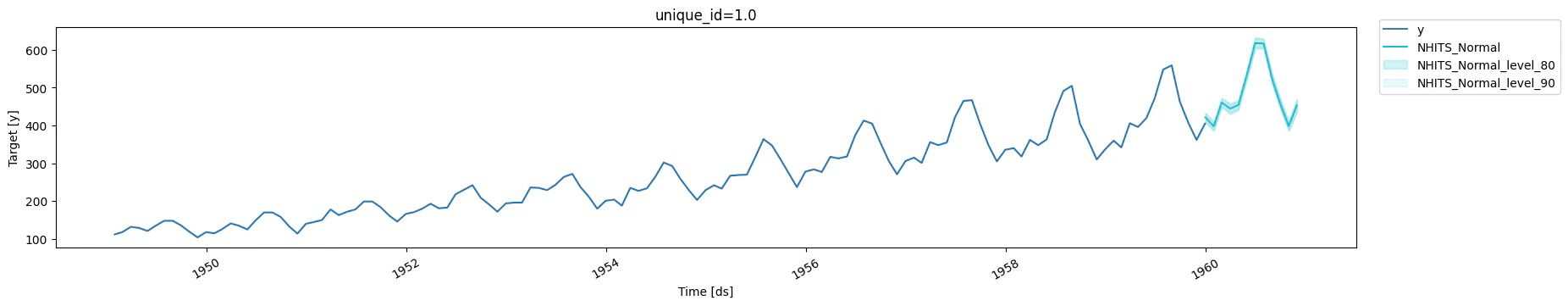

4. Prediction Intervals (Baseline)

First, let’s see the standard prediction intervals — these are marginal (per-step) uncertainty bands.

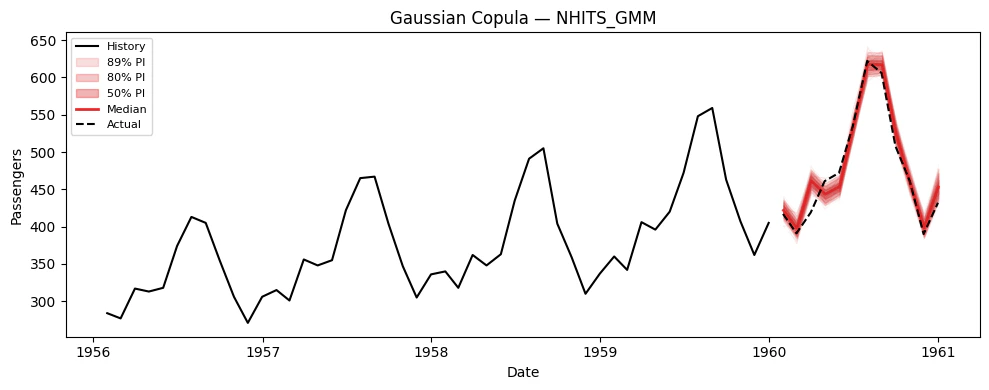

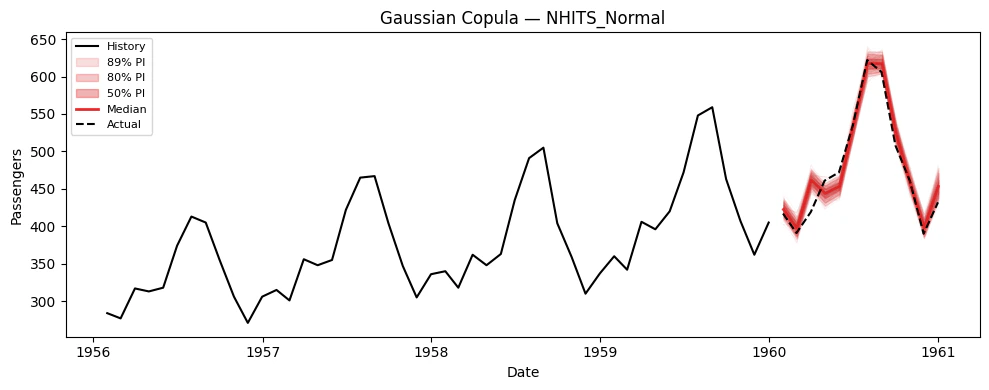

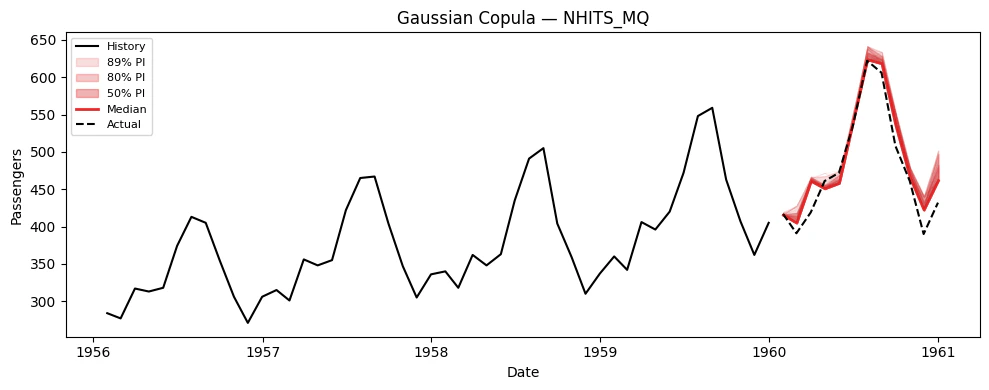

5. Simulation Paths

Now let’s generate correlated simulation paths using thesimulate()

method. This returns a long-format DataFrame with columns

[unique_id, ds, sample_id, model_1, model_2, ...].

Plotting helper

Generate simulation paths

simulate() generates n_paths correlated sample paths for every model

× series combination.

| unique_id | ds | sample_id | NHITS_GMM | NHITS_Normal | NHITS_MQ | NHITS_IQL | NHITS_MAE | |

|---|---|---|---|---|---|---|---|---|

| 0 | 1.0 | 1960-01-01 | 0 | 424.577554 | 424.765725 | 415.206802 | 409.314326 | 438.886536 |

| 1 | 1.0 | 1960-02-01 | 0 | 404.827416 | 404.260575 | 414.203812 | 388.755778 | 416.497602 |

| 2 | 1.0 | 1960-03-01 | 0 | 469.169496 | 469.978479 | 464.367880 | 466.584470 | 482.319912 |

| 3 | 1.0 | 1960-04-01 | 0 | 450.245044 | 451.145088 | 454.416200 | 462.261074 | 459.493987 |

| 4 | 1.0 | 1960-05-01 | 0 | 472.641135 | 471.130120 | 475.350769 | 486.486899 | 493.030374 |

| 5 | 1.0 | 1960-06-01 | 0 | 528.117486 | 527.280406 | 539.266724 | 497.874372 | 530.030655 |

| 6 | 1.0 | 1960-07-01 | 0 | 594.137207 | 592.315796 | 623.242188 | 507.372412 | 586.462607 |

| 7 | 1.0 | 1960-08-01 | 0 | 604.532895 | 604.907234 | 617.977478 | 531.723887 | 583.457802 |

| 8 | 1.0 | 1960-09-01 | 0 | 530.447128 | 529.882652 | 545.858492 | 566.198389 | 524.067964 |

| 9 | 1.0 | 1960-10-01 | 0 | 458.686895 | 458.105852 | 466.378017 | 466.831645 | 468.689723 |

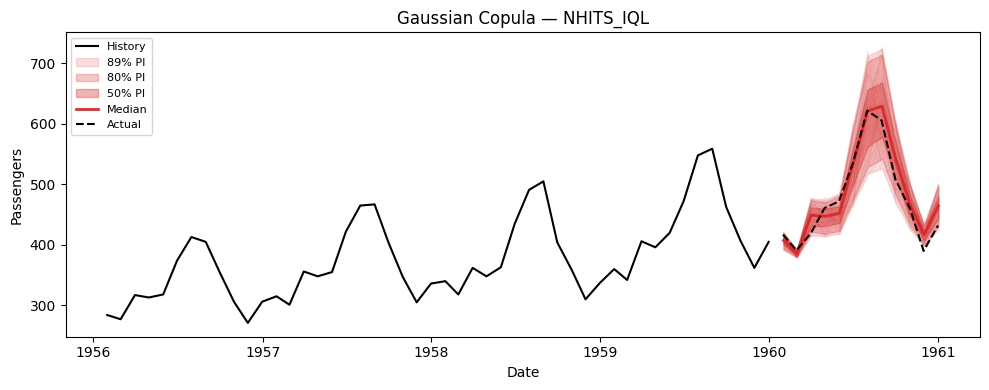

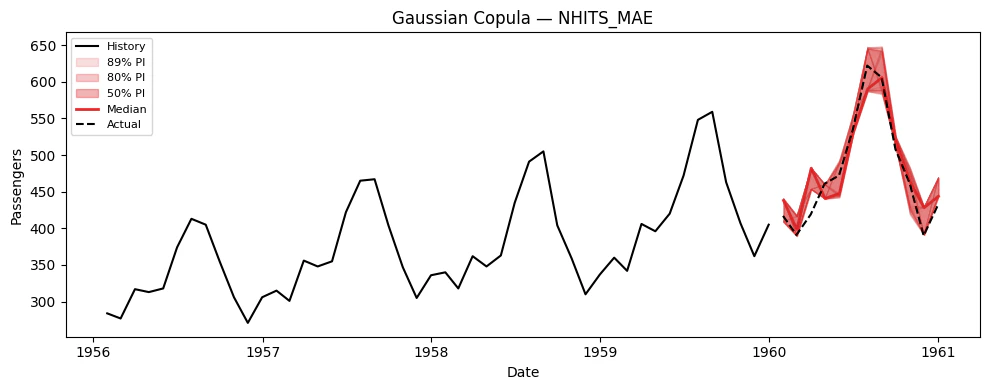

Visualize paths for each model

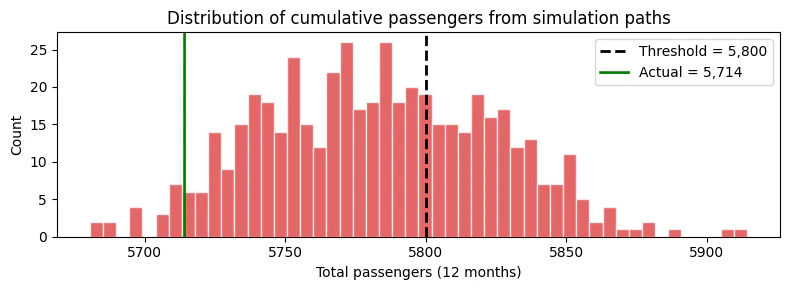

6. Using Simulation Paths for Decision-Making

The key advantage of simulation paths over prediction intervals is answering joint probabilistic questions. Here are concrete examples.Example: Probability of exceeding a cumulative threshold

Suppose we have a capacity of 5,800 total passengers over the next 12 months. What is the probability of exceeding this capacity? This question requires the joint distribution — it cannot be answered from marginal intervals.

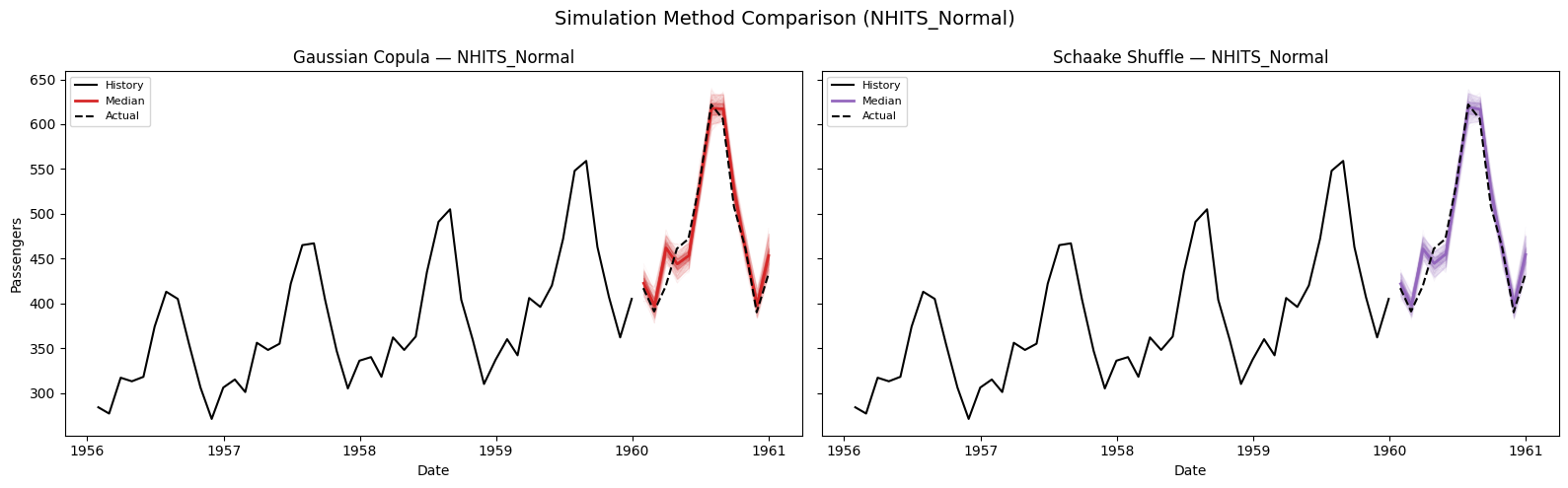

7. Comparing Simulation Methods: Gaussian Copula vs. Schaake Shuffle

Both methods use the same marginal quantile forecasts but differ in how they introduce temporal correlation across horizon steps. Let’s compare them on the NHITS_Normal model.

8. Evaluating Marginal vs. Joint Distributions

We can evaluate both the marginal distribution (frompredict(quantiles=...)) and the joint distribution (from

simulate()) using the same metrics. For each model we compare:

- Point metrics: MAE, MSE on the median forecast.

- Probabilistic metric: scaled CRPS on the quantile forecasts —

either from

predict(marginal) or derived from simulation paths (joint).

| Marginal (predict) | Joint — Gaussian Copula | Joint — Schaake Shuffle | |

|---|---|---|---|

| Metric | |||

| MAE | 12.669571 | 12.942172 | 12.516764 |

| MSE | 276.954919 | 288.357154 | 275.652889 |

| Scaled CRPS | 0.021440 | 0.022133 | 0.021545 |

- MAE / MSE (point metrics): Both simulation methods use the median of the sample paths as their point forecast. Since the marginal quantiles and simulation-derived quantiles share the same underlying model, the median forecasts are similar but not identical — simulation introduces sampling variability.

- Scaled CRPS (probabilistic metric): Measures the quality of the

full quantile distribution. The marginal quantiles come directly

from the model’s predictive distribution, while the

simulation-derived quantiles are empirical (computed from

n_pathssamples). With enough paths, the simulation CRPS should converge to the marginal CRPS.

9. Summary

| Question | Use |

|---|---|

| “What range is step likely in?” | predict(level=...) — prediction intervals |

| “What are realistic joint future trajectories?” | simulate(n_paths=...) — sample paths |

| “What is P(total > threshold)?” | simulate() then sum paths |

| “What is P(3 consecutive spikes)?” | simulate() then check path patterns |

| “What is the CVaR of my portfolio?” | simulate() then compute tail statistics |

Simulation Methods

| Method | Use when… |

|---|---|

gaussian_copula (default) | You want smooth, parametric temporal correlation (AR(1) structure). Fast and robust. |

schaake_shuffle | You want nonparametric dependence from historical data. Captures asymmetric / non-Gaussian correlation patterns. |

Compatible Losses

| Loss type | How quantiles are obtained |

|---|---|

DistributionLoss, GMM, PMM, NBMM | Arbitrary quantiles from parametric distribution |

MQLoss / HuberMQLoss | Uses the model’s trained quantile grid |

IQLoss / HuberIQLoss | Multiple forward passes, one per quantile |

Point losses (MAE, MSE, etc.) | Conformal Prediction intervals (requires prediction_intervals during fit()) |

References

- Baron, E. et al. (2025). Efficiently generating correlated sample paths from multi-step time series foundation models. NeurIPS 2025 Workshop.

- Clark, M. et al. (2004). The Schaake shuffle: A method for reconstructing space-time variability. Journal of Hydrometeorology, 5(1).