Transfer learning refers to pre-training a flexible model on a large dataset and then using it on other data with little to no additional training. It is one of the most outstanding 🚀 achievements in Machine Learning and has many practical applications. For time series forecasting, the technique lets you get lightning-fast predictions ⚡, bypassing the tradeoff between accuracy and speed (more than 30 times faster than our already fast AutoARIMA for similar accuracy). This notebook shows how to generate a pre-trained model and use it to forecast new time series the model has never seen.

Table of Contents

- Installing MLForecast

- Load M3 Monthly Data

- Instantiate MLForecast and fit the model

- Use the pre-trained model to predict on AirPassengers

- Evaluate Results

Installing Libraries

Load M3 Data

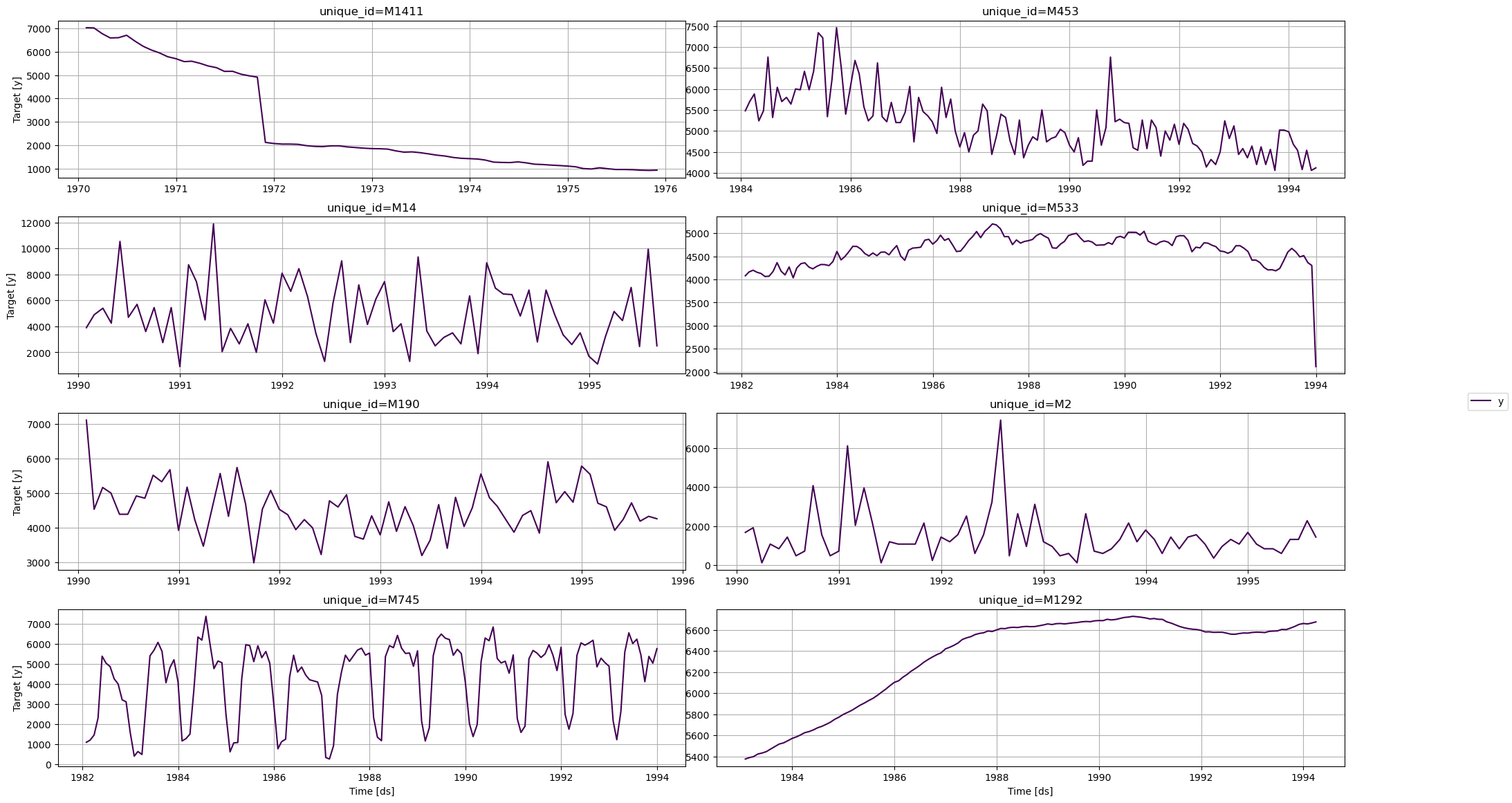

The `M3` class will automatically download the complete M3 dataset and process it.

It returns three DataFrames: `Y_df` contains the values of the target variables, `X_df` contains exogenous calendar features, and `S_df` contains static features for each time series. For this example we will only use `Y_df`.

If you want to use your own data, just replace `Y_df`. Be sure to use a long format with a structure similar to our dataset.

This example uses only 1_000 series to speed up computations. Remove the filter to use the whole dataset.
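
As a rough illustration of the long format and the series filter mentioned above, the sketch below builds a toy frame and keeps only the first few series. The `unique_id` and `ds` column names match the forecast output shown later on this page; the `y` target column, the toy values, and the `n_series` and `Y_subset` names are assumptions made for this example, not the notebook's actual code.

```python
import pandas as pd

# Two toy monthly series stacked in long format:
# one row per (series identifier, timestamp) pair.
Y_df = pd.DataFrame({
    "unique_id": ["store_a"] * 3 + ["store_b"] * 3,
    "ds": list(pd.date_range("2020-01-01", periods=3, freq="MS")) * 2,
    "y": [10.0, 12.0, 11.0, 200.0, 210.0, 205.0],
})

# Keep only the first n_series identifiers to shorten computation.
n_series = 1  # hypothetical; the text above uses 1_000
keep_ids = Y_df["unique_id"].unique()[:n_series]
Y_subset = Y_df[Y_df["unique_id"].isin(keep_ids)].reset_index(drop=True)

print(Y_subset)
```

Dropping the two filter lines leaves the full frame untouched, which corresponds to using the whole dataset.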

Model Training

Using the `MLForecast.fit` method you can train a set of models on your dataset. You can modify the hyperparameters of the models to get better accuracy; in this case we will use the default hyperparameters of `lgb.LGBMRegressor`.

The `MLForecast` object has the following parameters:

- `models`: a list of sklearn-like (`fit` and `predict`) models.

- `freq`: a string indicating the frequency of the data. See pandas' available frequencies.

- `differences`: differences to take of the target before computing the features. These are restored at the forecasting step.

- `lags`: lags of the target to use as features.

This example uses only `differences` and `lags` to produce features. See the full documentation for all available features.
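
To make the two feature types concrete, here is a minimal pandas sketch of what "take a difference of the target, then use lags of it as features" can look like. It is only an illustration of the idea and not how MLForecast computes features internally; the toy data and the column names `y_diff1`, `lag1`, and `lag2` are assumptions.

```python
import pandas as pd

# Two short toy series in long format (timestamps omitted for brevity).
df = pd.DataFrame({
    "unique_id": ["a"] * 5 + ["b"] * 5,
    "y": [1.0, 3.0, 6.0, 10.0, 15.0, 5.0, 5.0, 7.0, 7.0, 9.0],
})

# First difference of the target, computed separately for each series.
df["y_diff1"] = df.groupby("unique_id")["y"].diff(1)

# Lags of the differenced target, again per series so that values
# never leak from one series into another.
diff_grouped = df.groupby("unique_id")["y_diff1"]
for lag in (1, 2):
    df[f"lag{lag}"] = diff_grouped.shift(lag)

# Rows at the start of each series lack enough history and are dropped.
features = df.dropna().reset_index(drop=True)
print(features)
```

Each remaining row pairs a differenced target value with its own recent history, which is the kind of table a regressor such as `lgb.LGBMRegressor` can be trained on.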

Any settings are passed into the constructor. Then you call its `fit` method and pass in the historical data frame `Y_df_M3`.

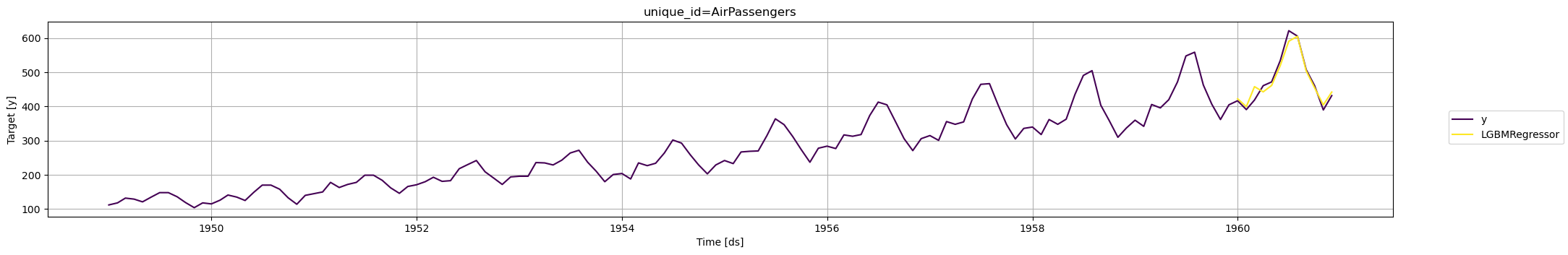

Transfer M3 to AirPassengers

Now we can transfer the trained model to forecast `AirPassengers` with the `MLForecast.predict` method. We just have to pass the new dataframe to the `new_data` argument.

| | unique_id | ds | LGBMRegressor |
|---|---|---|---|
| 0 | AirPassengers | 1960-01-01 | 422.740096 |
| 1 | AirPassengers | 1960-02-01 | 399.480193 |
| 2 | AirPassengers | 1960-03-01 | 458.220289 |
| 3 | AirPassengers | 1960-04-01 | 442.960385 |
| 4 | AirPassengers | 1960-05-01 | 461.700482 |
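
The transfer step can be pictured with a small NumPy sketch: fit one model on features pooled from several training series, then reuse the fitted coefficients on a series that was never part of training. This is a conceptual illustration under simplifying assumptions, not the MLForecast implementation. It swaps LightGBM for ordinary least squares, uses a single first difference with lags 1 and 2, and the helper names `make_rows`, `fit_pooled`, and `predict_new` are hypothetical.

```python
import numpy as np

LAGS = [1, 2]  # lags of the differenced target used as features

def make_rows(y, lags):
    """Build (features, targets) from the first difference of one series."""
    d = np.diff(y)
    max_lag = max(lags)
    X = np.array([[d[t - lag] for lag in lags] for t in range(max_lag, len(d))])
    return X, d[max_lag:]

def fit_pooled(series_list, lags):
    """Fit a single linear model on rows pooled from every training series."""
    parts = [make_rows(y, lags) for y in series_list]
    X = np.vstack([p[0] for p in parts])
    target = np.concatenate([p[1] for p in parts])
    coef, *_ = np.linalg.lstsq(X, target, rcond=None)
    return coef

def predict_new(coef, y_new, lags, h):
    """Forecast h steps of an unseen series, feeding predictions back in."""
    d = list(np.diff(y_new))
    level = float(y_new[-1])
    out = []
    for _ in range(h):
        feats = np.array([d[-lag] for lag in lags])
        d_hat = float(feats @ coef)
        d.append(d_hat)
        level += d_hat  # undo the first difference to get back to levels
        out.append(level)
    return np.array(out)

# "Pre-train" on three toy series, then forecast a fourth, unseen one.
t = np.arange(30, dtype=float)
train = [a * t**2 for a in (1.0, 2.0, 3.0)]
coef = fit_pooled(train, LAGS)

new_series = 0.5 * t**2 + 10.0
forecast = predict_new(coef, new_series, LAGS, h=3)
print(coef, forecast)
```

No refitting happens in `predict_new`: the new series only supplies the history from which lag features are built, which is why predicting on new data is so much cheaper than training a model per series.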

Evaluate Results

We evaluate the forecasts of the pre-trained model with the Mean Absolute Error (mae).
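
For reference, the Mean Absolute Error is the average of the absolute differences between actual and forecast values. The sketch below is a plain-Python version for illustration; the numbers are made up and are not results from this notebook, and the notebook itself may use a library implementation instead.

```python
def mae(y_true, y_pred):
    """Mean Absolute Error: the average of |actual - forecast|."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(a - f) for a, f in zip(y_true, y_pred)) / len(y_true)

# Made-up numbers for illustration only.
actual = [100.0, 110.0, 120.0]
forecast = [98.0, 113.0, 120.0]
error = mae(actual, forecast)  # (2 + 3 + 0) / 3
print(error)
```

Because the error is expressed in the same units as the target, it can be read directly as "how many units off, on average".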