Tutorial on how to achieve full control of the configure_optimizers() behavior of NeuralForecast models

NeuralForecast models let us customize the default optimizer and learning rate scheduler via the optimizer, optimizer_kwargs, lr_scheduler, and lr_scheduler_kwargs arguments. However, this is not sufficient to support ReduceLROnPlateau, for instance, because that scheduler requires a monitor parameter to be specified. This tutorial provides an example of how to support the use of ReduceLROnPlateau.
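To see why a monitored metric is needed, consider what a reduce-on-plateau rule does: it compares each new metric value with the best one seen so far and lowers the learning rate once there has been no improvement for more than `patience` consecutive steps. The sketch below is a simplified pure-Python illustration of that rule, not PyTorch's implementation (it ignores thresholds and cooldown); the class name `PlateauRule` and its default values are hypothetical.

```python
class PlateauRule:
    """Simplified reduce-on-plateau rule for a metric that should decrease."""

    def __init__(self, lr, factor=0.5, patience=2):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.best = float("inf")
        self.bad_steps = 0

    def step(self, metric):
        # The rule cannot act without a metric value; this is what `monitor` supplies.
        if metric < self.best:
            self.best = metric
            self.bad_steps = 0
        else:
            self.bad_steps += 1
        # Shrink the learning rate once the metric has stalled for too long.
        if self.bad_steps > self.patience:
            self.lr *= self.factor
            self.bad_steps = 0
        return self.lr


rule = PlateauRule(lr=0.1)
losses = [1.0, 0.8, 0.8, 0.8, 0.8, 0.7]
history = [rule.step(loss) for loss in losses]
```

A scheduler such as StepLR only needs a step count, which is why keyword arguments alone are enough for it, whereas a plateau rule has to be told which logged quantity to watch.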

Load libraries

Data

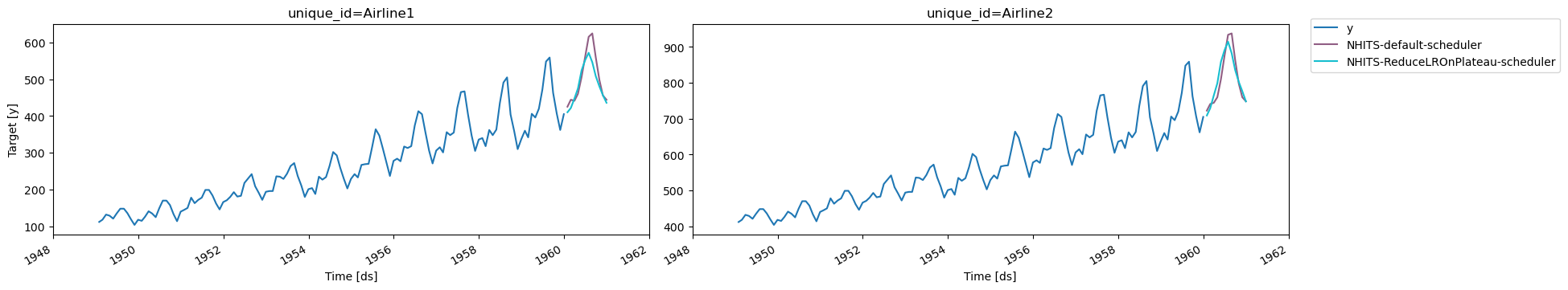

We use the AirPassengers dataset for this demonstration.

Model training

We now train an NHITS model on the above dataset. We consider two different predictions:

1. Training using the default configure_optimizers().
2. Training by overriding configure_optimizers() in a subclass of the NHITS model.
