Hierarchical Forecast’s reconciliation and evaluation.

This notebook offers a step-by-step guide to creating a hierarchical forecasting pipeline. In the pipeline we use the HierarchicalForecast and StatsForecast core classes to create base predictions, reconcile them, and evaluate them.

We use the TourismL dataset, which summarizes the large Australian national visitor survey.

Outline

1. Installing Packages
2. Prepare TourismL dataset
   - Read and aggregate
   - StatsForecast’s Base Predictions
3. Reconcile
4. Evaluate

1. Installing HierarchicalForecast

We assume you have StatsForecast and HierarchicalForecast already installed. If not, check this guide for instructions on how to install HierarchicalForecast.

2. Preparing TourismL Dataset

2.1 Read Hierarchical Dataset

| | unique_id | ds | y |
|---|---|---|---|
| 0 | total | 1998-03-31 | 84503 |
| 1 | total | 1998-06-30 | 65312 |
| 2 | total | 1998-09-30 | 72753 |
| 3 | total | 1998-12-31 | 70880 |
| 4 | total | 1999-03-31 | 86893 |
| … | … | … | … |
| 3191 | nt-oth-noncity | 2003-12-31 | 132 |
| 3192 | nt-oth-noncity | 2004-03-31 | 12 |
| 3193 | nt-oth-noncity | 2004-06-30 | 40 |
| 3194 | nt-oth-noncity | 2004-09-30 | 186 |
| 3195 | nt-oth-noncity | 2004-12-31 | 144 |

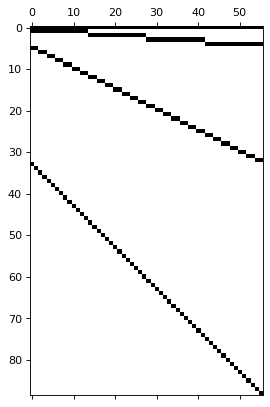
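To make the "read and aggregate" step concrete, here is a minimal sketch of how bottom-level rows in this long format roll up into their parent series. It assumes that bottom-level ids follow a `state-purpose-zone` pattern (as `nt-oth-noncity` suggests); the parent labels, the `aggregate` helper, and the toy values are hypothetical and are not taken from the dataset loader.

```python
from collections import defaultdict

# Toy bottom-level rows in long format: (unique_id, ds, y).
# The values are made up for illustration only.
bottom_rows = [
    ("nsw-hol-city", "1998-03-31", 10.0),
    ("nsw-hol-noncity", "1998-03-31", 20.0),
    ("nt-oth-city", "1998-03-31", 3.0),
    ("nt-oth-noncity", "1998-03-31", 7.0),
]


def aggregate(rows):
    """Sum bottom-level observations into every ancestor series.

    Assumes ids look like 'state-purpose-zone'. The parent ids built
    here ('total', 'hol', 'nsw-hol', ...) are hypothetical labels for
    the Country, Country/Purpose and Country/Purpose/State levels.
    """
    totals = defaultdict(float)
    for unique_id, ds, y in rows:
        state, purpose, _zone = unique_id.split("-")
        for series_id in ("total", purpose, f"{state}-{purpose}", unique_id):
            totals[(series_id, ds)] += y
    return dict(totals)


hierarchy = aggregate(bottom_rows)
for (series_id, ds), value in sorted(hierarchy.items()):
    print(series_id, ds, value)
```

Every aggregate series is by construction the sum of its children, which is the coherence property that reconciliation later restores for the forecasts.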

2.2 StatsForecast’s Base Predictions

This cell computes the base predictions Y_hat_df for all the series in Y_df using StatsForecast’s AutoARIMA. Additionally, we obtain in-sample predictions Y_fitted_df for the reconciliation methods that require them.

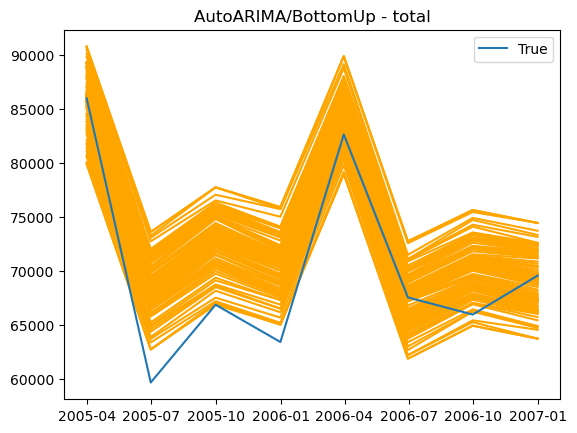
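The difference between out-of-sample base predictions and in-sample fitted values can be illustrated with a much simpler model. The sketch below swaps AutoARIMA for a seasonal naive forecaster, purely as a stand-in; the `seasonal_naive` helper is hypothetical, and the input is the first five `total` observations shown in the table of section 2.1.

```python
def seasonal_naive(y, season_length, h):
    """Return (fitted, forecast) for a seasonal naive model.

    fitted[t] is the in-sample prediction for y[t]; it is None for the
    first season, where no earlier observation exists. forecast holds
    the h out-of-sample predictions that repeat the last season.
    """
    if len(y) < season_length:
        raise ValueError("need at least one full season of history")
    fitted = [None] * season_length + y[:-season_length]
    last_season = y[-season_length:]
    forecast = [last_season[i % season_length] for i in range(h)]
    return fitted, forecast


# First five quarterly 'total' observations from the table above.
y_total = [84503, 65312, 72753, 70880, 86893]
fitted, forecast = seasonal_naive(y_total, season_length=4, h=4)

# In-sample residuals are only defined where a fitted value exists.
residuals = [obs - fit for obs, fit in zip(y_total, fitted) if fit is not None]
print(forecast)
print(residuals)
```

In-sample residuals of this kind are what some reconciliation methods use, for example to estimate the variance of the base forecast errors, which is why the fitted values are kept alongside the forecasts.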

3. Reconcile Predictions
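As a rough illustration of what reconciliation does, the sketch below applies two of the simplest strategies that appear in the evaluation table, bottom-up and top-down with average historical proportions, to a toy two-level hierarchy. It is a minimal stand-alone sketch, not the HierarchicalForecast API: the function names, the series ids, and all numbers are hypothetical.

```python
def bottom_up(bottom_hat):
    """Coherent forecasts obtained by summing the bottom-level forecasts."""
    return {"total": sum(bottom_hat.values()), **bottom_hat}


def top_down_average_proportions(total_hat, history):
    """Split the top-level forecast using average historical proportions.

    history maps each bottom id to its past values. The proportion of a
    series is the mean over time of y_bottom[t] / y_total[t].
    """
    n_obs = len(next(iter(history.values())))
    totals = [sum(series[t] for series in history.values()) for t in range(n_obs)]
    proportions = {
        uid: sum(series[t] / totals[t] for t in range(n_obs)) / n_obs
        for uid, series in history.items()
    }
    bottom = {uid: p * total_hat for uid, p in proportions.items()}
    return {"total": total_hat, **bottom}


# Toy base forecasts for one horizon step: incoherent, since 60 + 30 != 100.
base_hat = {"total": 100.0, "city": 60.0, "noncity": 30.0}
history = {"city": [30.0, 60.0], "noncity": [10.0, 20.0]}

bu = bottom_up({k: v for k, v in base_hat.items() if k != "total"})
td = top_down_average_proportions(base_hat["total"], history)
print(bu)  # keeps the bottom forecasts, replaces the total by their sum
print(td)  # keeps the total forecast, redistributes it to the bottom
```

Both outputs are coherent, but they trust different parts of the base forecasts: bottom-up keeps the bottom level unchanged, while top-down keeps the top level unchanged.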

4. Evaluation

| | level | metric | AutoARIMA/BottomUp | AutoARIMA/TopDown_method-average_proportions | AutoARIMA/TopDown_method-proportion_averages | AutoARIMA/MinTrace_method-ols | AutoARIMA/MinTrace_method-wls_var | AutoARIMA/MinTrace_method-mint_shrink | AutoARIMA/ERM_method-closed_lambda_reg-0.01 |
|---|---|---|---|---|---|---|---|---|---|
| 0 | Country | msse | 1.777±0.0 | 2.488±0.0 | 2.488±0.0 | 2.752±0.0 | 2.569±0.0 | 2.775±0.0 | 3.427±0.0 |
| 2 | Country/Purpose | msse | 1.726±0.0 | 3.181±0.0 | 3.169±0.0 | 2.184±0.0 | 1.876±0.0 | 1.96±0.0 | 3.067±0.0 |
| 4 | Country/Purpose/State | msse | 0.881±0.0 | 1.657±0.0 | 1.652±0.0 | 0.98±0.0 | 0.857±0.0 | 0.867±0.0 | 1.559±0.0 |
| 6 | Country/Purpose/State/CityNonCity | msse | 0.95±0.0 | 1.271±0.0 | 1.269±0.0 | 1.033±0.0 | 0.903±0.0 | 0.912±0.0 | 1.635±0.0 |
| 8 | Overall | msse | 0.973±0.0 | 1.492±0.0 | 1.488±0.0 | 1.087±0.0 | 0.951±0.0 | 0.966±0.0 | 1.695±0.0 |
| 1 | Country | scaled_crps | 0.043±0.0009 | 0.048±0.0006 | 0.048±0.0006 | 0.05±0.0006 | 0.051±0.0006 | 0.053±0.0006 | 0.054±0.0009 |
| 3 | Country/Purpose | scaled_crps | 0.077±0.001 | 0.114±0.0003 | 0.112±0.0004 | 0.09±0.0013 | 0.087±0.0009 | 0.089±0.0009 | 0.106±0.0013 |
| 5 | Country/Purpose/State | scaled_crps | 0.165±0.0009 | 0.249±0.0004 | 0.247±0.0004 | 0.18±0.0018 | 0.169±0.0009 | 0.169±0.0008 | 0.231±0.0021 |
| 7 | Country/Purpose/State/CityNonCity | scaled_crps | 0.218±0.0013 | 0.289±0.0004 | 0.286±0.0004 | 0.228±0.0018 | 0.217±0.0013 | 0.218±0.0011 | 0.302±0.0033 |
| 9 | Overall | scaled_crps | 0.193±0.0011 | 0.266±0.0004 | 0.263±0.0004 | 0.205±0.0017 | 0.194±0.0011 | 0.195±0.0009 | 0.268±0.0027 |
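For readers unfamiliar with the `msse` rows, the sketch below shows one common way to compute a mean squared scaled error. It assumes the scale is the in-sample mean squared error of a lag-1 naive forecast; the scaling used to produce the table may differ, so treat this as an illustration of the idea rather than the exact metric. The function and the toy numbers are hypothetical.

```python
def msse(y, y_hat, y_train):
    """Mean squared scaled error under a lag-1 naive in-sample scaling.

    Assumption: the denominator is the in-sample MSE of the one-step
    naive forecast y_train[t-1]; other scalings (for example a seasonal
    naive benchmark) are also in use.
    """
    mse = sum((obs - pred) ** 2 for obs, pred in zip(y, y_hat)) / len(y)
    naive_sq = [(cur - prev) ** 2 for prev, cur in zip(y_train, y_train[1:])]
    scale = sum(naive_sq) / len(naive_sq)
    return mse / scale


# Toy series: training history, held-out values, and two candidate forecasts.
y_train = [10.0, 12.0, 11.0, 13.0]
y_test = [14.0, 12.0]

score = msse(y_test, [13.0, 13.0], y_train)
perfect = msse(y_test, y_test, y_train)
print(score, perfect)
```

Under this definition a score below 1 means the forecast errors are smaller, on average, than the in-sample errors of the naive benchmark, and lower is better.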

References

- Syama Sundar Rangapuram, Lucien D Werner, Konstantinos Benidis, Pedro Mercado, Jan Gasthaus, Tim Januschowski. (2021). “End-to-End Learning of Coherent Probabilistic Forecasts for Hierarchical Time Series”. Proceedings of the 38th International Conference on Machine Learning (ICML).

- Kin G. Olivares, O. Nganba Meetei, Ruijun Ma, Rohan Reddy, Mengfei Cao, Lee Dicker. (2022). “Probabilistic Hierarchical Forecasting with Deep Poisson Mixtures”. Submitted to the International Journal of Forecasting. Working paper available at arXiv.